As artificial intelligence systems become increasingly conversational, Samsung Display is making a counterintuitive bet: the future of humanoid robots will still depend on screens.

While voice interfaces have reduced friction in consumer AI products, recognition errors and contextual limitations remain persistent challenges. For humanoid robots operating in homes, hospitals, or public spaces, visual confirmation may prove critical. Samsung Display and domestic rival LG Display are positioning OLED panels as the interface layer that bridges machine cognition and human trust.

The strategy reflects a broader industrial calculation. As robotics moves from industrial arms toward service-oriented humanoids, display manufacturers see a new growth frontier beyond smartphones and televisions.

Screens as a Reliability Layer

Voice control is often framed as the natural interface for AI-powered machines. But real-world deployments reveal gaps in comprehension, interference from ambient noise, and user reluctance to rely solely on audio feedback.

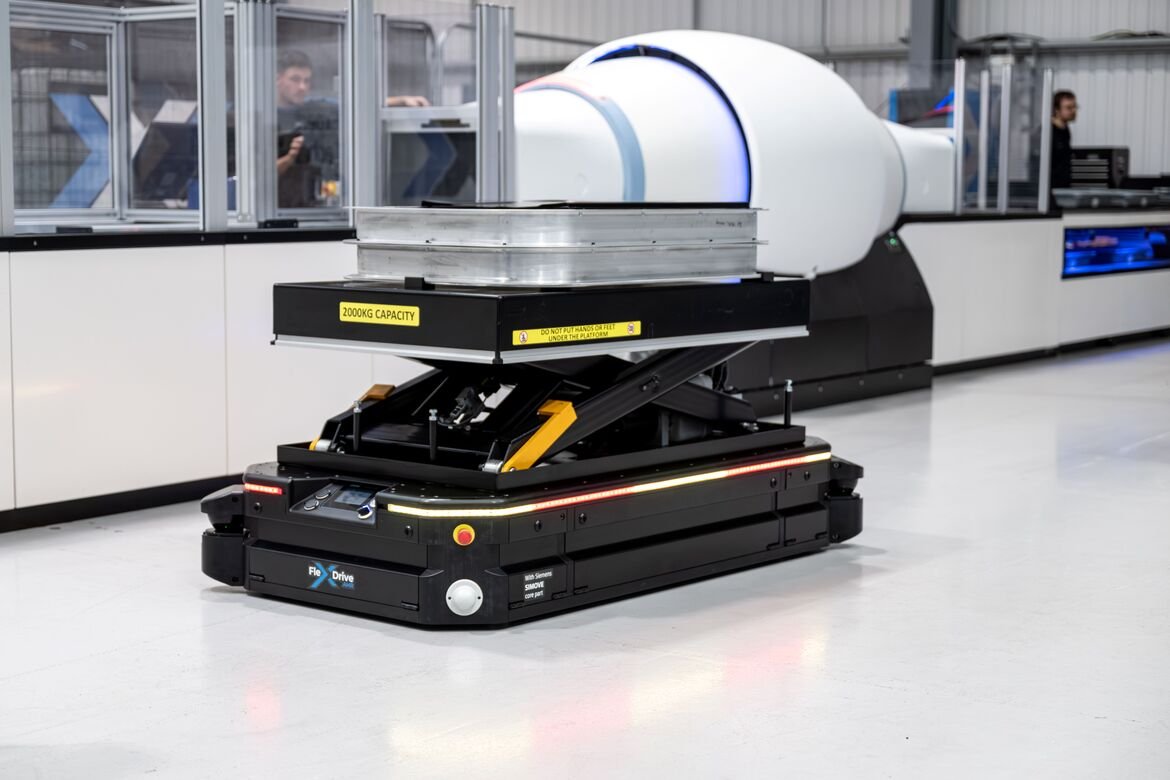

Display makers argue that humanoid robots will require multimodal interfaces, combining speech with visual cues that clarify instructions, system states, and user prompts. An OLED screen embedded in a robot’s torso or face could present task confirmations, safety alerts, or contextual information in environments where verbal communication is insufficient.

Samsung Display and LG Display have reportedly been courting both automakers moving into robotics and smaller robotics developers, seeking early design wins before the humanoid segment scales. In effect, the companies are trying to establish screens as standard components in next-generation robot platforms.

Industry forecasts cited in Korean media estimate the humanoid robotics market could reach 55 trillion won, roughly $40 billion, by 2035. If displays end up in close to 80 percent of assistance, service, and home robots, that would open a significant adjacent revenue stream for display manufacturers.

Early Positioning Ahead of Scale

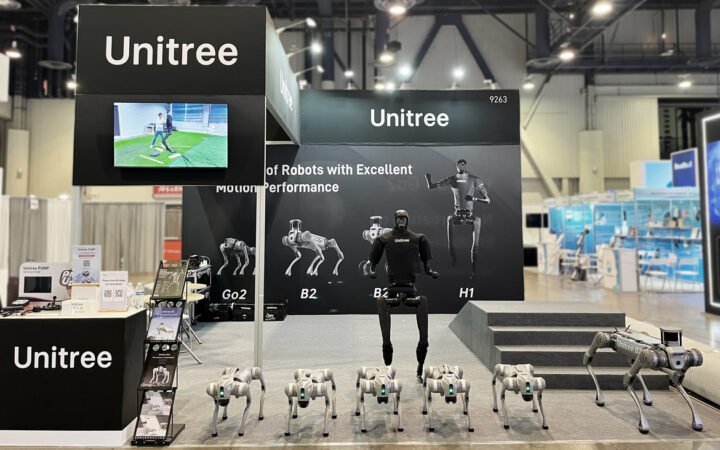

The emphasis on robotics was visible at CES 2026 in Las Vegas, where Samsung Display dedicated substantial floor space to demonstrating OLED applications across multiple robotic form factors. Rather than treating robotics as an experimental sideline, the company framed it as a long-term vertical alongside automotive and mobile displays.

LG Display is pursuing a parallel strategy, aiming to secure supply agreements before humanoid robotics transitions from pilot programs to scaled production. The competitive dynamic mirrors earlier battles in automotive displays, where early supplier relationships often determine long-term dominance.

For display makers facing saturation in smartphones and televisions, humanoid robotics offers a new category with potentially differentiated requirements, including durability, energy efficiency, and unconventional shapes.

The Interface Question in Physical AI

The push by Samsung and LG underscores a deeper debate within robotics: how humans will interact with embodied AI systems.

Even as generative AI advances rapidly in language and reasoning, the physical embodiment of that intelligence still requires intuitive communication channels. In factories, robots can rely on status lights and dashboards. In homes or public settings, interaction becomes more social and immediate.

Screens provide persistent visibility. Unlike voice, they allow users to review information, confirm instructions, and understand robot intent at a glance. That capability could become particularly important in service roles where clarity and safety are paramount.

If humanoid robots become widespread over the next decade, interface design may prove as commercially consequential as mobility or manipulation hardware. Samsung Display’s investment suggests it sees OLED panels not as optional accessories, but as foundational infrastructure in the emerging human-robot relationship.