Monthly Archives: April 2026

Google Advances Embodied AI with Gemini Robotics-ER Model

Google has introduced a new AI model that improves how robots understand, plan, and act in real-world environments, marking progress in embodied reasoning.

Google has introduced a new AI model designed to improve how robots understand and operate in real-world environments, targeting one of the most persistent limitations in robotics: the ability to reason beyond predefined instructions.

The model, Gemini Robotics-ER 1.6, focuses on what researchers describe as embodied reasoning – the capacity for machines to interpret visual inputs, plan sequences of actions, and determine when a task has been successfully completed. The update reflects a broader shift in robotics from systems that execute commands to those that can make context-aware decisions in dynamic settings.

The model is being made available to developers through Google’s AI tooling ecosystem, positioning it as part of a growing effort to standardize software layers for physical AI.

Moving from Perception to Reasoning

Robotics systems have historically relied on separate modules for perception, planning, and control, often requiring extensive engineering to connect them. Gemini Robotics-ER 1.6 attempts to unify these functions, allowing robots to process visual information and translate it directly into action.

The model improves spatial reasoning, enabling robots to identify objects, understand their relationships, and break tasks into smaller steps. It can also track objects across multiple viewpoints, combining inputs from different cameras to build a more complete understanding of an environment.

This multi-view capability is particularly relevant in real-world settings, where occlusion, clutter, and changing conditions can limit the effectiveness of single-camera systems. By integrating multiple perspectives, robots can maintain situational awareness even when parts of a scene are temporarily hidden.

Another key advancement is success detection. The model allows robots to evaluate whether a task has been completed correctly, reducing reliance on external validation or rigid programming. This is a critical requirement for autonomous operation, particularly in environments where tasks may need to be repeated or adjusted in real time.
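The role success detection plays in autonomous operation can be illustrated with a minimal retry loop. This is only a sketch: `attempt` and `is_done` are hypothetical callables standing in for a robot action and a model-based completion check, and none of it reflects Google's actual API.

```python
from typing import Callable


def run_with_success_check(
    attempt: Callable[[], None],
    is_done: Callable[[], bool],
    max_attempts: int = 3,
) -> int:
    """Repeat a task until the success check passes.

    Returns the number of attempts used, or -1 if the task
    never passed the check within max_attempts.
    """
    for n in range(1, max_attempts + 1):
        attempt()        # hypothetical: execute the action once
        if is_done():    # hypothetical: ask the model whether the task is complete
            return n
    return -1


# Simulated task that only succeeds on the second try.
state = {"tries": 0}


def close_drawer() -> None:
    state["tries"] += 1


def drawer_is_closed() -> bool:
    return state["tries"] >= 2


attempts_used = run_with_success_check(close_drawer, drawer_is_closed)
print(f"Task completed after {attempts_used} attempt(s)")
```

The point of the pattern is that the stopping condition comes from observing the scene rather than from a fixed script, which is what lets a task be repeated or adjusted without external validation.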

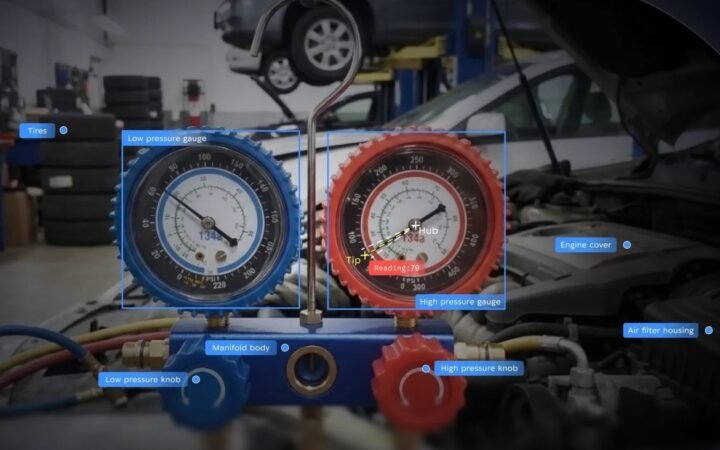

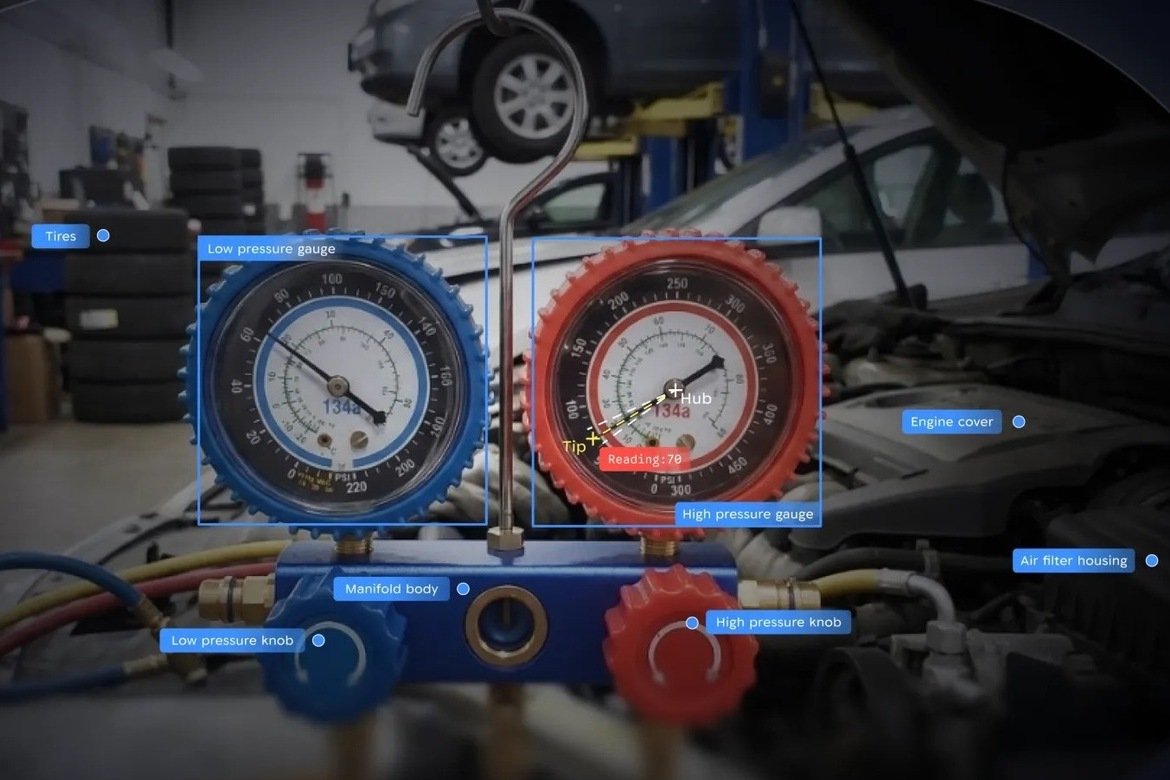

Interpreting the Physical World

One of the more practical capabilities introduced in the model is the ability to read instruments such as gauges, meters, and digital displays. This function is particularly relevant for industrial and inspection applications, where robots must interpret physical indicators rather than purely digital data.

In collaboration with Boston Dynamics, the system has been applied to robots like Spot, which are used for facility monitoring. The model can analyze visual inputs, identify key components such as needles or numerical readouts, and calculate values with a high degree of accuracy.

Reported improvements in instrument reading performance suggest a significant step forward. Accuracy has increased from earlier levels of around 23% to over 90% in some scenarios, indicating that robots are becoming more capable of handling tasks that require precise interpretation of real-world signals.
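The final step of reading an analog gauge, turning a detected needle position into a value, can be sketched as a linear interpolation. This is an illustration under stated assumptions, not the method used by Google or Boston Dynamics: it assumes a dial with a linear scale, and the function name and angle conventions are hypothetical.

```python
def gauge_value(
    needle_deg: float,
    min_deg: float,
    max_deg: float,
    min_val: float,
    max_val: float,
) -> float:
    """Map a needle angle to a reading on a linear dial.

    min_deg / max_deg are the needle angles at the ends of the scale;
    min_val / max_val are the values printed at those ends.
    """
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)
    # Clamp so a slightly misdetected needle cannot read off the scale.
    fraction = min(1.0, max(0.0, fraction))
    return min_val + fraction * (max_val - min_val)


# Hypothetical pressure gauge: 0 to 10 bar over a 270-degree sweep.
reading = gauge_value(needle_deg=135.0, min_deg=0.0, max_deg=270.0,
                      min_val=0.0, max_val=10.0)
print(f"Reading: {reading:.1f} bar")
```

In practice the hard part is the perception step that finds the needle and the scale endpoints in the image; the arithmetic afterward is simple, which is why detection accuracy dominates the overall result.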

The model also incorporates safety-aware reasoning, allowing robots to identify potential hazards and avoid unsafe interactions. This reflects an increasing emphasis on aligning robotic behavior with physical constraints, particularly as systems move into environments shared with humans.

Building a Software Layer for Physical AI

The release of Gemini Robotics-ER 1.6 highlights a broader trend toward treating robotics as a software problem as much as a hardware one. As companies race to develop humanoid and autonomous systems, the ability to generalize across tasks and environments is becoming a key differentiator.

Efforts by companies such as Nvidia have focused on simulation and training infrastructure, while Google’s approach emphasizes reasoning and decision-making at runtime. Together, these developments point toward a layered architecture for physical AI, where perception, reasoning, and control are increasingly integrated.

The remaining challenge is translating these capabilities into reliable real-world performance at scale. While models like Gemini Robotics-ER 1.6 demonstrate significant progress in controlled evaluations, deployment in complex environments will require further advances in robustness, data integration, and system design.

Google’s latest model suggests that robotics is entering a phase where intelligence is defined less by isolated capabilities and more by the ability to connect perception, reasoning, and action. As embodied AI systems become more capable of interpreting and responding to the physical world, the boundary between digital intelligence and physical execution continues to narrow.

The extent to which this translates into widespread adoption will depend on how quickly these systems can move from experimental demonstrations to dependable tools in industry and beyond.

Unitree Brings $4,000 Humanoid Robot to Global Buyers via AliExpress

Unitree is bringing its lowest-cost humanoid robot to global markets via AliExpress, signaling a shift toward early consumer adoption of robotics.

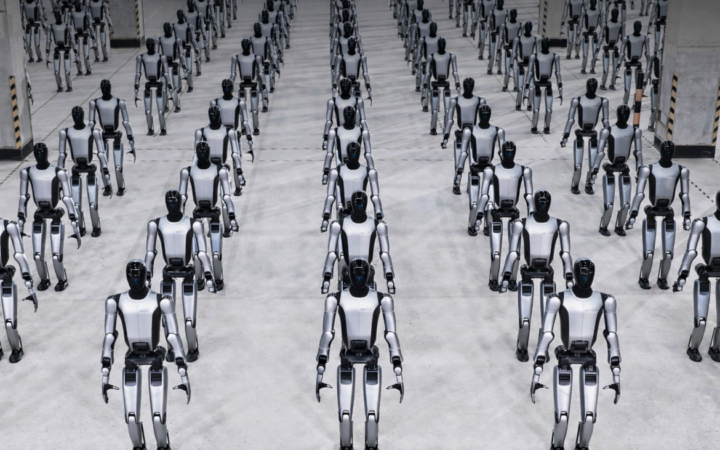

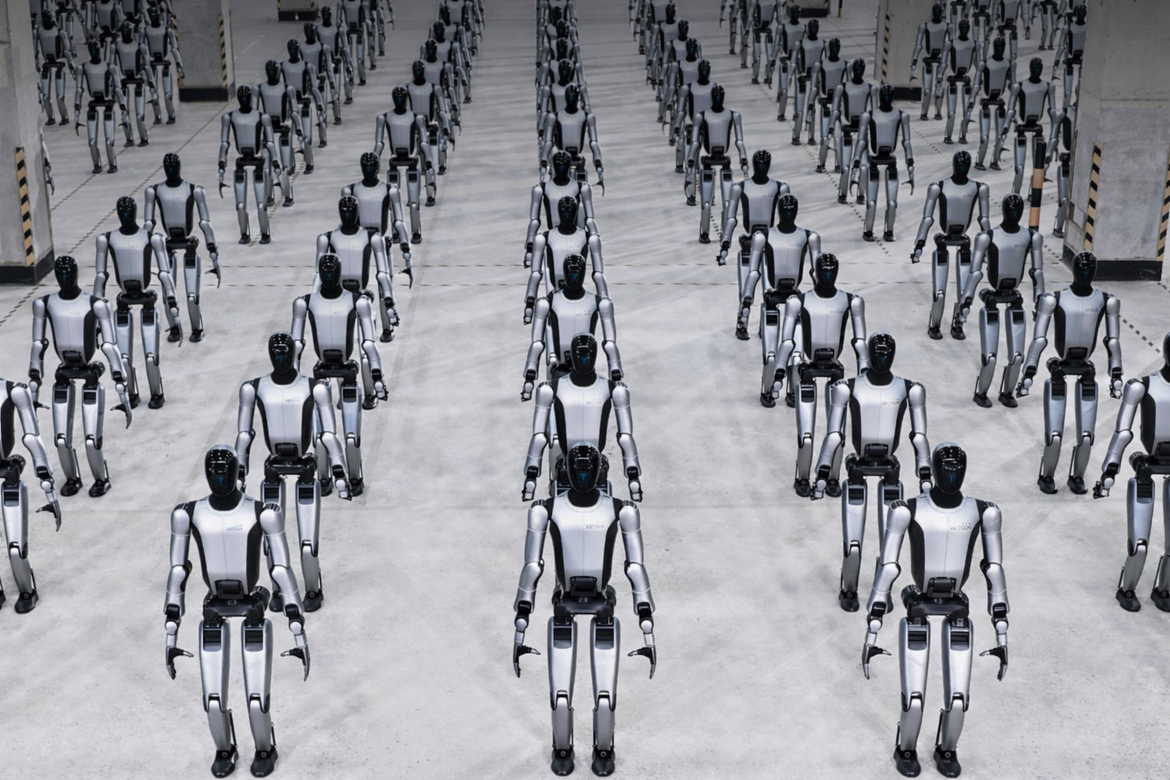

Chinese robotics firm Unitree Robotics is preparing to launch its most affordable humanoid robot globally, a move that could test whether the category is beginning to transition from industrial experimentation to early consumer markets.

The company plans to debut its R1 humanoid robot through AliExpress, targeting customers in North America, Europe, Japan, and Singapore. With a starting price of around $4,000 in China, the R1 is among the lowest-cost humanoid robots introduced to date, positioning it closer to consumer electronics than traditional industrial machinery.

The rollout comes as Unitree accelerates production and expands internationally, following a year in which it shipped more than 5,500 humanoid robots – far exceeding most global competitors.

Lower Prices Meet Global Distribution

The R1 reflects a broader push to reduce the cost of humanoid robotics while expanding access through global distribution platforms. By launching on AliExpress, Unitree is bypassing traditional enterprise sales channels and testing direct-to-market demand.

The robot stands just over 1.2 meters tall and is designed for dynamic movement, including running, recovering from falls, and performing coordinated motions. Marketed as “sport-ready”, it highlights Unitree’s focus on mobility and mechanical performance rather than immediate utility in structured work environments.

The pricing strategy marks a significant departure from earlier humanoid systems, which have typically been priced in the tens of thousands of dollars or higher. Even companies such as Tesla have suggested that future humanoid robots could cost around $20,000, placing Unitree’s offering well below that threshold.

The question is not only whether such pricing is sustainable, but whether it will translate into meaningful adoption beyond research labs and demonstration use cases.

Scaling Production Ahead of Demand

Unitree’s global expansion is closely tied to its manufacturing scale. The company has set a target of shipping between 10,000 and 20,000 robots in 2026, building on its current position as one of the highest-volume producers of humanoid systems.

According to industry estimates, competitors such as Figure AI and Agility Robotics have shipped only a few hundred units each, underscoring the gap between Chinese and U.S. production capacity.

Market research firm TrendForce expects Unitree to account for a substantial share of global humanoid output in the near term, reflecting both aggressive scaling and a focus on cost reduction.

At the same time, the company is preparing for a potential IPO in Shanghai, aiming to raise capital to expand manufacturing and research. The R1’s international debut may therefore serve a dual purpose: generating revenue while demonstrating global demand to investors.

From Demonstration to Early Adoption

The launch also highlights a shift in how humanoid robots are being positioned. Rather than targeting a single industrial application, the R1 appears designed as a general-purpose platform that can showcase capabilities and attract a broader user base.

Unitree has previously gained visibility through high-profile demonstrations, including coordinated performances by its robots on national television. The move into global e-commerce suggests a transition from spectacle to early commercialization, even if practical use cases remain limited.

For now, most humanoid robots are still used in research, education, and controlled environments. The introduction of a lower-cost model does not immediately resolve challenges around autonomy, reliability, or real-world utility.

However, it may begin to reshape expectations. If consumers and small businesses can access humanoid robots at a fraction of previous costs, the market could shift from a handful of experimental deployments to a larger base of exploratory use.

Unitree’s R1 launch represents one of the clearest attempts to test that transition. By combining lower pricing with global distribution, the company is effectively probing whether humanoid robotics can move beyond early adopters and into a broader commercial category.

The outcome will depend less on technical capability alone and more on whether users find meaningful ways to integrate these systems into everyday environments. For an industry still searching for its first large-scale application, that question remains open.

AGIBOT Launches Genie Sim 3.0 to Power Embodied AI Development

AGIBOT introduced Genie Sim 3.0, a unified platform combining simulation, data generation, and benchmarking to accelerate embodied AI development.

AGIBOT has introduced Genie Sim 3.0, a new platform designed to unify simulation, data generation, and benchmarking for embodied artificial intelligence. The release reflects a growing industry push to address one of robotics’ biggest constraints – the lack of scalable, high-quality training data and standardized evaluation.

While advances in AI models have driven rapid progress in robotics, real-world deployment remains limited by expensive data collection, fragmented testing environments, and inconsistent performance metrics. Genie Sim 3.0 aims to consolidate these elements into a single development infrastructure, reducing the gap between research and deployment.

The platform combines environment creation, simulation, training, and evaluation into a continuous pipeline. Instead of building each component separately, developers can now iterate within a single system designed specifically for embodied AI.

From Simulation to Scalable Data

A central feature of Genie Sim 3.0 is its ability to generate interactive 3D environments from text or image inputs, using a spatial world model. This allows developers to create training scenarios in minutes rather than hours, significantly lowering the cost and complexity of robotics development.

The system produces synchronized multimodal outputs – including visual, depth, and LiDAR data – closely aligned with real-world robot perception. This is critical for improving transfer from simulation to physical environments, a longstanding challenge in robotics.

By automating environment creation and scaling data generation, AGIBOT is effectively turning simulation into a primary source of training data, rather than a supplementary tool. This shift mirrors broader trends in AI, where synthetic data is increasingly used to overcome real-world limitations.

Standardizing Evaluation and Closing the Sim-to-Real Gap

Beyond data generation, Genie Sim 3.0 introduces a structured benchmarking framework designed to evaluate core robotic capabilities. These include instruction following, spatial reasoning, manipulation skills, robustness under environmental changes, and sim-to-real transfer performance.

This standardized approach addresses a key issue in robotics – the lack of consistent metrics across models and systems. By defining common evaluation tasks, the platform enables more reliable comparison and faster iteration.

The system also integrates reinforcement learning pipelines, allowing models to be trained and tested within the same environment. High-frequency physics simulation combined with parallel processing enables faster convergence and more efficient experimentation.

Taken together, these capabilities create a closed-loop system where robots can learn, adapt, and be evaluated continuously within simulation before deployment.

Genie Sim 3.0 reflects a broader shift toward infrastructure-driven robotics development. As embodied AI moves from research into real-world applications, platforms that unify data, training, and evaluation are becoming essential.

By reducing engineering overhead and accelerating iteration cycles, AGIBOT is positioning simulation not just as a tool, but as the foundation for scaling the next generation of intelligent machines.

Humanoid and Quadruped Robot Shipments Set to Hit 810,000 Units by 2030

Global shipments of humanoid and quadruped robots are projected to reach 810,000 units by 2030, as enterprise adoption replaces early experimentation.

The global market for humanoid and quadruped robots is entering a decisive growth phase, with shipments projected to reach 810,000 units by 2030, according to new industry forecasts by Smart Analytics Global (SAG). The shift reflects a broader transition from early-stage experimentation to real-world deployment across logistics, manufacturing, and service industries.

The pace of expansion is already accelerating, AIstify reports. Global shipments reached nearly 53,000 units in 2025, representing a 250% year-over-year increase, while total market revenue approached $1 billion. By the end of the decade, the market is expected to scale to $8 billion, supported by sustained double-digit growth.
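The growth rates implied by these figures can be worked out with a short calculation. This is a back-of-the-envelope sketch, not part of the forecast itself: it assumes five compounding years from 2025 to 2030, rounds "nearly 53,000" to 53,000 and "approached $1 billion" to 1.0, and reads a 250% increase as 3.5 times the prior year's volume.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between a start and end value."""
    return (end / start) ** (1 / years) - 1


# Figures from the article, rounded as described above (assumptions).
shipments_2025 = 53_000
shipments_2030 = 810_000
revenue_2025_bn = 1.0
revenue_2030_bn = 8.0
years = 2030 - 2025

shipment_cagr = cagr(shipments_2025, shipments_2030, years)
revenue_cagr = cagr(revenue_2025_bn, revenue_2030_bn, years)

# A 250% year-over-year increase means 2025 volume is 3.5x the 2024 volume.
shipments_2024 = shipments_2025 / (1 + 2.50)

print(f"Implied shipment CAGR, 2025-2030: {shipment_cagr:.1%}")
print(f"Implied revenue CAGR, 2025-2030:  {revenue_cagr:.1%}")
print(f"Implied 2024 shipments:           {shipments_2024:,.0f}")
```

Under these assumptions, shipments would need to grow by roughly 72% a year and revenue by roughly 52% a year, with about 15,000 units implied for 2024. Revenue growing more slowly than shipments would also imply falling average revenue per unit.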

The defining change is not just technological progress, but demand. After years of testing and pilot programs, companies are now integrating robots directly into operational workflows where labor shortages, safety requirements, and efficiency pressures are most acute.

Enterprise Adoption Becomes the Primary Growth Driver

The next phase of growth will be driven primarily by enterprise adoption rather than experimentation. Early deployments focused on validation and proof-of-concept, but that cycle is now reaching its limits.

“The robotics industry delivered strong growth in 2025, but the real test lies ahead,” said Yiwen Wu, Lead Research Advisor at Smart Analytics Global. “Enterprise adoption will be the key. Only vendors that can scale real-world deployments will define the next phase of the industry.”

Quadruped robots are currently leading in real-world use cases, particularly in inspection, security, and industrial monitoring. Their ability to navigate uneven terrain and operate in hazardous environments has made them easier to commercialize at scale.

Humanoid robots, by contrast, remain earlier in deployment but are attracting significantly more investment and policy support. Their long-term potential lies in operating within human-designed environments, from warehouses and retail to healthcare and household applications.

This creates a dual-track market: quadrupeds drive immediate adoption, while humanoids dominate long-term strategic positioning.

China Dominates Hardware While Global Competition Intensifies

The geographic distribution of the market reveals a clear imbalance. Chinese companies accounted for approximately 85% of global shipments in 2025, with China itself absorbing more than 60% of total demand.

Companies such as Unitree Robotics, AGIBOT, DOBOT, and Galbot are scaling production rapidly, leveraging manufacturing efficiency to capture early market share. Unitree alone held a leading position across both segments, with a particularly dominant share in quadruped robots.

At the same time, Western companies are maintaining an advantage in software, AI models, and advanced research. Firms like Boston Dynamics, Tesla, and Amazon are focusing on autonomy, perception systems, and large-scale AI integration.

This divergence is shaping a fragmented but complementary global landscape, where leadership is split across hardware manufacturing, software intelligence, and regulatory frameworks. South Korea is increasing investment in robotics, while Europe continues to specialize in safety, certification, and high-value industrial applications.

Looking ahead, analysts expect consolidation pressure to increase as the market matures. Vendors that expanded production ahead of proven demand may face challenges, while others with strong deployment pipelines could emerge as dominant players.

The result is a market approaching a critical inflection point. Robotics is no longer defined by technical capability alone – it is increasingly shaped by scalability, economics, and the ability to operate reliably in the real world.

Humanoid Robots Are Being Trained by Gig Workers Filming Life at Home

Gig workers across more than 50 countries are recording household tasks to train humanoid robots, revealing a new data economy behind physical AI.

The development of humanoid robots is increasingly dependent not just on hardware breakthroughs or AI models, but on a growing global workforce capturing the physical world on camera. Across more than 50 countries, gig workers are now filming themselves performing everyday household tasks to generate training data for robots that are still years away from widespread deployment.

This approach, led by startups such as Micro1, reflects a broader shift in how physical AI systems are built. Just as large language models relied on vast corpora of text scraped and labeled at scale, humanoid robots require detailed recordings of human interaction with objects in real-world environments. The difference is that this data must be created, not collected – and it is being produced inside people’s homes.

Building the Data Layer for Physical AI

Humanoid robots face a fundamentally different challenge from software-based AI systems: they must operate in unstructured, unpredictable environments. Tasks such as folding laundry, loading dishwashers, or organizing shelves involve subtle variations that are difficult to simulate or script.

To address this, companies are assembling large datasets of human activity, capturing how people manipulate objects in real settings. Workers are paid to record themselves performing routine tasks, often wearing cameras that track hand movements, object interactions, and spatial context.

The resulting footage forms the foundation for training robot perception and control systems. Companies such as Scale AI have already accumulated tens of thousands of hours of such material, while platforms like DoorDash have begun experimenting with allowing gig workers to contribute training data alongside their primary work.

This emerging pipeline suggests that physical AI will depend on a new category of data infrastructure – one that extends beyond digital content into the physical behaviors of human workers.

A Familiar Economic Structure in a New Domain

The economics of this system closely resemble earlier phases of the AI industry. Workers contributing data are typically paid hourly rates that are competitive within their local economies but represent a small fraction of the value generated downstream.

Participants receive no ownership over the data they produce and no share in the long-term value of the models trained on it. As humanoid robotics companies attract billions in investment, the gap between capital allocation and labor compensation is becoming more pronounced.

This structure mirrors the development of computer vision and natural language processing systems, where data labeling and annotation were outsourced globally. The key difference is that physical AI requires more invasive forms of data collection, capturing not just digital inputs but lived environments.

The result is a new layer of the gig economy, one that sits beneath the visible robotics industry and provides the raw material for its progress.

Privacy Risks Move Into the Home

Unlike earlier data pipelines, which largely relied on public or platform-generated content, the data used to train humanoid robots is often recorded in private spaces. Videos include kitchen layouts, household items, and other details that collectively form a detailed map of domestic life.

This raises questions about data ownership, consent, and long-term storage. Workers may have limited visibility into how their recordings are used, whether they are anonymized, or how long they are retained. The implications extend beyond individual privacy to broader concerns about the creation of large-scale visual datasets of private environments.

Researchers in human-centered computing have emphasized the need for clearer disclosure and safeguards, but industry practices remain inconsistent. As the volume of collected data grows, so too does the potential risk associated with breaches, misuse, or secondary applications.

The reliance on gig workers to generate training data underscores a central reality of humanoid robotics: progress depends not only on engineering advances, but on access to large-scale, real-world human behavior.

This data-centric approach may accelerate development, but it also introduces new questions about labor, ownership, and privacy. As physical AI moves closer to commercial deployment, the systems being built will increasingly reflect not just technological innovation, but the global infrastructure of work that supports them.

New Robotic Skin Brings Human-Like Touch Closer to Machines

Researchers have developed a flexible sensor that allows robots to detect gentle touch with high precision, marking a step toward safer human-machine interaction.

Robots have made rapid progress in vision and motion, but touch has remained a persistent limitation. Without reliable tactile feedback, even advanced systems struggle to handle fragile objects or safely interact with humans. A new class of flexible sensors developed by researchers at Penn State suggests that gap may be narrowing.

The team has created a lightweight “robotic skin” capable of detecting extremely small pressure changes while maintaining durability under repeated use. The development reflects a broader push in robotics to move beyond perception and mobility toward physical intelligence – systems that can interpret and respond to the physical world with greater nuance.

Turning Pressure into Real-Time Control

At the core of the system is a small, flexible sensor built around graphene aerogel, a porous material that converts mechanical pressure into electrical signals. The structure allows the sensor to respond quickly to light touch while remaining stable under heavier loads, addressing a common tradeoff between sensitivity and durability.

Each sensor can register contact in just over 100 milliseconds and recover shortly after, enabling near real-time feedback. When arranged in arrays, these sensors generate pressure maps that function similarly to human skin, allowing robots to interpret how force is distributed across their surface.

This capability shifts tactile sensing from passive measurement to active control. In demonstrations, robotic hands equipped with the sensors adjusted grip strength dynamically, preventing damage to delicate objects such as soft food items. The system effectively translates touch into immediate motor responses, closing a loop that has historically been difficult to achieve in robotics.
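The touch-to-motor loop described above can be sketched as a simple proportional controller that reads a pressure map and nudges grip strength toward a target. This is an illustrative sketch, not the Penn State team's controller: the function name, the normalized 0-to-1 units, the gain, and the sample pressure map are all assumptions.

```python
from typing import List


def adjust_grip(
    pressure_map: List[List[float]],
    grip: float,
    target_peak: float,
    gain: float = 0.5,
) -> float:
    """Return an updated grip command from one pressure-map reading.

    pressure_map: 2D grid of normalized sensor readings (assumed 0..1).
    grip:         current normalized grip command (0..1).
    target_peak:  desired maximum pressure on any single sensor.
    """
    peak = max(max(row) for row in pressure_map)
    error = target_peak - peak          # positive: too loose, negative: too tight
    new_grip = grip + gain * error      # proportional correction
    return min(1.0, max(0.0, new_grip))  # keep the command in range


# Hypothetical reading: one sensor is pressed well above the target.
reading = [
    [0.2, 0.9],
    [0.1, 0.3],
]
new_command = adjust_grip(reading, grip=0.6, target_peak=0.5)
print(f"Grip command: 0.60 -> {new_command:.2f}")
```

Using the peak reading rather than the average is a deliberate choice for delicate objects, since damage tends to start at the single point of highest pressure.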

From Grasping to Perception

Beyond simple force control, the sensor system introduces a new layer of perception. By analyzing pressure patterns, robots can begin to distinguish between different materials and objects based on how they respond to touch.

In experimental tests, researchers trained a lightweight model to classify food items using tactile data alone. After repeated training cycles, the system achieved accuracy above 99%, suggesting that touch-based recognition could complement or, in some cases, substitute for visual input.
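To make the idea of touch-only classification concrete, here is a minimal nearest-centroid classifier over pressure readings. It is a swapped-in technique chosen for brevity, not the model the researchers trained, and the food labels and pressure values are invented purely for illustration.

```python
from math import dist
from typing import Dict, List

Vector = List[float]


def train_centroids(samples: Dict[str, List[Vector]]) -> Dict[str, Vector]:
    """Average the training vectors for each label into one centroid."""
    centroids = {}
    for label, vectors in samples.items():
        n = len(vectors)
        centroids[label] = [sum(column) / n for column in zip(*vectors)]
    return centroids


def classify(centroids: Dict[str, Vector], reading: Vector) -> str:
    """Return the label whose centroid is closest to the reading."""
    return min(centroids, key=lambda label: dist(centroids[label], reading))


# Invented data: each vector is a flattened pressure map from one grasp.
training = {
    "tofu":   [[0.2, 0.3, 0.2], [0.3, 0.3, 0.2]],
    "carrot": [[0.8, 0.9, 0.7], [0.9, 0.8, 0.8]],
}
model = train_centroids(training)
print(classify(model, [0.25, 0.35, 0.20]))
print(classify(model, [0.85, 0.80, 0.75]))
```

Soft and firm items separate easily in this toy example; a real system would need many more samples and a model that captures how pressure evolves over the course of a grasp.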

This has implications for environments where vision is unreliable, such as cluttered industrial settings or domestic spaces with variable lighting. It also aligns with a growing interest in multimodal AI systems that combine vision, language, and physical interaction.

The same sensing approach has also been applied to wearable devices, where it can track pulse signals and joint movement with consistent accuracy. This points to potential crossover applications in healthcare, prosthetics, and rehabilitation.

Expanding the Role of Tactile Intelligence

The development highlights a broader shift in robotics toward integrating sensing, control, and learning into unified systems. While vision-based AI has dominated recent advances, tactile intelligence is emerging as a critical component for real-world deployment.

Companies such as Tesla and Nvidia have emphasized the importance of physical interaction in next-generation AI systems, particularly in humanoid robotics and automation. However, progress in touch sensing has lagged behind advances in perception and planning.

The Penn State research suggests that scalable, low-cost tactile systems may begin to close that gap. The sensors can also detect pressure changes in non-robotic contexts, such as monitoring swelling in battery systems – an early indicator of potential failure in electric vehicles.

Despite the progress, the technology remains in an early stage. Challenges include miniaturization, long-term reliability, and integration with existing robotic platforms. Researchers are also exploring ways to expand the sensing capabilities to include temperature and stretch, bringing the system closer to the complexity of human skin.

The ability to sense and respond to gentle touch is likely to be a defining feature of next-generation robots, particularly as they move into homes, healthcare settings, and collaborative workplaces. While the current system is still experimental, it illustrates how advances in materials science and AI are converging to address one of robotics’ most persistent limitations.

If scaled successfully, tactile sensing could shift robots from rigid, pre-programmed machines to adaptive systems capable of interacting with the physical world in a more human-like way.

BMW Rebuilds Munich Plant Around AI Brain and 2,000 Robots

BMW has overhauled its Munich plant with an AI-driven production system and thousands of robots, signaling a shift toward software-defined manufacturing for electric vehicles.

BMW has completed a €650 million transformation of its Munich factory, embedding artificial intelligence and robotics at the core of production as it prepares to manufacture its next generation of electric vehicles. The overhaul signals a broader shift in automotive manufacturing, where software systems are beginning to orchestrate not only design and engineering, but the physical assembly process itself.

At the center of the upgrade is what BMW describes as an “AI brain” – a centralized system that coordinates production lines, logistics, and quality control across the plant. The system is being deployed as part of the company’s broader iFactory strategy, which aims to standardize digitalized manufacturing across its global operations.

The Munich site, which will begin producing the Neue Klasse i3 sedan in August 2026, is expected to scale to around 1,000 vehicles per day, placing it among the highest-output EV facilities in Europe.

A Software Layer for Physical Production

BMW’s approach reflects a growing convergence between industrial automation and AI-driven orchestration. Rather than treating robotics as isolated systems, the company has integrated approximately 2,000 robotic arms and a fleet of autonomous logistics machines into a unified control architecture.

The AI system manages workflows in real time, from coordinating robotic assembly tasks to directing material movement across the factory floor. Around 200 mobile robots handle internal logistics, transporting components from incoming shipments to production lines. These machines are expected to perform up to 17,000 transport operations per day by 2027, effectively taking over what BMW describes as the “last mile” of factory logistics.
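A quick calculation puts the logistics figures in proportion. This is a rough sketch that combines numbers stated for different points in time, which is an assumption: the 17,000 daily operations are a 2027 target, while 1,000 vehicles per day is the plant's expected output at scale.

```python
# Figures from the article.
mobile_robots = 200
transport_ops_per_day = 17_000   # target for 2027
vehicles_per_day = 1_000         # expected output at scale

# Assumption: both figures apply to the same steady-state operating day.
ops_per_robot = transport_ops_per_day / mobile_robots
ops_per_vehicle = transport_ops_per_day / vehicles_per_day

print(f"Transport operations per robot per day: {ops_per_robot:.0f}")
print(f"Transport operations per vehicle built: {ops_per_vehicle:.0f}")
```

Under that assumption, each mobile robot would handle 85 transport operations a day, and each vehicle would account for 17 of them.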

A key feature of the system is its use of digital twins, allowing the factory to simulate and test production scenarios before they are executed. This enables rapid adjustments to workflows, reducing downtime and allowing the plant to respond more quickly to changes in demand or product configuration.

While similar concepts have been tested elsewhere, including at facilities developed by Hyundai, BMW’s implementation stands out for its scale and integration into a high-volume production environment.

Flexibility Becomes a Competitive Requirement

The redesigned Munich plant is built to accommodate a wide range of vehicle variants on a single production line, reflecting the increasing variability of the EV market. According to BMW, production sequences can be reconfigured in as little as six days, compared to weeks or months in conventional factories.

This level of flexibility is intended to allow production to “follow the market”, adapting to shifts in demand, regulatory requirements, or supply chain constraints. It also reduces the need for dedicated production lines for individual models, a structure that has historically limited responsiveness in automotive manufacturing.

The shift aligns with a broader industry move toward modular platforms and software-defined vehicles, where differentiation occurs more through software and configuration than through fundamentally different hardware architectures.

Human Workers Remain in the Loop

Despite the scale of automation, BMW maintains that human workers will continue to play a central role in the factory. Tasks such as installing interiors, wiring, and final assembly will still be carried out by people, supported by robotic systems designed to reduce physical strain and improve precision.

AI is also being applied to quality control. Robotic inspection systems capture and analyze large volumes of visual data to identify defects early in the production process. In some cases, robots can autonomously correct issues, reducing the need for rework at later stages and improving overall throughput.

The company has emphasized that the introduction of AI and robotics is intended to augment, rather than replace, human labor, positioning workers as operators and supervisors within increasingly automated environments.

BMW’s Munich transformation highlights a broader shift in industrial strategy, where competitiveness is increasingly defined by the ability to integrate software, robotics, and data into a cohesive production system. As automakers transition to electric vehicles and face greater market volatility, factories are becoming less like static assembly lines and more like adaptive, software-controlled systems.

The success of this approach will depend not only on technological execution but on whether such highly automated systems can deliver consistent gains in efficiency and quality at scale. For now, BMW’s investment offers one of the clearest examples of how physical AI is beginning to reshape large-scale manufacturing.

DNA Robots Advance Toward Targeted Drug Delivery and Virus Detection

Researchers are developing DNA-based nanorobots capable of delivering drugs and targeting viruses, though the technology remains in early experimental stages.

The idea of robots operating inside the human body has long been associated with science fiction. But recent advances in DNA-based nanotechnology are beginning to translate that vision into early-stage experimental systems, where programmable molecular machines can move, sense, and interact with biological environments.

Researchers are now designing DNA “robots” capable of delivering drugs directly to diseased cells and identifying viral threats within the bloodstream. While these systems remain far from clinical deployment, they represent a shift in how robotics is defined – extending from mechanical systems into the molecular domain.

Reimagining Robotics at the Molecular Scale

Unlike conventional robots built from metal, electronics, and actuators, DNA robots are constructed from strands of nucleic acids that can be folded, connected, and programmed into functional structures. Using techniques inspired by origami, scientists can create rigid joints, flexible linkages, and dynamic components that mimic mechanical systems at the nanoscale.

This approach adapts established principles from traditional robotics – including rigid-body motion and compliant mechanisms – into a biochemical context. The result is a new class of machines that operate not through motors or gears, but through chemical interactions and structural transformations.

Controlling these systems presents a fundamental challenge. At the molecular level, motion is dominated by random thermal fluctuations, known as Brownian motion, which can disrupt precise behavior. To address this, researchers rely on biochemical programming methods such as DNA strand displacement, where specific sequences act as triggers to initiate movement or change configuration.
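The sequence-as-trigger idea behind strand displacement can be illustrated with a toy model. The sketch below is a deliberate simplification and not a method described by the researchers: it treats hybridization as exact Watson-Crick matching and ignores thermodynamics, kinetics, and partial matches. The function names, the example sequences, and the split into a "domain" and a "toehold" are illustrative assumptions.

```python
# Toy model of toehold-mediated strand displacement (illustrative only).
# Assumption: strands are written 5'->3' and pair only when exactly complementary.

COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def reverse_complement(seq: str) -> str:
    """Return the strand that would pair perfectly with seq."""
    return "".join(COMPLEMENT[base] for base in reversed(seq))


def displacement_outcome(domain: str, toehold: str, invader: str) -> str:
    """Classify what an invader strand does to a gate.

    The gate's substrate strand is domain + toehold (5'->3'). An incumbent
    strand covers the domain, leaving the toehold exposed as a landing site.
    """
    toehold_match = reverse_complement(toehold)
    domain_match = reverse_complement(domain)

    # The invader must first dock on the exposed toehold.
    if not invader.startswith(toehold_match):
        return "no binding"
    # It must then match the domain to push the incumbent strand off.
    if invader[len(toehold_match):] != domain_match:
        return "binds, no displacement"
    return "displaces incumbent"


# Hypothetical example sequences, chosen only for illustration.
domain, toehold = "ACGTTGCA", "GGATC"
trigger = reverse_complement(domain + toehold)
print(displacement_outcome(domain, toehold, trigger))
print(displacement_outcome(domain, toehold, "T" * len(trigger)))
```

In this simplified picture, only a strand carrying the right sequence acts as a trigger. That is the property that lets designers address individual components despite random thermal motion.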

External signals, including light, magnetic fields, and electric fields, can also be used to guide these nanorobots, providing an additional layer of control in otherwise unpredictable environments.

Medical Applications Remain Experimental

The most immediate interest in DNA robotics lies in medicine, where the ability to operate at cellular or even molecular resolution could enable highly targeted interventions. In experimental settings, DNA robots have been designed to locate specific cell types, release therapeutic payloads, and potentially capture or neutralize viruses.

Such systems could function as “nano-surgeons”, delivering drugs with far greater precision than conventional treatments and reducing side effects associated with systemic therapies. Researchers are also exploring their potential to detect and bind to viral particles, including pathogens similar to SARS-CoV-2, the virus that causes COVID-19, as a step toward autonomous diagnostic or therapeutic platforms.

Beyond medicine, DNA robots may also serve as tools for nanoscale manufacturing. By positioning molecules and nanoparticles with sub-nanometer precision, they could enable new forms of computing and materials engineering that are difficult to achieve with existing fabrication techniques.

However, most current systems remain proof-of-concept demonstrations. They typically operate in controlled laboratory conditions and lack the robustness required for real-world biological environments.

From Proof of Concept to Scalable Systems

The transition from experimental prototypes to practical applications presents several challenges. In addition to environmental unpredictability, researchers face limitations in modeling and design. There is currently no comprehensive database of DNA mechanical properties, and simulation tools for predicting nanorobot behavior remain underdeveloped.

Scaling these systems will likely require advances across multiple domains, including bio-manufacturing, materials science, and artificial intelligence. Proposed approaches include the development of standardized DNA component libraries and the use of AI-driven design tools to optimize structures and predict performance.

The broader implication is that robotics may increasingly extend beyond traditional hardware into programmable biological systems. DNA robots, if successfully scaled, could redefine automation at the smallest possible level – enabling machines that operate not in factories or warehouses, but within cells and molecules themselves.

For now, the technology remains in its formative stage. But its trajectory suggests that the next phase of robotics innovation may be less about building larger, more capable machines, and more about engineering systems that can function where conventional robots cannot reach.

LG CNS Expands Physical AI Strategy Through Silicon Valley Partnerships

LG CNS has partnered with Silicon Valley robotics startups to strengthen its physical AI capabilities, combining robot foundation models with new hardware platforms.

LG CNS is deepening its push into physical AI through a set of partnerships with Silicon Valley robotics startups, signaling a shift from enterprise software toward integrated AI and robotics systems. The move reflects a broader trend among large technology firms seeking to secure both the software intelligence and hardware platforms required for real-world automation.

The South Korean company announced that it has partnered with U.S.-based startups Config and Dexmate following its Open Innovation Summit held in Silicon Valley on March 19. The initiative is part of an ongoing effort to identify early-stage technologies that can be incorporated into LG CNS’s enterprise-focused AI and automation offerings.

Combining Robot Foundation Models with Hardware

At the center of the partnerships is Config, a startup focused on robot foundation models, a category of AI systems designed to generalize across tasks in physical environments. The company’s technology enables robots to learn from human motion data, translating real-world demonstrations into structured training inputs for robotic systems.

LG CNS plans to integrate Config’s models into its robotics stack to improve precision in dual-arm manipulation, a capability widely seen as critical for industrial automation and service robotics. Unlike traditional robotic programming, which relies on predefined instructions, these models aim to allow robots to adapt to variable environments with less manual configuration.

The partnership with Dexmate, meanwhile, extends LG CNS’s reach into hardware. Dexmate develops humanoid robots equipped with dual arms and wheel-based mobility, offering an alternative to bipedal locomotion that can simplify stability and deployment in structured environments.

LG CNS had previously invested in Dexmate, and the expanded partnership suggests a longer-term strategy of aligning software capabilities with specific hardware platforms rather than remaining hardware-agnostic.

Expanding the Definition of Physical AI

The company’s approach reflects an evolving definition of physical AI, where progress depends on the interaction between machine learning models and mechanical systems rather than advances in either domain alone. By working with both a model developer and a hardware manufacturer, LG CNS is positioning itself within a growing ecosystem that spans perception, control, and actuation.

This mirrors broader industry developments led by companies such as Nvidia, which has promoted integrated frameworks combining simulation, AI training, and robotics deployment. The emphasis on full-stack systems is becoming increasingly important as robotics moves from controlled demonstrations to operational environments.

LG CNS’s expansion into wheel-based humanoid systems also suggests a pragmatic approach to deployment. While bipedal robots remain a long-term goal for many developers, hybrid designs that prioritize stability and efficiency are gaining traction in logistics, manufacturing, and service applications.

Open Innovation as a Scaling Strategy

The partnerships were announced as part of LG CNS’s broader open innovation program, which seeks to identify and collaborate with startups at an early stage. This model allows large enterprises to access emerging technologies without building all capabilities in-house, while giving startups a pathway to commercial deployment.

For LG CNS, the strategy appears aimed at accelerating its transition from enterprise IT services into a provider of AI-driven automation infrastructure. By combining internal capabilities with external innovation, the company is attempting to build a flexible ecosystem that can adapt as both AI models and robotics hardware continue to evolve.

The challenge, as with much of the physical AI sector, lies in translating technical capability into scalable, real-world use cases. While partnerships can accelerate development, widespread deployment will depend on whether these integrated systems can deliver consistent performance in complex environments.

Unitree Files for IPO as Humanoid Robot Market Enters New Phase

Unitree Robotics has filed for a Shanghai IPO after becoming the world’s largest humanoid robot seller, signaling a shift from experimentation to early commercialization.

The planned public listing of Unitree Robotics marks a turning point for the humanoid robotics sector, which has long been defined by prototypes, research funding, and speculative timelines. By moving toward an initial public offering, the Hangzhou-based company is positioning itself as one of the first large-scale tests of whether humanoid robots can sustain a viable commercial market.

Unitree filed to list on the Shanghai Stock Exchange on March 20, seeking to raise 4.2 billion yuan, or about $610 million, to expand manufacturing and research. The company’s trajectory, from viral demonstrations to profitability within a year, places it at the center of a broader shift in how robotics companies are financed and evaluated.

Profitability Arrives Ahead of Mass Adoption

Unlike many peers, Unitree enters the public markets with profitability already established. The company reported an adjusted net profit of 600 million yuan in 2025, a sharp increase from its first profitable year in 2024. Revenue rose to 1.71 billion yuan from 392 million yuan the previous year, reflecting both volume growth and expanding product adoption.
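For readers who want the growth rate and margin implied by those figures, the short calculation below works them out. It is a back-of-the-envelope sketch that uses only the numbers quoted above. The resulting margin mixes an adjusted profit figure with reported revenue, so it should be read as indicative rather than as a figure from the prospectus.

```python
# Figures quoted in the article, in millions of yuan.
revenue_2024 = 392
revenue_2025 = 1_710
adjusted_net_profit_2025 = 600

# Year-over-year revenue growth, as a multiple and as a percentage.
growth_multiple = revenue_2025 / revenue_2024
growth_percent = (growth_multiple - 1) * 100

# Adjusted net profit as a share of revenue.
adjusted_margin_percent = adjusted_net_profit_2025 / revenue_2025 * 100

print(f"Revenue grew {growth_multiple:.2f}x ({growth_percent:.0f}% year over year)")
print(f"Adjusted net margin: {adjusted_margin_percent:.1f}%")
```

On these inputs, revenue grew roughly 4.4-fold, and adjusted net profit was equivalent to about 35% of revenue.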

This distinguishes Unitree from earlier entrants such as Ubtech Robotics, which has remained unprofitable despite going public. The contrast highlights a widening gap between companies still operating in development mode and those beginning to scale production.

Even so, the market remains early. More than 100 humanoid robotics companies currently operate in China, according to Counterpoint Research, with consolidation expected as capital markets begin to impose stricter performance expectations. Unitree’s IPO is likely to serve as an early signal of which business models can sustain investor confidence.

From Quadrupeds to Humanoids

Unitree’s growth has been driven in part by a transition from quadruped robots to humanoid systems. The company shipped more than 30,000 quadrupeds between 2022 and 2025, establishing a hardware and supply chain base before scaling humanoid production.

In 2025, it sold 5,500 humanoid robots, which accounted for over half of its core revenue, up from less than 2% two years earlier. The majority of these units were sold to research institutions and educational users, indicating that widespread enterprise deployment remains limited.

The shift reflects a broader industry pattern, in which quadruped platforms have served as an intermediate step toward more complex humanoid systems. These earlier products provide revenue, operational data, and manufacturing experience that can be transferred into humanoid development.

Falling Prices and Vertical Integration

One of the more notable signals in Unitree’s prospectus is the rapid decline in pricing. The average price of its humanoid robots fell from roughly 593,400 yuan in 2023 to 167,600 yuan in 2025, bringing systems closer to a range that could support broader adoption.
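The size of that decline, and how it squares with the reported sales volume, can be checked with simple arithmetic. The sketch below uses only figures quoted in this article. The implied-revenue step assumes every 2025 unit sold at the average price. It also compares the result with total revenue, which may not match the prospectus definition of "core revenue".

```python
# Figures quoted in the article.
avg_price_2023_yuan = 593_400
avg_price_2025_yuan = 167_600
humanoid_units_2025 = 5_500
total_revenue_2025_yuan = 1_710_000_000

# Cumulative price decline over the two-year span.
price_ratio = avg_price_2025_yuan / avg_price_2023_yuan
total_decline = 1 - price_ratio

# Equivalent constant annual decline (geometric average over two years).
annual_decline = 1 - price_ratio ** 0.5

# Rough humanoid revenue if every unit sold at the 2025 average price.
implied_humanoid_revenue = humanoid_units_2025 * avg_price_2025_yuan
implied_share = implied_humanoid_revenue / total_revenue_2025_yuan

print(f"Two-year price decline: {total_decline:.1%}")
print(f"Equivalent annual decline: {annual_decline:.1%}")
print(f"Implied humanoid revenue: {implied_humanoid_revenue / 1e6:.1f} million yuan")
print(f"Share of total 2025 revenue: {implied_share:.1%}")
```

The average price fell by about 72% over two years, or roughly 47% a year. The implied humanoid revenue of about 922 million yuan comes to roughly 54% of total 2025 revenue, broadly consistent with the statement that humanoids accounted for over half of core revenue.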

At the same time, gross margins improved to nearly 60%, suggesting that cost reductions are being driven by manufacturing efficiencies rather than discounting alone. Unitree attributes this to its strategy of developing and producing key components in-house, reducing reliance on external suppliers.

This combination of falling prices and improving margins remains rare in the humanoid robotics sector, where most companies are still managing high costs and limited production volumes.

However, external dependencies remain. Like many robotics developers, Unitree relies on computing platforms and chips from Nvidia for core processing capabilities, leaving part of its supply chain exposed to geopolitical and trade uncertainties.

A Market Signal for Physical AI

Unitree’s IPO arrives amid intensifying global competition in humanoid robotics. In the United States, Elon Musk has said that Tesla plans to begin retail sales of its Optimus robots by 2027, framing humanoids as a future mass-market product.

At the same time, the concept of “physical AI” – systems that combine machine learning with real-world interaction – is gaining traction across the industry. Unitree’s robots were featured alongside other platforms at a recent conference led by Jensen Huang, underscoring growing alignment between hardware manufacturers and AI infrastructure providers.

Despite this momentum, near-term demand remains concentrated in research, education, and controlled industrial environments. Unitree’s own projections, which include plans to produce tens of thousands of humanoids annually within five years, suggest confidence in scaling, but not necessarily immediate mass adoption.

The company’s public listing will therefore function as more than a financing event. It will offer one of the first measurable indicators of whether investors view humanoid robotics as an emerging industrial category or as a longer-term technological bet.

Ubtech Sales Surge Signals Shift to Commercial Humanoid Robotics in China

Ubtech reported a 23-fold increase in humanoid robot sales, driven by industrial deployments. The results point to accelerating commercialization of embodied AI in China.

Chinese robotics firm Ubtech Robotics reported a sharp increase in humanoid robot sales, offering one of the clearest signals yet that the sector is moving from pilot projects to early commercial deployment. The company said it sold more than 1,000 full-size humanoid robots last year, representing a 23-fold increase compared with the previous year.

The surge in sales was accompanied by a significant shift in revenue composition. Humanoid robots accounted for more than 40 percent of total revenue, up from a negligible share the year before. The results highlight how embodied AI systems are beginning to contribute meaningfully to business performance rather than remaining confined to research or demonstration.

The company’s stock rose following the announcement, reflecting investor interest in what is increasingly viewed as a high-growth segment within robotics.

Industrial Deployment Drives Growth

Ubtech’s growth has been driven primarily by the deployment of its Walker S series humanoid robots in industrial environments. These systems are being used across sectors including automotive manufacturing, electronics, semiconductors, and logistics.

The robots are designed to perform tasks such as material handling, sorting, assembly, and inspection, positioning them as flexible alternatives to traditional automation systems. Unlike fixed industrial robots, humanoids can operate in environments built for human workers, reducing the need for infrastructure changes.

This shift from training to deployment marks a turning point. For several years, humanoid robotics companies have focused on developing capabilities and collecting data. Ubtech’s results suggest that at least part of the market is now transitioning toward real-world applications with measurable output.

At the same time, the company continues to expand into non-industrial scenarios, including education, research, and public-facing roles such as event assistance and exhibition guides. These use cases provide additional pathways for adoption, though industrial deployments appear to be driving the majority of revenue growth.

China’s Accelerating Embodied AI Push

Ubtech’s performance reflects a broader trend within China’s robotics ecosystem, where government support, manufacturing capacity, and supply chain integration are enabling rapid scaling.

While revenue from some of the company’s legacy robotics segments declined, the growth in humanoid systems more than offset those losses, contributing to an overall increase in total revenue and an improvement in gross margins. The company also reported a narrowing net loss, suggesting early signs of improving operational efficiency as production scales.

The pace of growth underscores a key dynamic in the global robotics race. Chinese companies are moving quickly to commercialize humanoid systems, leveraging cost advantages and manufacturing expertise to accelerate deployment.

The sharp increase in Ubtech’s humanoid robot sales highlights a broader inflection point for the industry. As systems transition from development to deployment, the focus is shifting toward reliability, scalability, and economic viability.

For Ubtech, the challenge will be sustaining growth while improving profitability in a market that is likely to become increasingly competitive. For the industry, the company’s results provide early evidence that humanoid robots may be moving beyond experimentation and into the first stages of commercial adoption.

Whether that momentum can be maintained will depend on the ability of these systems to deliver consistent value in real-world environments. But for now, the trajectory is clear: embodied AI is beginning to generate tangible business outcomes, not just technical demonstrations.

Humanoid Robot Prices Fall Sharply as Market Splits into Three Tiers

Humanoid robot prices have dropped more than 70% in two years, signaling a shift toward commercialization. The market is now dividing into premium, mid-range, and volume-driven segments.

The humanoid robotics industry may be approaching its first meaningful commercial inflection point, driven not by a single breakthrough but by a rapid decline in prices. New data from manufacturers indicates that average system costs have fallen dramatically over the past two years, reshaping expectations around adoption and market structure.

Reported pricing for some platforms has dropped from roughly $85,000 in 2023 to about $25,000 in 2025, reflecting a broader trend across the sector. While high-end systems still command significantly higher prices, the overall range has expanded, with entry-level humanoids now approaching levels previously associated with industrial equipment rather than research prototypes.

The shift suggests that humanoid robots are beginning to follow the economic trajectory seen in other hardware-driven industries, where scaling production and standardizing components lead to rapid cost compression.

From Experimental Platforms to Manufactured Products

For much of the past decade, humanoid robots existed primarily as research systems, built in small volumes and priced accordingly. Their role was to demonstrate capability rather than deliver consistent operational value.

That dynamic is changing as companies move into early production runs. Standardized components, improved supply chains, and iterative design cycles are reducing costs while increasing reliability. Even companies still developing their platforms are publicly targeting price points in the $20,000 to $30,000 range once manufacturing reaches scale.

This transition marks a critical shift. As humanoids become manufactured products rather than bespoke systems, they enter a different set of economic constraints. Pricing, margins, and return on investment begin to matter as much as technical capability.

A Three-Tier Market Begins to Form

As prices fall, the market is starting to segment into distinct tiers, each defined by a different strategy.

At the high end, premium systems focus on maximum performance, targeting industrial and enterprise environments where reliability and capability justify higher costs. These platforms often exceed $150,000 and emphasize advanced manipulation, safety, and integration.

In the middle, a growing category of “good enough” humanoids is emerging. These systems aim to balance performance with affordability, making them viable for applications such as logistics, warehousing, and light manufacturing. With projected pricing around $20,000 to $30,000, this segment is widely expected to drive the majority of unit volume.

At the lower end, a volume-driven approach is taking shape. Some manufacturers are aggressively reducing costs to accelerate adoption, offering simplified systems at significantly lower price points. This strategy prioritizes scale and rapid iteration, echoing patterns seen in industries such as electric vehicles and solar energy.

Price Compression Creates Strategic Pressure

The rapid decline in prices introduces both opportunity and risk. Lower costs could accelerate adoption in sectors facing labor shortages, where even modest improvements in productivity can justify investment.

At the same time, price compression is likely to strain margins and intensify competition. For companies in the United States and Europe, competing on cost alone may prove difficult, potentially pushing them toward premium positioning strategies that emphasize performance, safety, and integration.

This dynamic mirrors developments in other manufacturing sectors, where different regions specialize in either high-end engineering or cost-efficient production. Whether humanoid robotics follows a similar path remains uncertain, given that large-scale demand has yet to fully materialize.

The emergence of tiered pricing signals that humanoid robotics is entering a more mature phase. The industry is moving beyond demonstrations toward real economic constraints, where success depends not only on what robots can do, but on whether they can do it at a cost that makes sense.

Falling prices are a necessary step toward widespread deployment, but they do not guarantee it. The next phase will test whether humanoid robots can deliver consistent performance in real-world environments and generate measurable returns.

If they can, the current decline in prices could unlock large-scale adoption across industries. If not, it may instead mark a transition from early optimism to a more selective and competitive market.

Europe Bets on Humanoid Robots to Reclaim Ground in Global Tech Race

European companies are accelerating investment in humanoid robotics as the region seeks to regain technological momentum. Industrial expertise and automotive supply chains are emerging as key advantages.

Europe is increasingly positioning humanoid robotics as a strategic pathway to remain competitive in a global technology landscape dominated by the United States and China. While the region has lagged in areas such as large-scale artificial intelligence platforms and electric vehicles, it retains deep industrial capabilities that are now being redirected toward embodied AI.

Recent developments across the continent suggest that humanoid robots are no longer confined to research labs or demonstrations. Instead, they are moving into early industrial testing, supported by a combination of established manufacturing expertise and growing investor interest.

Industrial Strength Becomes a Robotics Advantage

Companies such as Hexagon AB are advancing humanoid systems designed for industrial environments. Its Aeon robot is currently undergoing trials with manufacturing clients including BMW Group, with plans to scale production significantly by the end of the decade.

Executives at Hexagon have indicated that production could expand from small batches today to thousands of units within a few years, suggesting confidence that demand will follow once systems reach commercial readiness. The company expects to bring its humanoid platform to market as early as 2026, a timeline that reflects accelerated development cycles compared with traditional industrial robotics.

At the same time, Neura Robotics GmbH has raised approximately €1 billion in fresh capital, with backing from major technology players including Amazon and Qualcomm. The funding signals growing confidence that European robotics firms can compete in a category increasingly defined by scale and integration.

For many of these companies, humanoid robotics represents a natural extension of existing capabilities. Europe’s industrial base, particularly in precision engineering and automation, provides a foundation that can be adapted to new robotic form factors.

Automotive Supply Chains Drive Momentum

The region’s automotive ecosystem is playing a central role in this transition. Suppliers such as Bosch and Schaeffler are investing in robotics as they seek new growth avenues amid slowing demand in traditional vehicle markets.

Humanoid robots share key components with electric vehicles, including batteries, motors, sensors, and control systems. This overlap allows manufacturers to repurpose supply chains and engineering expertise, reducing barriers to entry compared with entirely new technology domains.

The trend is not limited to Europe. Companies such as Hyundai Motor Company have already moved aggressively into the space, notably through the acquisition of Boston Dynamics. Demonstrations of advanced humanoid systems at events like the Consumer Electronics Show have further amplified industry momentum and investor attention.

For European firms, the challenge is not starting from zero, but accelerating quickly enough to match competitors that have already made significant investments in AI and robotics platforms.

A Narrow Window to Scale

Analysts increasingly view humanoid robotics as a potential trillion-dollar market over the next decade, driven by labor shortages, aging populations, and the need for flexible automation. For Europe, the sector offers a rare opportunity to establish leadership in a next-generation technology category.

However, the window may be limited. Success will depend not only on technical capability but also on the ability to scale production and integrate software, hardware, and data systems into cohesive platforms.

Unlike earlier waves of automation, humanoid robotics requires coordination across multiple domains, from mechanical engineering to AI software and manufacturing. Europe’s strength lies in its ability to combine these elements, but execution will determine whether that advantage translates into global leadership.

The emerging push into humanoid robotics reflects a broader recalibration of Europe’s technology strategy. Having missed earlier waves in platform-scale AI, the region is now focusing on areas where physical engineering and industrial integration matter as much as software.

If humanoid systems move from pilot deployments to widespread adoption, Europe’s existing strengths could become a competitive edge. If not, the region risks repeating the pattern seen in previous technology cycles, where early capability did not translate into long-term dominance.

Saronic Raises $1.75 Billion to Scale Autonomous Ship Production

Saronic has raised $1.75 billion to expand autonomous ship production and shipyard infrastructure. The funding highlights a shift toward software-defined maritime manufacturing at industrial scale.

Saronic has raised $1.75 billion in a Series D round, pushing its valuation to $9.25 billion and positioning the company among the most heavily funded players in autonomous maritime systems. The investment underscores growing demand for AI-driven vessels and, more importantly, highlights a broader shift toward rebuilding industrial capacity around software-defined platforms.

Unlike many robotics startups focused primarily on autonomy software, Saronic is pursuing a vertically integrated model that combines vessel design, manufacturing, and deployment. The approach reflects increasing recognition that scaling physical AI systems requires not just intelligence, but production infrastructure.

Rebuilding Shipbuilding Around Autonomy

Saronic’s strategy centers on what it describes as an autonomy-first design philosophy. Rather than retrofitting existing vessels with autonomous capabilities, the company is developing ships from the ground up to operate without crews, integrating AI systems into core architecture.

This design approach is paired with investment in manufacturing. A key component is the development of “Port Alpha”, a next-generation shipyard intended to support high-throughput production of autonomous vessels. The company is also expanding existing facilities in Louisiana and Texas, signaling an effort to establish new shipbuilding capacity rather than rely on legacy infrastructure.

The emphasis on production reflects a structural challenge in the maritime sector. Shipbuilding capacity in the United States has declined over decades, limiting the ability to scale new platforms. By combining software-defined systems with modern manufacturing, Saronic is attempting to address both technological and industrial constraints simultaneously.

From Prototype Systems to Fleet-Scale Deployment

The funding will also support expansion of Saronic’s portfolio of autonomous surface vessels, ranging from smaller platforms such as the 24-foot Corsair to larger systems like the 180-foot Marauder. These vessels are designed for missions requiring extended range, endurance, and payload capacity, particularly in defense and maritime security contexts.

The company’s recent trajectory suggests a transition from prototype development to production. It reported completing the first hull of its Marauder platform within months of acquiring a shipyard, indicating an accelerated manufacturing timeline compared with traditional shipbuilding cycles.

This shift is reinforced by growing engagement with government customers, including a production contract with the U.S. Navy. Such partnerships provide both validation and demand signals, particularly as defense organizations explore autonomous systems to extend operational reach without increasing personnel requirements.

Investors in the round, led by Kleiner Perkins, emphasized that the combination of autonomy and manufacturing scale is relatively rare. The ability to produce systems consistently, rather than demonstrate isolated technical capability, is increasingly seen as a defining advantage in physical AI sectors.

Scaling Production as the New Competitive Edge

Saronic’s funding round reflects a broader trend in robotics and autonomous systems: the convergence of software innovation with industrial-scale production. As autonomy matures, the bottleneck is shifting from algorithmic capability to the ability to manufacture, deploy, and sustain systems in large numbers.

In the maritime domain, where platforms are capital-intensive and operational timelines span decades, this shift is particularly pronounced. Companies that can integrate AI with production infrastructure may be better positioned to define the next generation of naval and commercial fleets.

For Saronic, the challenge now lies in translating capital and ambition into sustained output. If successful, the company’s model could reshape not only how ships operate, but how they are built in the first place.

Toyota Introduces Swarm Automation System for Hybrid Warehouse Operations

Toyota Material Handling Europe has launched a coordinated AGV system designed to operate across mixed fleets. The platform reflects a shift toward scalable, software-driven warehouse automation.

Toyota Material Handling Europe has introduced a coordinated automated transport system aimed at simplifying how warehouses adopt and scale automation. The new platform, called Swarm Automation Transport, combines autonomous vehicles with centralized control software to manage material flows across mixed fleets.

The launch reflects a broader transition in warehouse robotics, where the focus is shifting from isolated automation deployments to systems that can operate alongside existing infrastructure. Rather than requiring full replacement of manual processes, the approach allows companies to introduce automation incrementally.

Coordinated Fleets Instead of Isolated Robots

At the core of the system is the integration of Toyota’s automated counterbalance stacker with its T-ONE software platform. The software orchestrates multiple vehicles, enabling them to coordinate tasks such as pallet transport, stacking, and replenishment across different parts of a facility.

This coordination model moves beyond traditional AGV deployments, where individual units are often assigned fixed routes or narrowly defined tasks. By contrast, the Swarm system enables dynamic task allocation across a fleet, allowing operations to adapt to changing warehouse conditions.
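
To make the contrast with fixed routes concrete, dynamic allocation can be pictured as a dispatcher that hands each pending job to whichever idle vehicle is closest at that moment. The sketch below is purely illustrative and is not a description of how T-ONE works; the `Vehicle` and `Task` types, the `allocate` function, the greedy nearest-vehicle rule, and the grid coordinates are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point = Tuple[float, float]


@dataclass
class Vehicle:
    # Hypothetical fleet member; not a Toyota data model.
    name: str
    position: Point
    busy: bool = False


@dataclass
class Task:
    # Hypothetical transport job with a pickup location on the floor grid.
    task_id: str
    pickup: Point


def manhattan(a: Point, b: Point) -> float:
    """Aisle-style travel distance between two floor positions."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def allocate(vehicles: List[Vehicle], tasks: List[Task]) -> Dict[str, str]:
    """Greedily give each task, in order, to the nearest idle vehicle.

    Tasks left over once every idle vehicle is taken stay unassigned
    and would wait for the next allocation round.
    """
    idle = [v for v in vehicles if not v.busy]
    assignments: Dict[str, str] = {}
    for task in tasks:
        if not idle:
            break
        nearest = min(idle, key=lambda v: manhattan(v.position, task.pickup))
        assignments[task.task_id] = nearest.name
        idle.remove(nearest)
    return assignments


vehicles = [
    Vehicle("agv-1", (0, 0)),
    Vehicle("agv-2", (10, 0)),
    Vehicle("agv-3", (5, 5), busy=True),  # already carrying a pallet
]
tasks = [
    Task("pallet-A", (9, 1)),
    Task("pallet-B", (1, 1)),
    Task("pallet-C", (5, 5)),
]

print(allocate(vehicles, tasks))
```

Because the assignment is recomputed from the current fleet state rather than read from a fixed route table, a vehicle that becomes free or a job that appears mid-shift simply changes the next round's result. A production system would presumably also weigh battery level, traffic, and task priority, none of which this toy version models.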

The platform is designed to handle a range of pallet formats, including euro pallets and bottom-deck configurations, and supports different loading orientations. This flexibility is critical in environments such as retail distribution and manufacturing, where standardization is often limited.

Importantly, the system can operate in hybrid environments alongside manual forklifts and other equipment. This reduces the operational disruption typically associated with automation rollouts and allows warehouses to scale deployment based on demand and budget constraints.

Integration and Scalability as Primary Drivers

Toyota positions the system as part of a broader ecosystem rather than a standalone product. The Swarm platform integrates with other automated equipment, including reach trucks, so that the combined fleet can also store and retrieve pallets at higher rack levels.

This layered approach reflects a growing emphasis on interoperability in warehouse automation. Instead of deploying single-purpose machines, operators are increasingly seeking systems that can connect multiple processes into a unified workflow.

The focus on scalability is also evident in the system’s design. Companies can begin with a limited number of vehicles handling repetitive transport tasks, such as buffer zone movement or replenishment, and expand the fleet over time as operational requirements evolve.

Energy and safety considerations are built into the platform as well. Lithium-ion batteries with automatic charging allow the vehicles to operate continuously, while a combination of sensors, scanners, and bumpers provides 360-degree awareness in mixed-traffic environments.

The introduction of Swarm Automation Transport underscores a shift in how industrial automation is being deployed. Rather than pursuing full autonomy in a single step, manufacturers are increasingly adopting hybrid models that blend automated and manual operations under shared control systems.

For Toyota, the system reinforces its strategy of lowering the barrier to entry for automation by emphasizing compatibility and gradual adoption. For warehouse operators, it reflects a pragmatic path forward, where the value of automation lies not just in replacing labor, but in coordinating complex operations more effectively.

As logistics networks continue to expand in scale and variability, such coordination systems may become a defining layer in how physical workflows are managed, linking individual machines into cohesive, software-driven operations.