Google has introduced a new AI model designed to improve how robots understand and operate in real-world environments, targeting one of the most persistent limitations in robotics: the ability to reason beyond predefined instructions.

The model, Gemini Robotics-ER 1.6, focuses on what researchers describe as embodied reasoning – the capacity for machines to interpret visual inputs, plan sequences of actions, and determine when a task has been successfully completed. The update reflects a broader shift in robotics from systems that execute commands to those that can make context-aware decisions in dynamic settings.

The model is being made available to developers through Google’s AI tooling ecosystem, positioning it as part of a growing effort to standardize software layers for physical AI.

Moving from Perception to Reasoning

Robotics systems have historically relied on separate modules for perception, planning, and control, often requiring extensive engineering to connect them. Gemini Robotics-ER 1.6 attempts to unify these functions, allowing robots to process visual information and translate it directly into action.

The model improves spatial reasoning, enabling robots to identify objects, understand their relationships, and break tasks into smaller steps. It can also track objects across multiple viewpoints, combining inputs from different cameras to build a more complete understanding of an environment.

This multi-view capability is particularly relevant in real-world settings, where occlusion, clutter, and changing conditions can limit the effectiveness of single-camera systems. By integrating multiple perspectives, robots can maintain situational awareness even when parts of a scene are temporarily hidden.
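To make the idea of combining camera views concrete, here is a minimal sketch. It is not how Gemini Robotics-ER 1.6 works internally, which the article does not describe. It assumes each camera already yields labelled detections in a shared world frame, and every name in it (`Detection`, `fuse_views`) is hypothetical. The sketch keeps the most confident sighting of each object and carries forward the last known position of anything currently hidden from all cameras.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Detection:
    """One hypothetical per-camera detection (illustrative only)."""
    label: str
    position: tuple[float, float, float]  # assumed shared world frame, metres
    confidence: float


def fuse_views(
    views: list[list[Detection]],
    previous: Optional[dict[str, Detection]] = None,
) -> dict[str, Detection]:
    """Merge detections from several cameras into one scene estimate.

    For each label, keep the most confident detection seen in this frame.
    Labels seen earlier but hidden now keep their last known entry.
    """
    seen_now: dict[str, Detection] = {}
    for detections in views:
        for det in detections:
            best = seen_now.get(det.label)
            if best is None or det.confidence > best.confidence:
                seen_now[det.label] = det

    fused = dict(previous or {})  # start from what was known before
    fused.update(seen_now)        # overwrite with anything visible now
    return fused


# Frame 1: the wrench is visible only from the side camera.
front = [Detection("mug", (0.40, 0.10, 0.0), 0.9)]
side = [
    Detection("mug", (0.41, 0.10, 0.0), 0.6),
    Detection("wrench", (0.20, 0.50, 0.0), 0.8),
]
state = fuse_views([front, side])

# Frame 2: the wrench is occluded in both views but stays in the estimate.
state = fuse_views([[Detection("mug", (0.50, 0.10, 0.0), 0.7)], []], previous=state)
```

A real system would also age out stale entries and reconcile disagreeing positions; both are omitted here for brevity.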

Another key advancement is success detection. The model allows robots to evaluate whether a task has been completed correctly, reducing reliance on external validation or rigid programming. This is a critical requirement for autonomous operation, particularly in environments where tasks may need to be repeated or adjusted in real time.
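The role of success detection in a control loop can be sketched as follows. This is an illustrative pattern, not Google's implementation: `act` and `check_success` are hypothetical callables standing in for the robot's action and for a model-backed check of whether the task is done, and `run_until_success` is a name invented here.

```python
from typing import Callable


def run_until_success(
    act: Callable[[], None],
    check_success: Callable[[], bool],
    max_attempts: int = 3,
) -> int:
    """Perform an action, verify the outcome, and retry if it failed.

    Returns the number of attempts used. Raises RuntimeError when the
    check still fails after max_attempts tries.
    """
    for attempt in range(1, max_attempts + 1):
        act()
        if check_success():
            return attempt
    raise RuntimeError(f"task not completed after {max_attempts} attempts")


# Simulated example: a drawer that only closes fully on the second push.
drawer = {"pushes": 0}


def push_drawer() -> None:
    drawer["pushes"] += 1


def drawer_closed() -> bool:
    return drawer["pushes"] >= 2


attempts = run_until_success(push_drawer, drawer_closed)
```

Because the check is separated from the action, the same loop works whether the verdict comes from a sensor, a human, or a vision model.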

Interpreting the Physical World

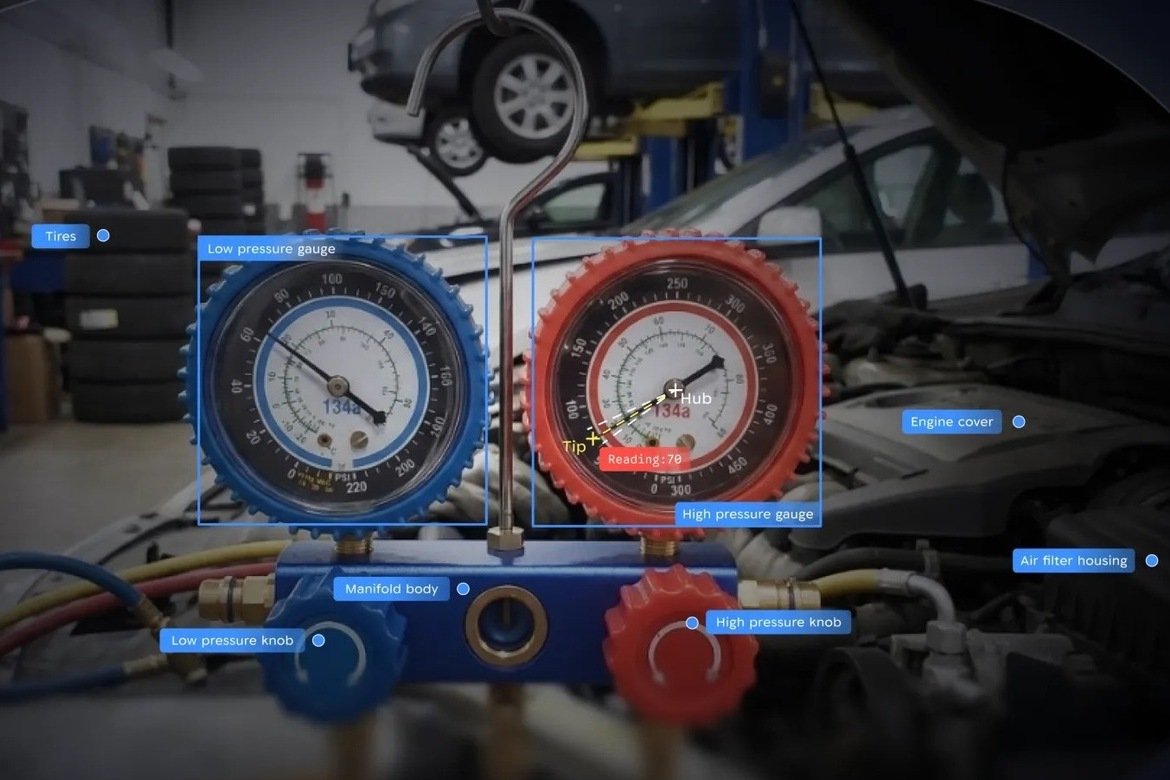

One of the more practical capabilities introduced in the model is the ability to read instruments such as gauges, meters, and digital displays. This function is particularly relevant for industrial and inspection applications, where robots must interpret physical indicators rather than purely digital data.

In collaboration with Boston Dynamics, the system has been applied to robots like Spot, which are used for facility monitoring. The model can analyze visual inputs, identify key components such as needles or numerical readouts, and calculate values with a high degree of accuracy.
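The last step, turning a detected needle into a number, can be illustrated with simple arithmetic. The sketch below is an assumption-laden example rather than the model's actual method: it assumes the needle angle has already been extracted from the image and that the dial scale is linear between its end marks. The function name `needle_to_value` and its parameters are hypothetical.

```python
def needle_to_value(
    needle_deg: float,
    min_deg: float,
    max_deg: float,
    min_value: float,
    max_value: float,
) -> float:
    """Map a needle angle to a reading by linear interpolation.

    min_deg and max_deg are the angles of the scale's end marks, and
    min_value and max_value are the readings printed at those marks.
    Angles outside the scale are clamped to the nearest end mark.
    """
    if max_deg == min_deg:
        raise ValueError("scale end marks must be at different angles")
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)
    fraction = min(1.0, max(0.0, fraction))
    return min_value + fraction * (max_value - min_value)


# A 0-10 bar gauge whose scale sweeps from -135 to +135 degrees:
# a needle pointing straight up (0 degrees) sits at mid-scale.
reading = needle_to_value(0.0, -135.0, 135.0, 0.0, 10.0)  # 5.0
```

Non-linear scales, such as logarithmic dials, would need a different mapping between the end marks.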

Reported gains in instrument reading are substantial: accuracy has risen from around 23% in earlier versions to over 90% in some scenarios, indicating that robots are becoming more capable of handling tasks that require precise interpretation of real-world signals.

The model also incorporates safety-aware reasoning, allowing robots to identify potential hazards and avoid unsafe interactions. This reflects an increasing emphasis on aligning robotic behavior with physical constraints, particularly as systems move into environments shared with humans.

Building a Software Layer for Physical AI

The release of Gemini Robotics-ER 1.6 highlights a broader trend toward treating robotics as a software problem as much as a hardware one. As companies race to develop humanoid and autonomous systems, the ability to generalize across tasks and environments is becoming a key differentiator.

Companies such as Nvidia have focused on simulation and training infrastructure, while Google’s approach emphasizes reasoning and decision-making at runtime. Together, these developments point toward a layered architecture for physical AI, where perception, reasoning, and control are increasingly integrated.

The remaining challenge is translating these capabilities into reliable real-world performance at scale. While models like Gemini Robotics-ER 1.6 demonstrate significant progress in controlled evaluations, deployment in complex environments will require further advances in robustness, data integration, and system design.

Google’s latest model suggests that robotics is entering a phase where intelligence is defined less by isolated capabilities and more by the ability to connect perception, reasoning, and action. As embodied AI systems become more capable of interpreting and responding to the physical world, the boundary between digital intelligence and physical execution continues to narrow.

The extent to which this translates into widespread adoption will depend on how quickly these systems can move from experimental demonstrations to dependable tools in industry and beyond.