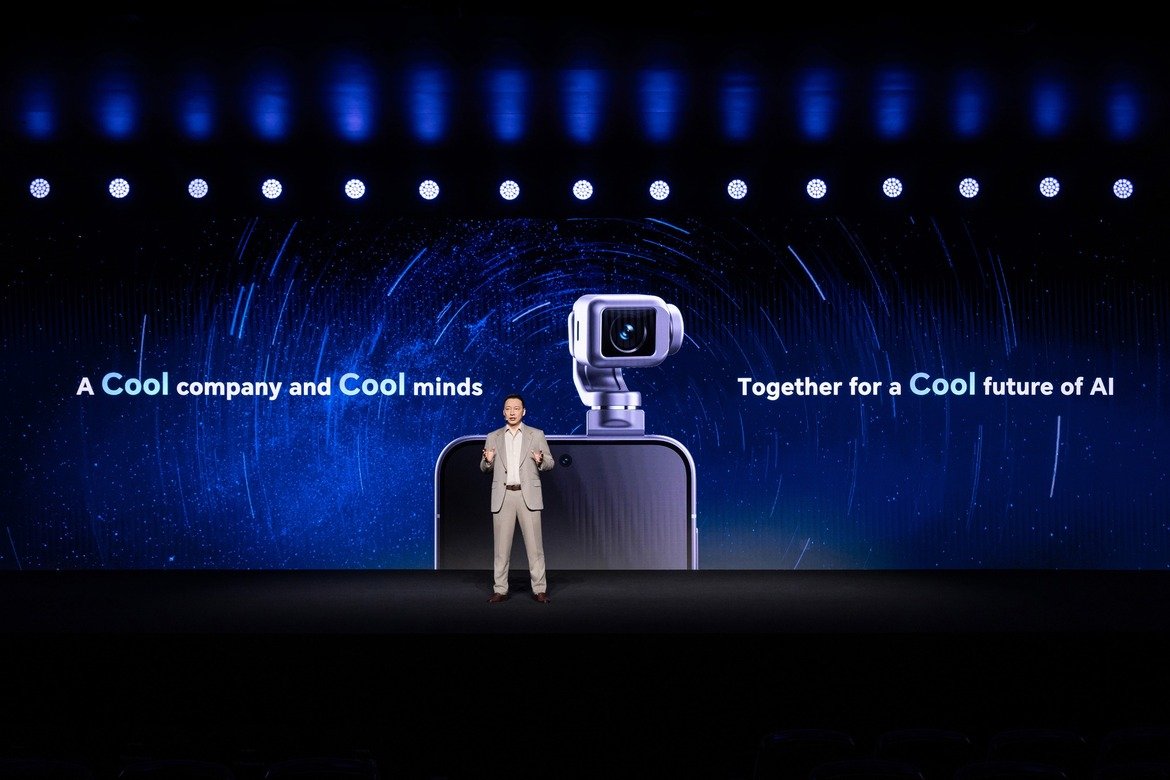

At Mobile World Congress 2026 in Barcelona, Chinese smartphone maker Honor introduced an experimental device that blends robotics and mobile computing: the “Robot Phone”. The concept smartphone features a 200-megapixel camera mounted on a miniature robotic gimbal arm capable of tracking users and responding to physical gestures.

The device reflects a broader push by consumer electronics companies to integrate robotics into everyday devices. Rather than limiting AI to software assistants or image processing, Honor’s concept brings mechanical motion and autonomous camera control directly into the smartphone form factor.

The company says the Robot Phone will launch commercially in China in the second half of 2026.

A Robotic Camera Built into a Smartphone

The most distinctive feature of the Robot Phone is its compact 4-degree-of-freedom (4-DoF) gimbal system. Using a custom micromotor assembly, the camera module can extend outward, rotate, and stabilize itself independently of the phone’s body.

This mechanical flexibility allows the device to track subjects in real time. The camera can follow a moving person during video recording or automatically reposition itself during video calls.

AI-powered tracking algorithms enable the system to detect motion, identify subjects, and adjust the camera's orientation accordingly. The camera can also rotate by 90 or 180 degrees for cinematic shots and supports features such as “AI SpinShot”, which creates dynamic rotating video perspectives.
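Honor has not published how its tracking works, but the general idea of steering a camera toward a detected subject can be illustrated with a simple proportional controller. The Python sketch below is purely hypothetical: the `GimbalState` class, the `track_step` function, the field-of-view values, the gain, and the angle limits are all assumptions made for illustration, not details of the Robot Phone.

```python
from dataclasses import dataclass


@dataclass
class GimbalState:
    pan_deg: float = 0.0   # rotation left/right (hypothetical axis)
    tilt_deg: float = 0.0  # rotation up/down (hypothetical axis)


def track_step(state, box_center, frame_size, fov_deg=(70.0, 50.0),
               gain=0.5, pan_limit=90.0, tilt_limit=45.0):
    """Return a new GimbalState nudged toward the detected subject.

    box_center: (x, y) pixel centre of the subject's bounding box.
    frame_size: (width, height) of the video frame in pixels.
    All numeric defaults are illustrative assumptions.
    """
    cx, cy = box_center
    width, height = frame_size
    # Normalised offset from the frame centre, in the range [-0.5, 0.5].
    off_x = cx / width - 0.5
    off_y = cy / height - 0.5
    # Approximate angular error from the offset, scaled by the gain.
    pan = state.pan_deg + gain * off_x * fov_deg[0]
    tilt = state.tilt_deg - gain * off_y * fov_deg[1]  # image y grows downward
    # Clamp to the assumed mechanical limits.
    pan = max(-pan_limit, min(pan_limit, pan))
    tilt = max(-tilt_limit, min(tilt_limit, tilt))
    return GimbalState(pan, tilt)


# A subject to the right of centre in a 1920x1080 frame pulls the pan angle right.
state = track_step(GimbalState(), box_center=(1440, 540), frame_size=(1920, 1080))
print(state)
```

Calling `track_step` once per frame moves the camera a fraction of the way toward the subject each time, which keeps the motion smooth rather than snapping. A real system would also need subject detection, motor control, and stabilization, none of which are modeled here.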

Honor designed the system primarily for creators, vloggers, and social media users who frequently record hands-free video.

AI Interaction Moves from Screen to Motion

Beyond simple tracking, the robotic camera module can respond to user gestures. During demonstrations, the phone’s camera arm was shown nodding or shaking slightly, mimicking human gestures as it followed a user.

This capability reflects a growing trend toward devices that combine AI perception with physical movement. By integrating robotics directly into the smartphone, Honor is exploring new ways for devices to interact with users in physical space rather than through touchscreens alone.

To improve imaging quality, Honor has partnered with ARRI, a well-known manufacturer of professional cinema cameras. The collaboration aims to adapt professional color science and imaging technologies for mobile devices.

If successful, the concept could narrow the gap between smartphone cameras and dedicated filmmaking equipment.

Part of a Broader AI and Robotics Ecosystem

The Robot Phone was introduced alongside several other products at MWC 2026, including the Magic V6 foldable smartphone, the MagicPad 4 tablet, and the MagicBook Pro 14 laptop.

Together, these devices form part of Honor’s strategy to build an AI-powered ecosystem connecting mobile devices, computing platforms, and robotics.

The company also demonstrated a humanoid robot designed for service and assistance roles. The robot is intended for applications such as retail support, workplace inspection, and general customer assistance.

By linking these devices through shared AI systems, Honor aims to create what it calls “augmented human intelligence”, where digital assistants can interact with users in both virtual and physical environments.

Consumer Robotics Begins to Enter Everyday Devices

The Robot Phone illustrates how robotics concepts are beginning to move beyond dedicated robots into mainstream consumer electronics.

Smartphones have long incorporated advanced sensors and AI processing, but the addition of mechanical motion introduces a new dimension of interaction. Cameras that can physically reposition themselves could improve video calls, content creation, and augmented reality experiences.

While it remains to be seen whether robotic camera systems will become a standard feature in smartphones, the concept reflects how the boundaries between robotics, AI, and personal devices are increasingly blurring.

As companies experiment with new hardware designs, robotics may gradually become part of mainstream consumer technology – not just as standalone machines, but embedded within the devices people carry every day.