The global market for humanoid and quadruped robots is entering a decisive growth phase, with shipments projected to reach 810,000 units by 2030, according to new industry forecasts from Smart Analytics Global (SAG). The shift reflects a broader transition from early-stage experimentation to real-world deployment across logistics, manufacturing, and service industries.

Recent data shows the pace of expansion is already accelerating, reports AIstify. Global shipments reached nearly 53,000 units in 2025, representing a 250% year-over-year increase, while total market revenue approached $1 billion. By the end of the decade, the market is expected to scale to $8 billion, supported by sustained double-digit growth.
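For readers who want to check what "sustained double-digit growth" implies, the quoted figures can be turned into compound annual growth rates. The sketch below is illustrative only and is not part of the SAG forecast. It assumes 2025 as the base year and 2030 as the end year for both units and revenue, and it treats the rounded figures ("nearly 53,000", "approached $1 billion") as exact. The function and variable names are our own.

```python
# Rough sketch: growth rates implied by the figures quoted in the article.
# Assumptions (ours, not SAG's): base year 2025, end year 2030, and the
# rounded article figures are treated as exact values.

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from start to end."""
    return (end / start) ** (1 / years) - 1


def prior_year_value(current: float, pct_increase: float) -> float:
    """Value one year earlier, given a year-over-year percentage increase."""
    return current / (1 + pct_increase / 100)


units_2025 = 53_000          # "nearly 53,000 units"
units_2030 = 810_000         # projected shipments by 2030
revenue_2025_usd_bn = 1.0    # "approached $1 billion"
revenue_2030_usd_bn = 8.0    # expected market size by end of decade
years = 2030 - 2025

unit_cagr = cagr(units_2025, units_2030, years)
revenue_cagr = cagr(revenue_2025_usd_bn, revenue_2030_usd_bn, years)
implied_units_2024 = prior_year_value(units_2025, 250)  # 250% increase = 3.5x

print(f"Implied unit CAGR 2025-2030:    {unit_cagr:.1%}")
print(f"Implied revenue CAGR 2025-2030: {revenue_cagr:.1%}")
print(f"Implied 2024 shipments:         {implied_units_2024:,.0f}")
```

Under these assumptions, shipments would need to grow by roughly 73% a year and revenue by roughly 52% a year, from an implied 2024 base of about 15,000 units. Because units grow faster than revenue in this calculation, the figures also imply a falling average revenue per unit over the period.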

The defining change is not just technological progress, but demand. After years of testing and pilot programs, companies are now integrating robots directly into operational workflows where labor shortages, safety requirements, and efficiency pressures are most acute.

Enterprise Adoption Becomes the Primary Growth Driver

The next phase of growth will be driven primarily by enterprise adoption rather than experimentation. Early deployments focused on validation and proof-of-concept, but that cycle is now reaching its limits.

“The robotics industry delivered strong growth in 2025, but the real test lies ahead,” said Yiwen Wu, Lead Research Advisor at Smart Analytics Global. “Enterprise adoption will be the key. Only vendors that can scale real-world deployments will define the next phase of the industry.”

Quadruped robots are currently leading in real-world use cases, particularly in inspection, security, and industrial monitoring. Their ability to navigate uneven terrain and operate in hazardous environments has made them easier to commercialize at scale.

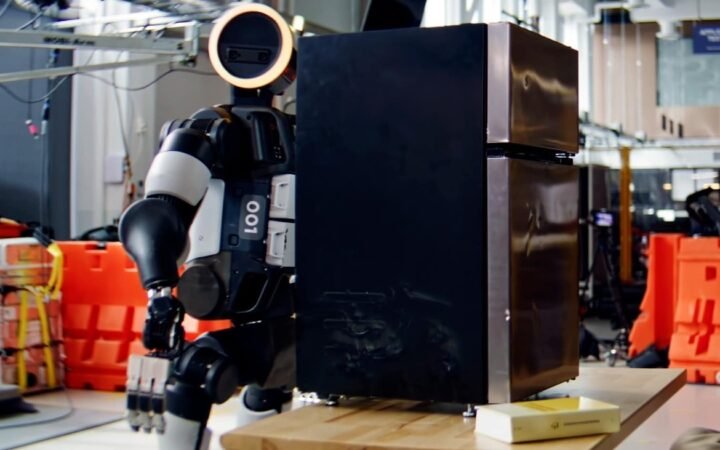

Humanoid robots, by contrast, remain earlier in deployment but are attracting significantly more investment and policy support. Their long-term potential lies in operating within human-designed environments, from warehouses and retail to healthcare and household applications.

This creates a dual-track market: quadrupeds drive immediate adoption, while humanoids dominate long-term strategic positioning.

China Dominates Hardware While Global Competition Intensifies

The geographic distribution of the market reveals a clear imbalance. Chinese companies accounted for approximately 85% of global shipments in 2025, with China itself absorbing more than 60% of total demand.
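To put those shares in unit terms, they can be applied to the 2025 shipment total. This is a back-of-the-envelope sketch, not a figure from the forecast. It assumes the "nearly 53,000" total is exact and treats "approximately 85%" and "more than 60%" as 0.85 and 0.60, so the demand figure is a lower bound. The variable names are our own.

```python
# Rough sketch: converting the quoted market shares into unit counts.
# Assumptions (ours): 53,000 total shipments in 2025, a supplier share of
# exactly 85%, and a China demand share of exactly 60% (a lower bound).

total_units_2025 = 53_000
chinese_vendor_share = 0.85   # "approximately 85% of global shipments"
china_demand_share = 0.60     # "more than 60% of total demand"

chinese_vendor_units = round(total_units_2025 * chinese_vendor_share)
china_demand_units_min = round(total_units_2025 * china_demand_share)
other_vendor_units = total_units_2025 - chinese_vendor_units

print(f"Units shipped by Chinese companies: ~{chinese_vendor_units:,}")
print(f"Units absorbed by China (at least): ~{china_demand_units_min:,}")
print(f"Units shipped by all other vendors: ~{other_vendor_units:,}")
```

On these assumptions, Chinese companies shipped roughly 45,000 units, at least about 32,000 units stayed in China, and vendors from the rest of the world shipped about 8,000.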

Companies such as Unitree Robotics, Agibot, DOBOT, and Galbot are scaling production rapidly, leveraging manufacturing efficiency to capture early market share. Unitree alone held a leading position across both segments, with a particularly dominant share in quadruped robots.

At the same time, Western companies are maintaining an advantage in software, AI models, and advanced research. Firms like Boston Dynamics, Tesla, and Amazon are focusing on autonomy, perception systems, and large-scale AI integration.

This divergence is shaping a fragmented but complementary global landscape, where leadership is split across hardware manufacturing, software intelligence, and regulatory frameworks. South Korea is increasing investment in robotics, while Europe continues to specialize in safety, certification, and high-value industrial applications.

Looking ahead, analysts expect consolidation pressure to increase as the market matures. Vendors that expanded production ahead of proven demand may face challenges, while others with strong deployment pipelines could emerge as dominant players.

The result is a market approaching a critical inflection point. Robotics is no longer defined by technical capability alone – it is increasingly shaped by scalability, economics, and the ability to operate reliably in the real world.