The ability to teach robots complex physical skills has long depended on carefully curated training data and highly controlled demonstrations. A new research project suggests that requirement may be loosening.

Researchers from Tsinghua University and robotics company Galbot have demonstrated a humanoid robot capable of learning tennis using imperfect human motion clips rather than idealized training data. The system, called LATENT, enabled a Unitree G1 humanoid robot to improve rapidly in real-world play – eventually, by the project lead's account, outplaying him in rallies.

The project highlights a growing shift in robotics toward learning systems that can extract usable behaviors from messy, incomplete demonstrations. Instead of relying on precise instruction, the robot learns how to combine imperfect examples into effective motion strategies.

According to project lead Zhikai Zhang, the robot’s progress was strikingly fast. On its first day of real-world deployment, it failed to return a single serve. By the final day of testing, Zhang reported that he could no longer beat the robot in rallies.

🎾 Introducing LATENT: Learning Athletic Humanoid Tennis Skills from Imperfect Human Motion Data

Dynamic movements, agile whole-body coordination, and rapid reactions. A step toward athletic humanoid sports skills.

Project: https://t.co/MFy2NIOsrn

Code: https://t.co/A7B5H8PIBh pic.twitter.com/vOnEzkCHXC

Zhikai Zhang (@Zhikai273), March 15, 2026

Teaching Robots with Imperfect Demonstrations

Traditional robotic skill learning typically requires large datasets of clean, carefully labeled demonstrations. Capturing these datasets can be expensive and time-consuming, particularly for tasks involving dynamic whole-body motion such as sports.

The LATENT system approaches the problem differently. Instead of relying on perfect motion capture data, the system trains on fragmented human tennis clips that include errors, inconsistencies, and incomplete movements.

From these clips, the model constructs what the researchers call a latent action space – a structured representation of primitive movements extracted from imperfect examples. A higher-level AI policy then functions as a coordinating controller, selecting and refining those primitive actions to produce effective gameplay behavior.
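To make the two-level idea concrete, here is a minimal toy sketch in Python. It is not the LATENT implementation: the primitive names, the trajectories, the thresholds, and the correction rule are all hypothetical stand-ins, hand-written here where a real system would learn them from motion clips. It only illustrates the division of labor described above, with a higher-level policy that selects a primitive and then applies a small refinement to it.

```python
# Illustrative only: hand-written stand-ins for primitives that a real
# system would learn from motion clips. All names and values are hypothetical.
PRIMITIVES = {
    "forehand": [0.0, 0.4, 0.8, 0.4],    # toy shoulder-angle offsets (rad)
    "backhand": [0.0, -0.4, -0.8, -0.4],
    "ready": [0.0, 0.0, 0.0, 0.0],
}

MAX_CORRECTION = 0.1  # clip refinements so they stay close to the primitive


def high_level_policy(ball_x):
    """Pick a primitive and a small correction from the ball's lateral offset (m)."""
    if ball_x > 0.2:
        name = "forehand"
    elif ball_x < -0.2:
        name = "backhand"
    else:
        name = "ready"
    # The refinement is a clipped offset proportional to the ball position.
    correction = max(-MAX_CORRECTION, min(MAX_CORRECTION, 0.25 * ball_x))
    return name, correction


def act(ball_x):
    """Return the chosen primitive name and its refined trajectory."""
    name, correction = high_level_policy(ball_x)
    refined = [q + correction for q in PRIMITIVES[name]]
    return name, refined


if __name__ == "__main__":
    for x in (-0.6, 0.0, 0.6):
        print(x, act(x))
```

Clipping the correction reflects one plausible design choice: the controller may adjust a primitive, but only within a narrow band, so the resulting motion stays close to something a human actually demonstrated.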

The training process occurs first in simulation, where the robot practices thousands of interactions without risk. Once the controller stabilizes, the policy is transferred to a physical robot using sim-to-real techniques, allowing the learned behaviors to operate on the Unitree G1 humanoid platform.
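The article does not say which sim-to-real techniques the team used. One widely used option is domain randomization, in which physical parameters of the simulator are resampled for every training episode so the policy cannot overfit to one exact simulated world. The sketch below shows only that sampling step; the parameter names and ranges are assumptions made up for illustration, not values from the project.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Physical settings for one simulated episode (hypothetical fields)."""
    ball_restitution: float      # how bouncy the ball is
    motor_strength_scale: float  # multiplier on nominal motor torque
    observation_delay_s: float   # sensing latency in seconds


# Hypothetical ranges chosen for illustration, not measured values.
RANGES = {
    "ball_restitution": (0.70, 0.85),
    "motor_strength_scale": (0.90, 1.10),
    "observation_delay_s": (0.00, 0.03),
}


def sample_params(rng):
    """Draw one set of simulator parameters uniformly from RANGES."""
    return SimParams(**{k: rng.uniform(lo, hi) for k, (lo, hi) in RANGES.items()})


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so runs are repeatable
    for episode in range(3):
        # A real training loop would configure the simulator with these
        # parameters and then run one practice episode.
        print(episode, sample_params(rng))
```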

This approach allows the robot to learn usable skills even when the input demonstrations are flawed, something that more traditional robotics pipelines struggle to accommodate.

Why Messy Data May Be the Future of Robot Training

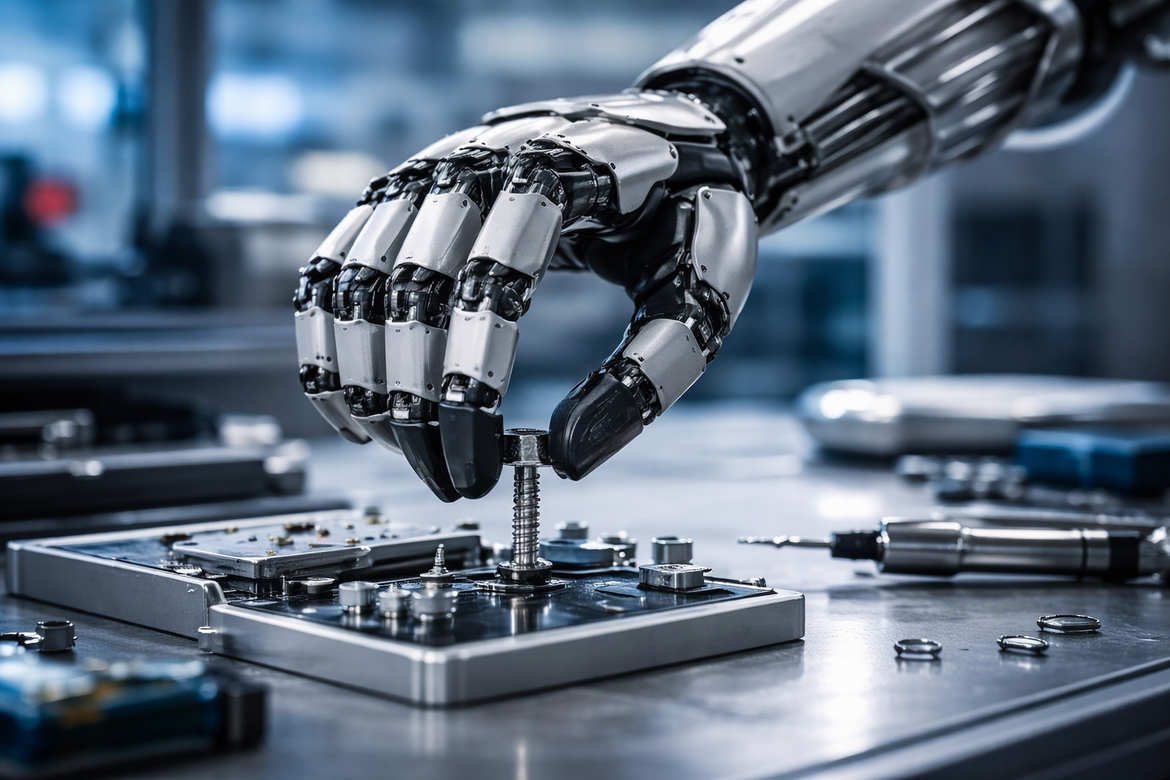

While a tennis-playing robot may look like a demonstration project, the underlying method addresses a broader challenge in robotics: scaling physical skill acquisition.

Most robots today still depend on highly structured environments and carefully engineered training pipelines. In real-world settings such as warehouses, construction sites, or disaster response zones, collecting perfect demonstrations is rarely practical.

Learning from imperfect data could significantly lower the barrier to training robots for complex tasks. Instead of requiring a flawless example every time, robots could observe ordinary human activity and gradually assemble functional behaviors.

This shift mirrors trends already underway in large-scale AI systems, where models increasingly learn from vast amounts of noisy real-world data rather than tightly curated datasets.

For robotics, the implications are particularly significant because physical tasks often involve unpredictable conditions, subtle motor control, and continuous feedback from the environment. Systems that can tolerate imperfect training signals may adapt more quickly to these realities.

A Testbed for Embodied AI

Sports have become a useful proving ground for embodied AI research because they combine perception, motion planning, balance control, and fast decision-making.

Tennis, in particular, requires precise timing, whole-body coordination, and real-time reaction to an unpredictable opponent. Successfully sustaining rallies demonstrates that a robot can integrate visual perception with dynamic locomotion and arm control.

In this case, the tennis court served as a compact test environment for evaluating how well the LATENT system could convert imperfect demonstrations into coordinated action.

The research team has made the project details and code publicly available, allowing other researchers to replicate and extend the approach.

If the underlying method proves scalable, it could influence how robots are trained for tasks far beyond sports – from industrial manipulation to collaborative human-robot work. Instead of waiting for perfect datasets, robots may increasingly learn from the same imperfect movements humans produce every day.