Boston Dynamics has integrated a new generation of AI models from Google into its industrial inspection platform, marking a step toward more autonomous and context-aware robotics in real-world environments.

The update brings Google’s Gemini and Gemini Robotics-ER 1.6 models into Boston Dynamics’ Orbit AIVI-Learning system, which powers inspection workflows for robots such as Spot. The integration reflects a broader shift in robotics toward combining physical systems with advanced reasoning models capable of interpreting complex environments and making decisions in real time.

The upgrade is already live for existing AIVI-Learning customers, and the company is positioning it as a foundational improvement in how robots understand and monitor industrial sites.

From Detection to Interpretation

Industrial inspection has traditionally relied on rule-based systems that identify predefined objects or anomalies. The integration of Gemini introduces a different approach, where robots can analyze scenes more holistically and reason about what they observe.

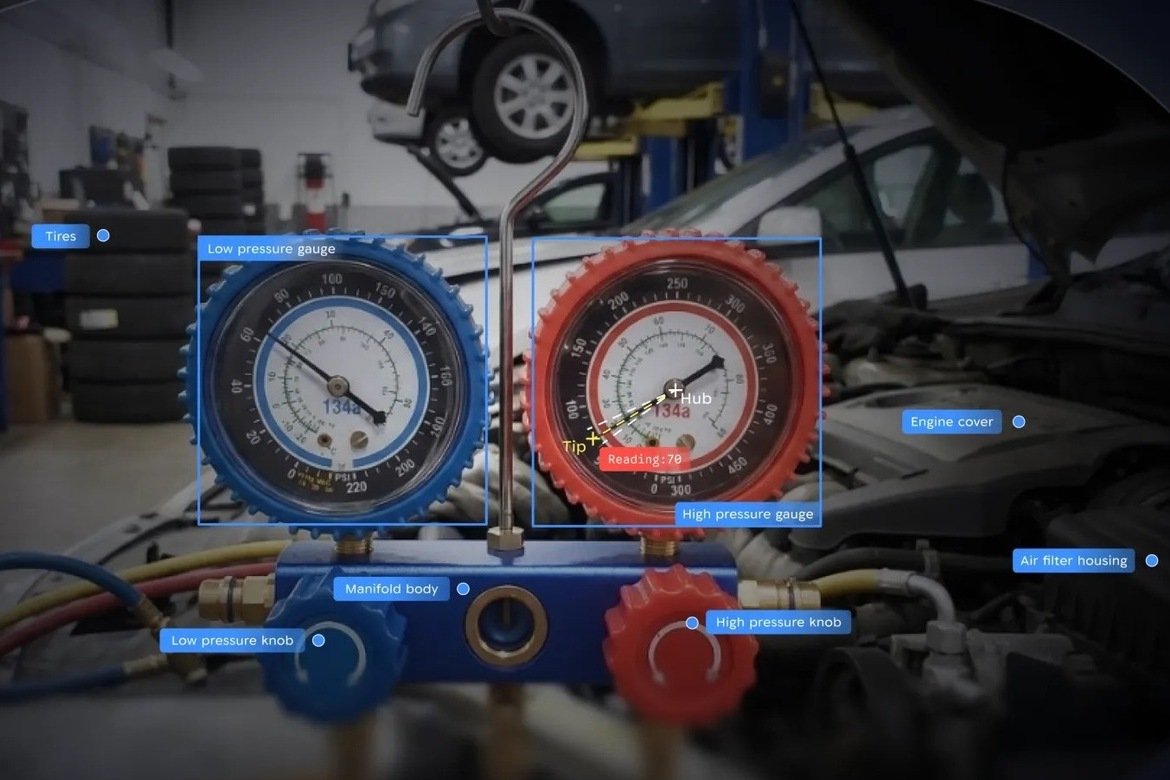

Using the updated system, Spot can perform tasks such as reading gauges, assessing fluid levels, counting materials, and identifying safety hazards like spills or debris. These capabilities extend beyond simple detection, requiring the robot to interpret visual signals and determine their operational significance.

This shift is particularly important in environments where conditions are dynamic and difficult to model in advance. Rather than relying on static rules, the system can adapt to new scenarios, enabling broader deployment across facilities with varying layouts and equipment.

The addition of “transparent reasoning” features also allows operators to review how the system arrives at its conclusions. That visibility into AI-driven decisions is becoming an increasingly important requirement in industrial settings.

Continuous Learning in Live Environments

A defining feature of the updated platform is its ability to improve over time through continuous data collection and model updates. The system operates as a cloud-connected service, allowing performance improvements to be deployed without interrupting operations.

This “zero-downtime” update model reflects a shift toward treating robotics systems as evolving software platforms rather than static hardware installations. As new data is collected from deployed robots, the models can be refined to better understand specific environments and use cases.

The approach also raises questions about data sharing. Customers using AIVI-Learning are required to share operational data with Boston Dynamics to enable ongoing model training, which underscores the growing role of data as a core component of robotics performance.

Toward Site-Wide Intelligence

Boston Dynamics frames the integration as a move toward “site-wide intelligence”, where robots contribute to a unified understanding of industrial operations. By combining visual inspection data with higher-level reasoning, the system aims to provide insights across safety, maintenance, and logistics.

This aligns with a broader industry trend toward physical AI systems that integrate perception, reasoning, and action. Companies such as Nvidia have emphasized similar approaches, focusing on the convergence of simulation, AI models, and robotics hardware.

In practical terms, the upgraded system enables Spot to handle more complex inspection workflows, from monitoring equipment health to tracking material movement. The ability to interpret gauges and other analog instruments is particularly relevant in industries where digital integration remains incomplete.

The integration of Gemini into Boston Dynamics’ inspection platform highlights how quickly robotics is evolving from task-specific automation to more generalized, intelligent systems. By bringing reasoning capabilities to the inspection workflows of deployed robots, companies are beginning to close the gap between perception and decision-making.

The remaining challenge lies in scaling these systems across diverse environments while maintaining reliability and trust. As robots take on more responsibility in industrial settings, their ability to explain and justify decisions may become as important as their technical performance.