Elon Musk has announced plans for a massive semiconductor manufacturing project aimed at producing specialized AI chips for Tesla vehicles, humanoid robots, and space systems.

The facility, called Terafab, will be built near Austin, Texas, and jointly developed by Tesla and SpaceX. According to Musk, the plant will produce custom processors designed for the artificial intelligence workloads used in autonomous driving, robotics, and satellite-based computing.

In a post on X dated March 22, 2026, Musk (@elonmusk) wrote:

"SpaceXAI + Tesla TERAFAB Project. Goal is a trillion watts of compute/year. Most must necessarily go to space, as US electricity is only 0.5TW." https://t.co/hMtg9vNLcw

The project reflects a growing shift in the AI industry toward vertically integrated hardware ecosystems, where companies design their own chips to support increasingly complex AI systems.

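Terafab’s planned output could reach one terawatt of compute per year. The figure is expressed in power rather than chip count, which suggests it refers to the combined power draw of the chips produced annually. That scale is intended to support the expanding compute requirements of Musk’s companies.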

Building Chips for Physical AI

The chips produced at Terafab are expected to serve multiple applications across Musk’s technology portfolio.

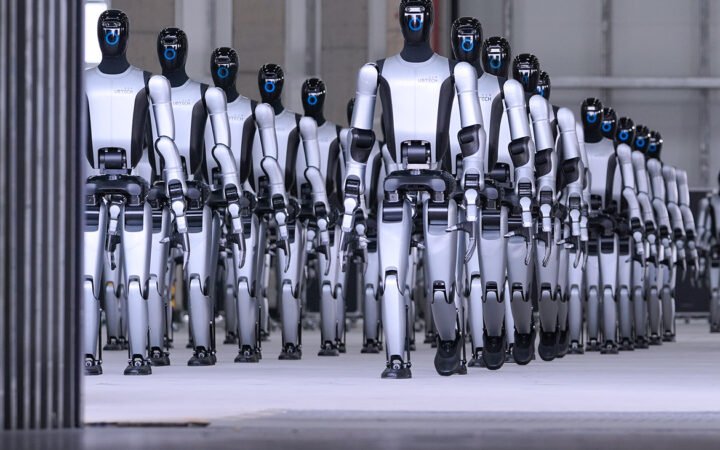

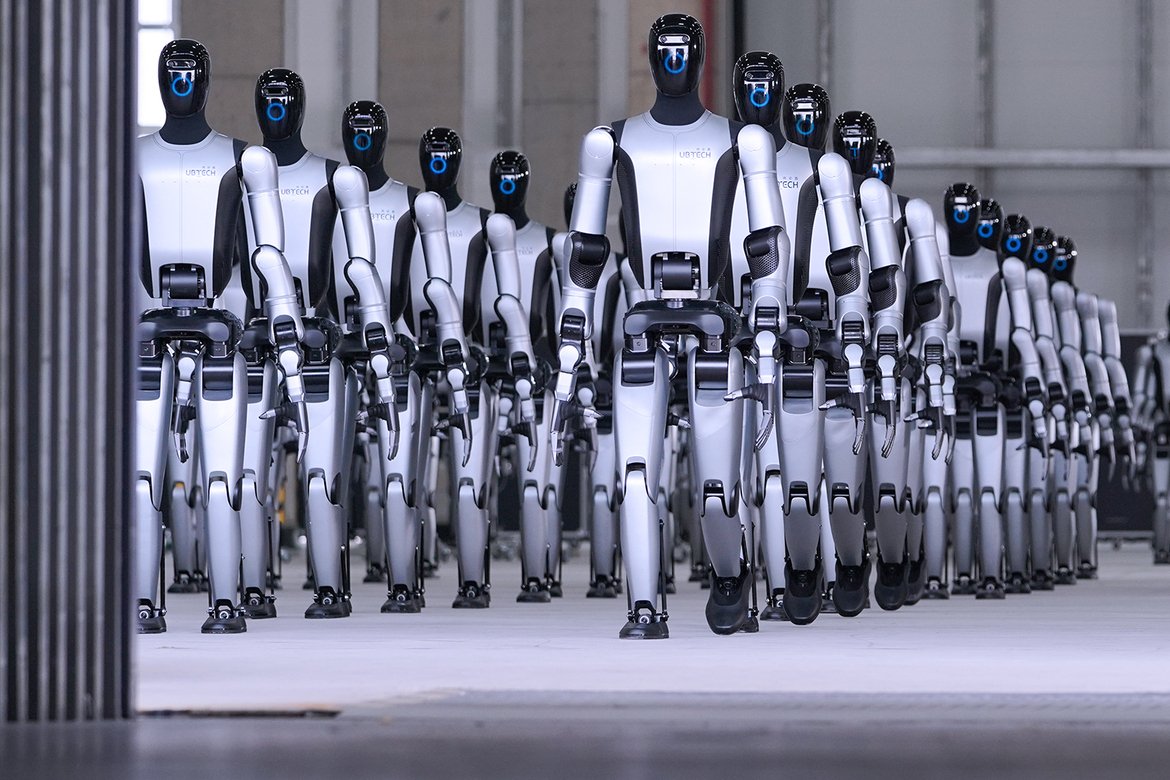

One category will focus on edge and inference computing, powering real-time AI decision-making in Tesla vehicles, robotaxis, and the company’s humanoid robot platform, Optimus. These chips are optimized for running trained AI models directly on devices where latency and energy efficiency are critical.

A second category will target high-performance AI training, supporting xAI models and large-scale data processing for SpaceX satellite systems.

As robotics and autonomous systems become more sophisticated, the demand for specialized AI processors has surged. Standard GPUs designed for cloud computing are often inefficient for real-time robotic systems that must process sensor data, control motors, and make split-second decisions.

By designing chips tailored to these tasks, companies aim to improve both performance and energy efficiency over general-purpose hardware.

A Compute Backbone for Musk’s Ecosystem

Terafab is part of a broader strategy to integrate hardware and AI development across Musk’s companies.

Tesla’s autonomous driving systems rely heavily on AI models that process visual and sensor data from vehicles. Meanwhile, Tesla’s Optimus humanoid robot is expected to require similar computing capabilities for perception, navigation, and manipulation tasks.

SpaceX also has growing computing demands. Musk has suggested that future satellite networks could support orbital data centers, enabling AI processing in space for applications ranging from communications to scientific analysis.

Under the Terafab plan, these systems would share a common computing architecture built around custom chips produced at the facility.

The project also reflects Musk’s increasing emphasis on AI as the central technology linking his companies. Tesla, SpaceX, and xAI are all developing systems that rely on large-scale machine learning models operating in physical environments.

A Massive Manufacturing Bet

The proposed facility represents one of the largest semiconductor manufacturing ambitions from a company outside the traditional chipmaking giants.

Initial construction is expected to begin in Texas before expanding production capacity over time. Musk has suggested that the long-term vision could extend beyond terrestrial computing infrastructure.

Ground-based production targets are 100 to 200 gigawatts of compute capacity. The broader concept includes eventually supporting space-based computing systems with up to a terawatt of capacity.
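To put the quoted figures side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers cited in this article (a 1 TW target, Musk's 0.5 TW figure for US electricity, and the 100 to 200 GW ground-based range). The function names are illustrative, and reading "watts of compute" as continuous power draw is an assumption, not something the announcement spells out.

```python
# Figures quoted in the article; everything else is derived arithmetic.
TARGET_TW = 1.0                # "a trillion watts of compute/year"
US_ELECTRICITY_TW = 0.5        # Musk's stated figure for US electricity
GROUND_GW_RANGE = (100, 200)   # ground-based production target, in GW

HOURS_PER_YEAR = 24 * 365      # 8,760 hours in a non-leap year


def ground_share(ground_gw: float, target_tw: float = TARGET_TW) -> float:
    """Fraction of the target covered by ground-based capacity."""
    return (ground_gw / 1000) / target_tw


def space_remainder_tw(ground_gw: float, target_tw: float = TARGET_TW) -> float:
    """Capacity (in TW) left over for space-based systems."""
    return target_tw - ground_gw / 1000


def annual_energy_twh(power_tw: float) -> float:
    """Energy used in one year if power_tw were drawn continuously (assumption)."""
    return power_tw * HOURS_PER_YEAR


if __name__ == "__main__":
    for gw in GROUND_GW_RANGE:
        print(
            f"{gw} GW on the ground = {ground_share(gw):.0%} of target, "
            f"leaving {space_remainder_tw(gw):.1f} TW for space"
        )
    ratio = TARGET_TW / US_ELECTRICITY_TW
    print(f"Target is {ratio:.0f}x the quoted US electricity figure")
    print(f"1 TW drawn continuously = {annual_energy_twh(TARGET_TW):,.0f} TWh per year")
```

On these numbers, the ground-based range covers 10 to 20 percent of the one-terawatt goal, and the goal is twice the quoted US electricity figure. That matches Musk's statement that most of the capacity would have to be deployed in space.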

Such scale would dramatically increase the computational capacity available for training AI models and operating distributed intelligent systems across vehicles, robots, and satellites.

The Hardware Race Behind AI

The Terafab announcement underscores a broader industry trend: the race to build specialized computing infrastructure for artificial intelligence.

As AI expands beyond software into physical systems – autonomous vehicles, humanoid robots, and industrial machines – companies are increasingly designing hardware optimized for these workloads.

For Musk, the strategy aims to create a tightly integrated ecosystem where chips, software, and machines evolve together.

If successful, Terafab could become a central piece of infrastructure powering Tesla’s robots, SpaceX’s satellites, and the next generation of AI-driven machines operating both on Earth and in orbit.