Monthly Archives: February 2026

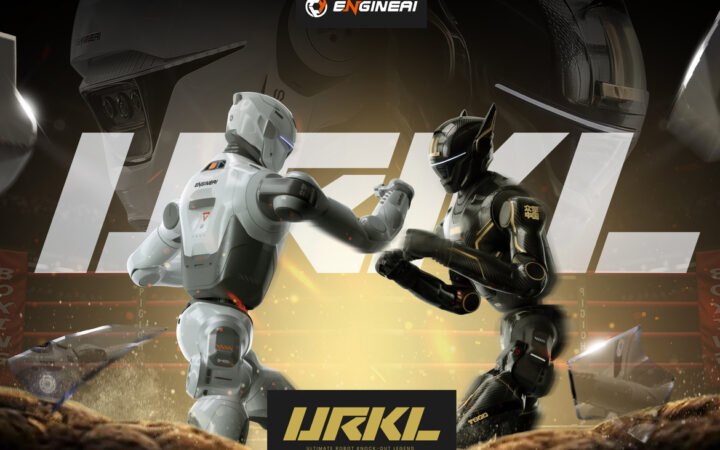

AGIBOT Unveils A3 Humanoid Robot with High-Impact Martial Arts Demonstration

AGIBOT introduced its full-size A3 humanoid robot in Shanghai, showcasing dynamic martial arts movements that signal progress in balance control and real-time motion planning. The demonstration highlights intensifying competition in China’s humanoid robotics sector.

Chinese robotics company AGIBOT presented its latest full-size humanoid robot, the A3, on Friday in Shanghai, marking one of the company’s most visible demonstrations of dynamic embodied intelligence to date. The event positions AGIBOT more directly in the race to build agile, general-purpose humanoids capable of operating beyond controlled lab environments.

The unveiling comes amid intensified competition in China’s humanoid robotics sector, where companies are moving rapidly from static walking demos toward machines capable of athletic, high-load maneuvers.

From Stable Walking to Dynamic Motion

In public demonstrations, the A3 executed mid-air kicks, rapid spinning strikes, and complex coordinated sequences reminiscent of martial arts training. While such movements may appear theatrical, they serve a technical purpose: stress-testing whole-body control systems under extreme dynamic conditions.

Humanoid robots historically struggled with balance recovery and high-speed motion due to limitations in actuator torque, joint responsiveness, and latency in perception-to-action loops. Movements such as jumping kicks require synchronized control of hips, knees, ankles, and upper-body counterbalances, all coordinated in milliseconds.

The A3’s performance suggests improvements in three critical areas:

- High power-density actuators capable of explosive bursts without destabilizing the platform

- Advanced balance algorithms for real-time center-of-mass adjustment

- Low-latency motion planning systems that integrate perception and control

Unlike choreographed walking sequences, airborne maneuvers introduce transient instability. A robot must predict landing forces and pre-adjust joint stiffness before ground contact. This type of anticipatory control is a stepping stone toward real-world tasks such as climbing debris, navigating uneven terrain, or handling dynamic industrial environments.
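The landing prediction described above can be illustrated with basic ballistics. The sketch below is not AGIBOT's controller; it assumes a simple point-mass fall, and the function name `predict_landing` and every number passed to it are hypothetical. It estimates how long the robot has before touchdown, how fast it will be moving, and the mean force its legs must absorb if they decelerate the body over a given crouch depth (from the work-energy balance m·g·(h + d) = F·d).

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def predict_landing(drop_height_m, mass_kg, crouch_depth_m):
    """Point-mass estimate of touchdown time, touchdown speed, and mean leg force.

    All inputs are illustrative assumptions, not published A3 specifications.
    """
    if drop_height_m < 0 or mass_kg <= 0 or crouch_depth_m <= 0:
        raise ValueError("height must be >= 0; mass and crouch depth must be > 0")
    # Time and speed of a free fall from rest over drop_height_m.
    fall_time_s = math.sqrt(2.0 * drop_height_m / G)
    touchdown_speed_mps = math.sqrt(2.0 * G * drop_height_m)
    # Mean force needed to stop the body within crouch_depth_m.
    mean_force_n = mass_kg * G * (1.0 + drop_height_m / crouch_depth_m)
    return fall_time_s, touchdown_speed_mps, mean_force_n
```

Under these assumptions, a shallower crouch raises the mean force sharply, which is one reason a controller would want to set joint stiffness before contact rather than react after it.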

Athleticism as a Benchmark for Physical Intelligence

The demonstration reflects a broader shift in how robotics companies measure progress. Early humanoid milestones focused on bipedal locomotion. The next phase emphasizes agility, resilience, and coordinated whole-body action.

Dynamic martial arts-style routines push hardware and software simultaneously. They test actuator durability, thermal management, sensor fusion, and high-frequency control loops under stress. More importantly, they showcase integrated embodied intelligence, where perception, prediction, and actuation operate as a unified system.

This shift mirrors trends across the sector. Chinese competitor Unitree Robotics has also accelerated development of high-mobility humanoids, emphasizing speed and balance recovery. The competitive landscape increasingly rewards systems that can combine strength, flexibility, and computational adaptability.

While spectacle draws attention, the underlying technical implications are practical. Robots capable of maintaining stability during aggressive maneuvers are more likely to withstand unpredictable conditions in logistics, manufacturing, and public service roles.

China’s Accelerating Humanoid Push

The A3 unveiling in Shanghai highlights how Chinese robotics firms are compressing development cycles. Public demonstrations are no longer limited to cautious lab experiments but are becoming stress tests in front of live audiences.

This reflects a maturing ecosystem where hardware supply chains, actuator innovation, AI model training, and simulation platforms are advancing in parallel. Companies are increasingly treating humanoids not as research novelties but as future infrastructure systems.

The key question now is not whether humanoids can walk reliably, but whether they can operate dynamically, safely, and economically at scale. AGIBOT’s A3 suggests that the benchmark for physical intelligence is rising, and that athletic capability may become a proxy for broader real-world robustness.

As embodied AI moves from static balance toward high-energy coordination, demonstrations like this serve as early signals of where the industry is heading: toward machines that are not just stable, but physically expressive and adaptable under pressure.

X-Humanoid Launches Embodied Tien Kung 3.0 as Open, Practical Humanoid Platform

Beijing-based X-Humanoid unveils Embodied Tien Kung 3.0, a full-size humanoid robot built on the Wise KaiWu platform, emphasizing openness, interoperability, and real-world industrial deployment.

The Beijing Innovation Center of Humanoid Robotics, also known as X-Humanoid, has unveiled Embodied Tien Kung 3.0, a next-generation general-purpose humanoid platform designed to balance openness with practical deployment. The launch signals a shift in China’s humanoid robotics strategy from demonstration projects toward scalable industrial integration.

Built on X-Humanoid’s proprietary Wise KaiWu embodied AI platform, the full-size robot introduces upgrades across balance control, motion coordination, and autonomous decision-making. The company says Tien Kung 3.0 is the first humanoid of its size to combine high-dynamic whole-body motion control with integrated tactile interaction, positioning it for more demanding real-world tasks.

An Open Architecture Aimed At Accelerating Adoption

A central theme of the Tien Kung 3.0 release is interoperability. The humanoid robotics sector continues to face fragmentation – with closed hardware stacks and incompatible software frameworks slowing commercial rollouts. X-Humanoid is attempting to address those bottlenecks directly.

On the hardware side, the robot includes multiple expansion interfaces that allow developers to integrate different end-effectors and tools without redesigning the base system. The architecture is intended to simplify adaptation across manufacturing, commercial services, and specialized industrial scenarios.

Software openness is equally emphasized. The Wise KaiWu ecosystem provides documentation, toolchains, and a low-code environment designed to reduce development complexity. Compatibility with widely used middleware and communication protocols such as ROS2, MQTT, and TCP/IP allows research institutions and integrators to customize applications without reengineering foundational components.

X-Humanoid has also open-sourced several core technologies tied to the platform, including elements of its motion control framework, world model, embodied vision-language models, cross-embodiment VLA systems, training pipelines, datasets, and simulation libraries. The strategy aims to cultivate a broader developer ecosystem capable of iterating and deploying humanoid applications more quickly.

From High-Torque Hardware To Multi-Robot Intelligence

Beyond openness, the company is positioning Tien Kung 3.0 as a practical industrial machine rather than a research prototype. The robot integrates high-torque joints capable of supporting heavy-load tasks while maintaining balance on uneven terrain. Its multi-degree-of-freedom coordination allows for complex actions such as kneeling, bending, obstacle clearing, and precise manipulation in confined spaces.

Millimeter-level calibration accuracy, enabled through coordinated joint control, is intended to meet industrial precision requirements. The physical platform is paired with the Wise KaiWu AI stack, which establishes a continuous perception-decision-execution loop.

At the cognitive level, world models and vision-language systems interpret scenes and break down complex tasks into executable steps. Real-time navigation and VLA-based control manage obstacle avoidance and fine motor actions. A multi-agent framework enables centralized scheduling and collaboration among multiple robots, signaling a move from single-unit operation to coordinated fleet deployment.
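Centralized scheduling of this kind can be pictured with a small list scheduler. The sketch below is not the Wise KaiWu framework; it assumes tasks have already been broken into steps with known durations, and the function `schedule`, the task names, and the robot identifiers are all hypothetical. Each task goes to whichever robot becomes free first.

```python
import heapq


def schedule(tasks, robot_ids):
    """Assign (name, duration) tasks, in order, to the robot that frees up first.

    Returns a list of (task_name, robot_id, start_time, end_time) tuples.
    """
    if not robot_ids:
        raise ValueError("at least one robot is required")
    # Min-heap of (time the robot becomes free, robot id).
    free_at = [(0.0, rid) for rid in robot_ids]
    heapq.heapify(free_at)
    plan = []
    for name, duration in tasks:
        start, rid = heapq.heappop(free_at)
        end = start + duration
        plan.append((name, rid, start, end))
        heapq.heappush(free_at, (end, rid))
    return plan


plan = schedule([("fetch", 3), ("sort", 2), ("pack", 4)], ["r1", "r2"])
```

A real fleet scheduler would also have to weigh robot location, battery state, and task dependencies, none of which this sketch models.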

Taken together, Embodied Tien Kung 3.0 reflects a broader ambition: transforming humanoid robotics from experimental showcases into interoperable, production-ready systems capable of functioning in commercial and industrial environments at scale.

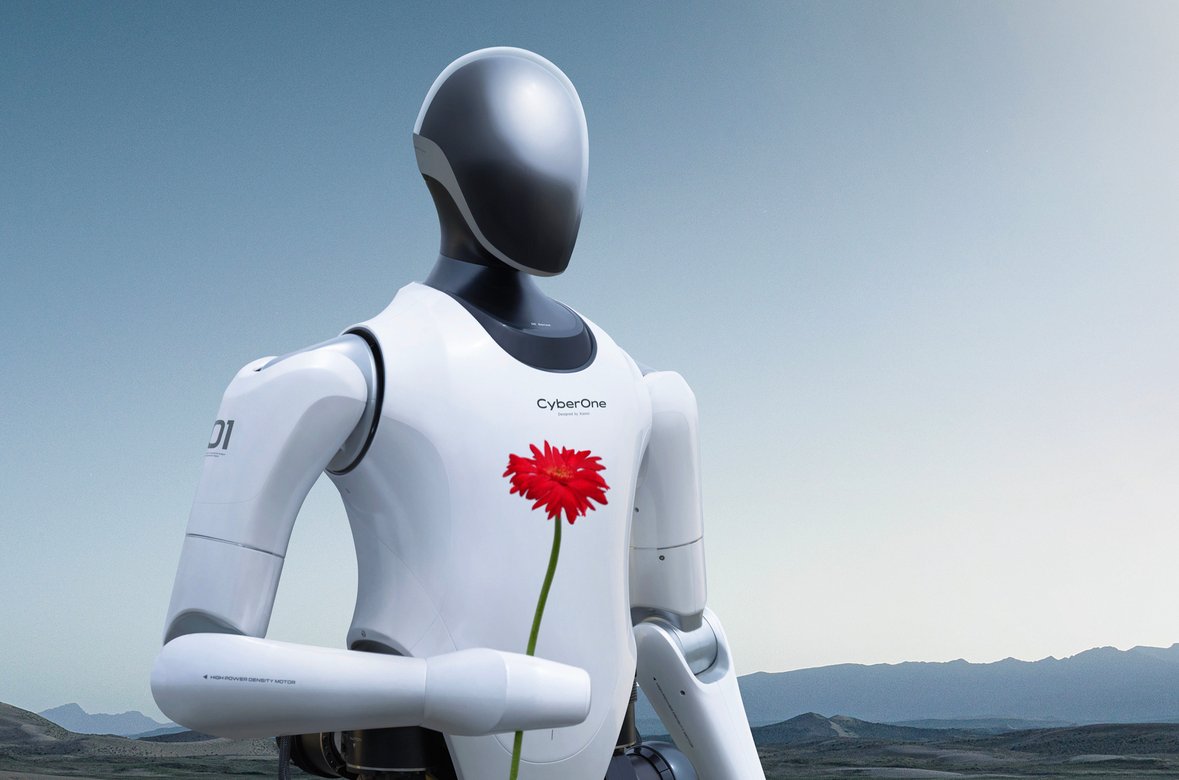

Xiaomi Open-Sources Robotics-0: A Vision-Language-Action Model for Generalist Robots

Xiaomi has released Robotics-0, an open-source Vision-Language-Action (VLA) model designed to enable real-time perception and control for embodied AI systems, marking a strategic push into foundational robotics software.

Xiaomi has released Robotics-0, an open-source Vision-Language-Action (VLA) model intended to power real-time robotic control and perception, underscoring the company’s ambitions beyond consumer electronics and into the foundation layers of physical AI.

The model, made publicly available with pretrained weights and code, integrates multimodal understanding with continuous action generation – an approach increasingly viewed as essential for robots that operate in dynamic, unstructured environments. Unlike models tailored solely for perception or language tasks, Robotics-0 is designed to connect what a robot sees and hears with what it does next.

By open-sourcing the technology, Xiaomi signals its intent to shape the broader research and development ecosystem for generalist robotics, potentially accelerating adoption and experimentation across academia and industry.

Bridging Perception, Language, and Action

At its core, Robotics-0 blends a pretrained Vision-Language Model (VLM) with a Diffusion Transformer (DiT) that generates continuous action sequences conditioned on both visual inputs and language instructions. The combined architecture allows the system to interpret images and textual directives, then produce executable robot control commands in real time.

Xiaomi reports that the model contains 4.7 billion parameters and was trained on a massive dataset that includes approximately 200 million robot trajectory steps alongside over 80 million samples of general vision-language data. The robot data spans tasks collected via teleoperation, including complex bimanual manipulation scenarios such as Lego disassembly and towel folding.

This dual-domain training approach is designed to preserve strong vision-language capabilities while enabling generalizable action generation – allowing the model to interpret instructions and translate them into motion without requiring extensive task-specific retraining.

A central challenge in combining perception and control is inference latency. Robotics-0 addresses this with asynchronous execution, where the robot begins executing one chunk of actions while the system prepares the next. Overlapping inference with execution in this way is intended to keep physical motion continuous even under real-time constraints.
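The general idea of executing one action chunk while the next is being computed can be sketched in a few lines. This is not Xiaomi's released code: `infer_chunk` is a hypothetical stand-in for the model that returns placeholder integers instead of motor commands, and `run_async` is an illustrative name. A single background worker prepares chunk k+1 while the main loop plays back chunk k.

```python
from concurrent.futures import ThreadPoolExecutor


def infer_chunk(step, horizon=4):
    """Stand-in for model inference: returns `horizon` placeholder actions."""
    return [step * horizon + i for i in range(horizon)]


def run_async(num_chunks, execute, horizon=4):
    """Execute chunks in order while the next chunk is inferred in the background."""
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(infer_chunk, 0, horizon)
        for step in range(num_chunks):
            chunk = pending.result()  # blocks only if inference is slower than execution
            if step + 1 < num_chunks:
                # Start preparing the next chunk before executing the current one.
                pending = pool.submit(infer_chunk, step + 1, horizon)
            for action in chunk:
                execute(action)


executed = []
run_async(3, executed.append)
```

In a real controller the next chunk would be conditioned on fresh observations, and the hand-off between chunks would need smoothing; the sketch leaves both out to show only the overlap.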

Early benchmarks suggest strong performance across simulation environments, with high success rates on standard tasks and competitive results on multimodal benchmarks. According to Xiaomi, the model approaches the underlying VLM’s performance on language and visual understanding metrics while delivering robust action generation in simulation.

Implications for Robotics Development

The open-source release comes at a pivotal moment in robotics. Across academia and industry, there is converging interest in foundation models that can generalize across tasks without retraining from scratch for each new scenario. By providing a publicly accessible, pretrained VLA model, Xiaomi is lowering barriers to experimentation and potentially influencing how next-generation embodied AI systems are built.

For robotics developers, Robotics-0 offers several strategic advantages:

- Multimodal integration: The ability to combine vision, language, and action generation facilitates more intuitive human-robot interaction workflows, as instructions can be delivered in natural language and grounded in visual context.

- Scalability: Pretrained weights and public code allow researchers and integrators to start from a shared baseline rather than building models from scratch.

- Real-time execution: By tackling the latency problem head-on through asynchronous action generation, Xiaomi moves toward practical deployment on physical platforms rather than pure simulation.

Open-source foundation models have accelerated progress in fields such as natural language processing and computer vision. Applying the same principle to embodied AI suggests a roadmap where robots can share knowledge structures and learning strategies across domains rather than relying on isolated, task-specific solutions.

However, moving from benchmark performance to robust, commercialized robotics applications remains a substantive challenge. Physical systems must contend with real-world variability – from lighting conditions and sensor noise to mechanical wear and safety constraints – that remains difficult to capture in simulated training.

By releasing Robotics-0, Xiaomi invites the global robotics community to contribute toward refining and extending the model’s capabilities. Partnerships between researchers, integrators, and hardware manufacturers could accelerate the pace at which vision-language-action models power real-world robots.

Whether this open-source push will translate into commercial leadership depends on broader ecosystem adoption and how effectively developers leverage the model across diverse robotic platforms. But in a field where software has often lagged hardware advances, Xiaomi’s move marks an important effort to define the software stack for a new generation of embodied intelligence.

Samsung Display Bets OLED Screens Will Anchor Human-Robot Interaction

Samsung Display is positioning OLED panels as a core interface layer for humanoid robots, arguing that voice alone will not be sufficient for reliable human-machine interaction.

As artificial intelligence systems become increasingly conversational, Samsung Display is making a counterintuitive bet: the future of humanoid robots will still depend on screens.

While voice interfaces have reduced friction in consumer AI products, recognition errors and contextual limitations remain persistent challenges. For humanoid robots operating in homes, hospitals, or public spaces, visual confirmation may prove critical. Samsung Display and domestic rival LG Display are positioning OLED panels as the interface layer that bridges machine cognition and human trust.

The strategy reflects a broader industrial calculation. As robotics moves from industrial arms toward service-oriented humanoids, display manufacturers see a new growth frontier beyond smartphones and televisions.

Screens as a Reliability Layer

Voice control is often framed as the natural interface for AI-powered machines. But real-world deployments reveal gaps in comprehension, ambient noise interference, and user hesitation around relying solely on audio feedback.

Display makers argue that humanoid robots will require multimodal interfaces, combining speech with visual cues that clarify instructions, system states, and user prompts. An OLED screen embedded in a robot’s torso or face could present task confirmations, safety alerts, or contextual information in environments where verbal communication is insufficient.

Samsung Display and LG Display have reportedly been engaging automakers entering robotics and smaller robotics developers alike, seeking early design wins before the humanoid segment scales. The companies are effectively attempting to establish screens as standard components in next-generation robot platforms.

Industry forecasts cited in Korean media estimate the humanoid robotics market could reach 55 trillion won, roughly $40 billion, by 2035. If display adoption approaches 80 percent across assistance, service, and home robots, it would open a significant adjacent revenue stream for display manufacturers.
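The figures in that forecast can be cross-checked with simple arithmetic. The sketch below uses only the numbers quoted above; the function names are illustrative. It derives the exchange rate implied by the two market figures and the share of the forecast market that would consist of display-equipped robots, under the simplifying assumption that adoption is spread evenly across market value. That second number is the value of the robots, not display revenue.

```python
MARKET_KRW = 55 * 10**12  # 55 trillion won, as quoted
MARKET_USD = 40 * 10**9   # roughly $40 billion, as quoted
ADOPTION = 0.80           # 80 percent display adoption, as quoted


def implied_rate(krw, usd):
    """Won per dollar implied by the two market figures."""
    return krw / usd


def value_with_displays(market_usd, adoption):
    """Market value of display-equipped robots, assuming uniform adoption."""
    return market_usd * adoption


rate = implied_rate(MARKET_KRW, MARKET_USD)                  # 1375.0 won per dollar
equipped_usd = value_with_displays(MARKET_USD, ADOPTION)     # about $32 billion
```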

Early Positioning Ahead of Scale

The emphasis on robotics was visible at CES 2026 in Las Vegas, where Samsung Display dedicated substantial floor space to demonstrating OLED applications across multiple robotic form factors. Rather than treating robotics as an experimental sideline, the company framed it as a long-term vertical alongside automotive and mobile displays.

LG Display is pursuing a parallel strategy, aiming to secure supply agreements before humanoid robotics transitions from pilot programs to scaled production. The competitive dynamic mirrors earlier battles in automotive displays, where early supplier relationships often determine long-term dominance.

For display makers facing saturation in smartphones and televisions, humanoid robotics offers a new category with potentially differentiated requirements, including durability, energy efficiency, and unconventional shapes.

The Interface Question in Physical AI

The push by Samsung and LG underscores a deeper debate within robotics: how humans will interact with embodied AI systems.

As generative AI advances rapidly in language and reasoning, the physical manifestation of that intelligence still requires intuitive communication channels. In factories, robots can rely on status lights and dashboards. In homes or public settings, interaction becomes more social and immediate.

Screens provide persistent visibility. Unlike voice, they allow users to review information, confirm instructions, and understand robot intent at a glance. That capability could become particularly important in service roles where clarity and safety are paramount.

If humanoid robots become widespread over the next decade, interface design may prove as commercially consequential as mobility or manipulation hardware. Samsung Display’s investment suggests it sees OLED panels not as optional accessories, but as foundational infrastructure in the emerging human-robot relationship.

RoboDK Introduces CAM Software to Accelerate Deployment of Robotic Machining Cells

RoboDK has launched RoboDK CAM, a software platform designed to automate toolpath generation and robot programming for machining cells, aiming to reduce integration time and engineering complexity.

RoboDK has introduced RoboDK CAM, a new software platform aimed at simplifying and accelerating the deployment of robotic machining cells by automating toolpath generation, simulation, and robot code creation.

The release targets a persistent bottleneck in manufacturing automation: the complexity of programming industrial robots for machining tasks. Traditionally, deploying a robotic machining cell requires specialists to manually write and test vendor-specific robot code, a process that can take weeks and often demands deep expertise in both robotics and computer-aided manufacturing.

RoboDK CAM seeks to streamline that workflow by generating robot programs directly from CAD designs and digital simulations, reducing the need for manual coding and lowering integration costs.

Bridging CAD and Industrial Robots

The software supports a range of machining operations including milling, drilling, deburring, cutting, and additive manufacturing. Within a unified environment, users can create advanced toolpaths, simulate full material-removal processes, and perform collision detection before deploying code to physical robots.

A key feature is the ability to move between 3-axis and 5-axis machining tasks without switching platforms, allowing manufacturers to consolidate programming and simulation steps. The system also provides stock tracking to visualize material removal throughout the machining cycle.

RoboDK offers the software in two configurations. A standalone version enables users to manage the entire workflow – from toolpath generation to robot simulation and code export – within RoboDK’s interface. An integrated version connects with established CAD/CAM systems such as Fusion 360, SolidWorks, and Mastercam through add-ins, extending existing workflows to industrial robots without requiring a separate programming environment.

By embedding robotic simulation within familiar design platforms, the company is positioning the software as an incremental extension rather than a wholesale replacement of existing machining processes.

Reducing Engineering Overhead

According to early testers cited by the company, RoboDK CAM can cut testing time by as much as 40 percent, depending on the complexity of the automation setup. More broadly, the platform aims to shrink deployment timelines from weeks of programming and troubleshooting to a significantly shorter configuration cycle supported by virtual validation.

In conventional machining automation, engineers must iteratively test reach, orientation, collision risks, and tool access within the physical cell. Errors discovered late in the process can lead to costly downtime and rework. By enabling full simulation before deployment, RoboDK CAM shifts more of that validation upstream.
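What checking reach before deployment means can be shown with a deliberately simplified model. The sketch below does not use RoboDK's API; it assumes a hypothetical two-link planar arm with its base at the origin, and the function `unreachable_points` and the link lengths are illustrative. A toolpath point is reachable only if its distance from the base lies between the difference and the sum of the link lengths.

```python
import math


def unreachable_points(toolpath, link1_m, link2_m):
    """Return indices of toolpath points a two-link planar arm cannot reach.

    toolpath is a list of (x, y) positions in metres relative to the arm base.
    """
    inner = abs(link1_m - link2_m)  # closest the tool tip can get to the base
    outer = link1_m + link2_m       # farthest the tool tip can extend
    bad = []
    for index, (x, y) in enumerate(toolpath):
        distance = math.hypot(x, y)
        if distance < inner or distance > outer:
            bad.append(index)
    return bad


path = [(0.6, 0.3), (1.0, 0.5), (0.05, 0.0)]
problems = unreachable_points(path, link1_m=0.5, link2_m=0.4)  # [1, 2]
```

A six-axis industrial arm adds joint limits, tool orientation, and collision geometry to this test, which is why catching such failures in simulation rather than in the physical cell saves time.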

For manufacturers, the economic case centers on faster automation rollouts and reduced disruption to production lines. For system integrators, it translates into shorter project cycles and lower engineering labor requirements.

Software as the Automation Multiplier

The launch reflects a broader shift in industrial robotics: as hardware becomes more standardized and accessible, software increasingly determines deployment speed and flexibility.

Robotic machining, in particular, has gained traction as manufacturers seek alternatives to traditional CNC systems, leveraging industrial arms for large-format parts or complex geometries. But adoption has been limited by programming complexity and integration overhead.

By automating code generation and embedding simulation directly into design workflows, RoboDK is attempting to lower the barrier to entry for robotic machining cells.

The competitive landscape includes established CAD/CAM vendors and robot manufacturers offering proprietary programming tools. RoboDK’s strategy hinges on vendor-agnostic compatibility and its existing base of users who rely on its simulation engine for offline robot programming.

As factories push toward more flexible and digitally integrated production environments, tools that connect design intent directly to robotic execution could play a growing role in accelerating automation across mid-sized and large manufacturers.

Mobileye Secures Major Indian Partnership and Expands Into Robotics

Mobileye deepens its push into physical AI with a major ADAS partnership in India while advancing its robotics strategy through the planned acquisition of Mentee Robotics.

Autonomous driving pioneer Mobileye is widening its footprint across two fast-growing frontiers: advanced driver assistance systems in emerging markets and humanoid robotics powered by physical AI.

The company has secured a significant partnership in India while continuing to lay the groundwork for a broader robotics strategy announced earlier this year. Together, the moves underscore Mobileye’s ambition to position itself not just as a vehicle autonomy leader, but as a foundational player in machines that operate in the physical world.

Strengthening Its Position in India’s Auto Market

Indian automaker Mahindra & Mahindra has selected Mobileye’s SuperVision and Surround ADAS technologies for its upcoming vehicle lineup. The collaboration brings Level 2+ advanced driver assistance capabilities – including surround perception, lane support, and driver monitoring – to next-generation models aimed at a rapidly modernizing market.

India represents one of the fastest-growing automotive regions globally, where regulatory standards and consumer expectations around safety are evolving quickly. For Mobileye, the deal extends its global reach beyond established markets in Europe and North America and into a region where demand for intelligent mobility systems is accelerating.

The agreement also reinforces Mobileye’s modular technology approach. Its SuperVision platform integrates cameras, radar, and advanced AI-based perception to deliver scalable driver assistance features that can later evolve toward higher levels of autonomy. As automakers compete to differentiate through software-defined capabilities, Mobileye’s stack positions it as a long-term systems partner rather than just a component supplier.

From Vehicle Autonomy to Physical AI

While expanding in automotive, Mobileye is simultaneously advancing its robotics ambitions. Earlier this year, the company announced plans to acquire Mentee Robotics in a deal valued at approximately $900 million. The acquisition is intended to combine Mobileye’s autonomy and safety expertise with Mentee’s vertically integrated humanoid platform.

Mobileye has framed the move as a natural evolution of its autonomy stack. The perception, mapping, planning, and safety systems originally built for vehicles can, in principle, be adapted to humanoid robots navigating human environments. Both domains require real-time decision-making under uncertainty, rigorous safety validation, and edge-compute efficiency.

The robotics push aligns with a broader industry shift toward “physical AI” – systems that move beyond digital intelligence into machines that interact directly with the real world. Executives have emphasized that autonomy in cars and autonomy in humanoids share foundational technical challenges: multimodal perception, world modeling, intent-aware reasoning, and safe actuation.

By combining a strengthened automotive presence in high-growth markets like India with a parallel bet on humanoid robotics, Mobileye is effectively hedging its future across two complementary sectors. If successful, the strategy could position the company at the center of both autonomous mobility and next-generation embodied AI systems.

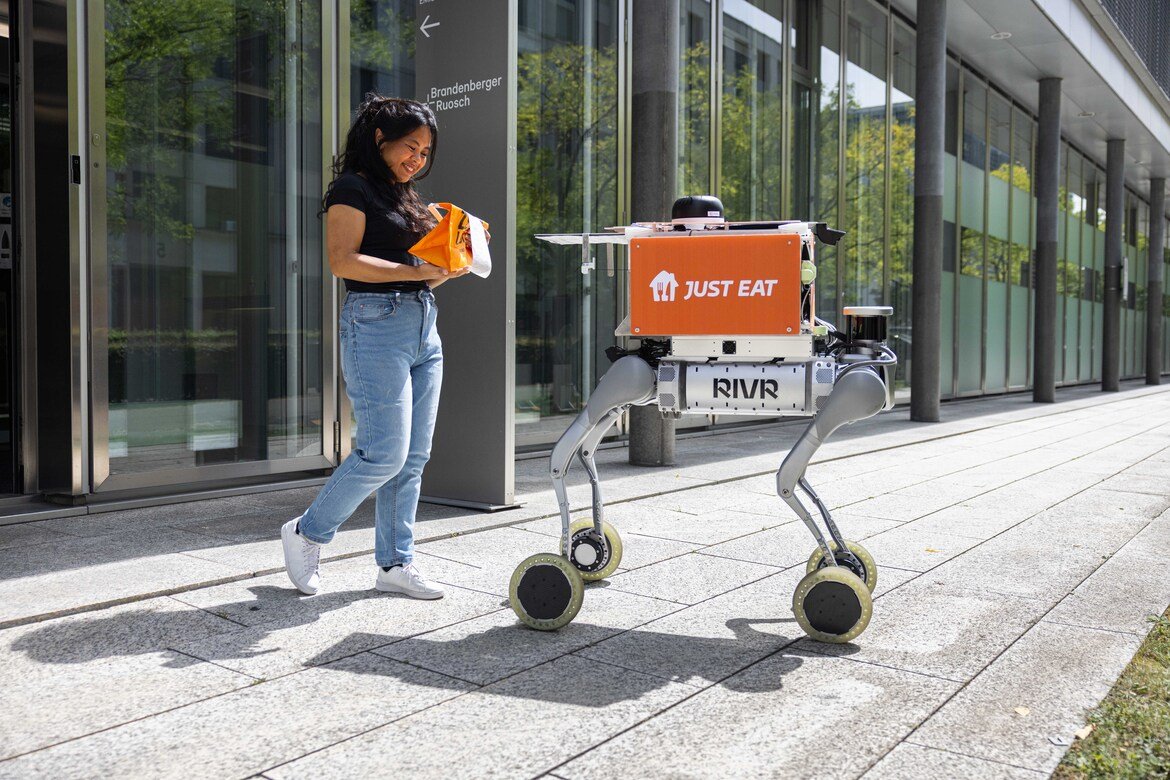

Just Eat Expands UK Robot Delivery Trial as Competition with Uber Eats Intensifies

Just Eat Takeaway.com has launched ground robot delivery trials in Bristol and Milton Keynes, following Uber Eats’ recent rollout in Leeds, as UK platforms test physical AI for last-mile logistics.

Just Eat Takeaway.com has launched a UK trial of autonomous ground delivery robots in Bristol and Milton Keynes, marking its most visible step yet into physical AI-driven last-mile logistics.

The move places the Amsterdam-listed company alongside rival Uber Eats, which began deploying delivery robots in Leeds late last year through a partnership with Starship Technologies. Together, the pilots signal a broader push among food delivery platforms to test automation as order volumes fluctuate and labor costs remain under pressure.

The initial trial comes ahead of the Valentine’s Day weekend, a peak ordering period that companies often use to stress-test operational capacity. Just Eat said the addition of robotic couriers is intended to increase delivery throughput during high-demand windows.

From Pilot to Multi-City Testing

Just Eat’s UK rollout builds on earlier experimentation in Switzerland, where the company conducted a pilot program that completed close to 1,000 robot deliveries. The group has also tested drone-based delivery in Ireland, indicating a multi-modal approach to automation.

In Bristol, the company is working with Delivers.AI, while the Milton Keynes deployment is being conducted with RIVR, a robotics firm focused on doorstep automation. The robots operate at street level, transporting meals from local restaurants directly to customers’ homes.

“We’re always innovating to improve the delivery experience for our customers,” said Mert Öztekin, chief technology officer at Just Eat. He described the robotics trial as part of a broader effort to collaborate with specialist partners while maintaining service quality.

Executives at both robotics partners framed the initiative as a step toward scaling autonomous delivery across European cities. RIVR’s chief executive, Marko Bjelonic, said automating the final leg to the customer’s doorstep is aimed at reducing friction in the delivery process.

Economics of the Last Mile

Food delivery remains one of the most logistically complex consumer services, with thin margins and heavy reliance on gig-economy couriers. Ground robots offer a potential path to reducing labor dependency for short-distance orders, particularly in dense urban neighborhoods.

However, autonomous delivery systems face practical constraints. They typically operate within limited geographic zones, require regulatory approval, and must navigate pedestrian traffic safely. For now, robots supplement rather than replace human couriers.

In the case of Uber Eats, customers in some UK cities have already encountered robot deliveries that cannot accept tips, underscoring how automation alters the economic model of gig-based platforms.

For delivery companies, the appeal lies in predictability. Robots do not require shift scheduling, surge pricing, or variable compensation. But they introduce new costs related to hardware, maintenance, remote monitoring, and fleet management.

Physical AI Moves Into Consumer Services

The expansion of robotic delivery reflects a broader trend in physical AI: systems that were once confined to research labs or industrial warehouses are moving into consumer-facing environments.

Unlike warehouse robotics, sidewalk delivery requires machines to operate in semi-structured public spaces. That makes perception, obstacle avoidance, and real-time route adaptation critical capabilities.

By testing in cities such as Bristol and Milton Keynes, Just Eat is effectively evaluating whether ground robotics can integrate into existing urban infrastructure without degrading service reliability.

The competitive dynamic with Uber Eats adds urgency. As platforms compete on speed, cost, and novelty, automation may become a differentiator in markets where brand loyalty is limited and switching costs are low.

For now, the UK trials remain limited in scope. But if operational metrics prove favorable, food delivery could become one of the most visible public use cases for everyday robotics in Europe.

CynLr Launches Object Intelligence Platform to Enable On-the-Fly Robot Learning

Indian robotics startup CynLr has unveiled its Object Intelligence platform, designed to let robots learn and manipulate previously unseen objects in seconds without offline retraining.

CynLr, a Bengaluru-based deep technology company, has introduced what it describes as a commercially ready Object Intelligence platform aimed at addressing one of robotics’ most persistent limitations: the inability to adapt quickly to unfamiliar objects.

After five years of research and development, the company unveiled its Object Intelligence, or OI, system on Wednesday, positioning it as a foundational layer that allows robots to learn new manipulation tasks within seconds rather than through months of dataset training and offline reprogramming.

The announcement comes as manufacturers globally search for more flexible automation systems capable of handling high product variability without constant retooling. While conventional industrial robots excel in structured, repetitive environments, they often struggle when confronted with new shapes, reflective surfaces, or irregular materials.

Learning Through Interaction

At the core of CynLr’s platform is a vision-driven perception system called CLX. Instead of relying solely on large pre-labeled datasets, the system analyzes an unfamiliar object in real time, breaking it down into geometric structure, texture, reflectance properties, and potential grasp points. That information informs an immediate trial-and-error interaction process.

According to the company, robots equipped with the platform can attempt to pick and manipulate previously unseen objects within 10 to 15 seconds. Each grasp attempt becomes a feedback event, allowing the system to recalibrate dynamically without being sent back for retraining.
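The idea of treating each grasp attempt as a feedback event can be sketched as a simple score-update loop. This is not CynLr's algorithm, which the company has not detailed here; the function `learn_grasp`, the candidate names, and the update rule are all assumptions made for illustration. The robot tries the highest-scoring candidate grasp point, nudges that score toward 1 on success or 0 on failure, and stops at the first success.

```python
def learn_grasp(candidates, attempt, max_tries=10, step=0.5):
    """Try grasp candidates, updating a per-candidate score after each attempt.

    attempt(candidate) must return True on a successful grasp.
    Returns (chosen candidate or None, number of tries, final scores).
    """
    if not candidates:
        raise ValueError("at least one grasp candidate is required")
    scores = {c: 0.5 for c in candidates}  # neutral prior for every candidate
    for tries in range(1, max_tries + 1):
        choice = max(scores, key=scores.get)  # ties go to the earliest candidate
        success = attempt(choice)
        target = 1.0 if success else 0.0
        scores[choice] += step * (target - scores[choice])
        if success:
            return choice, tries, scores
    return None, max_tries, scores


best, tries, scores = learn_grasp(["rim", "handle", "body"], lambda c: c == "handle")
```

Here the stand-in `attempt` function succeeds only on the handle, so the loop fails once on the rim, lowers its score, and succeeds on the second try.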

Founder Gokul NA described the shift as a transition from rigid programming to adaptive cognition. “The last fifty years of robotics were about controlled environments,” he said in a statement. “The next fifty years will be about machines that observe, reason and adapt.”

The company draws inspiration from biological sensorimotor learning, arguing that robots must actively probe their environment to build understanding rather than passively interpret static visual data.

Addressing the Limits of Physical AI

Handling transparent, reflective, or irregular objects remains a central challenge in physical AI. Items such as glass bottles, plastic-wrapped packages, or metallic components can confuse traditional vision systems that depend on predictable visual cues.

CynLr contends that its approach reduces reliance on massive training datasets and data center infrastructure by enabling closed-loop learning directly at the point of interaction. The platform is designed to be form-factor agnostic, supporting industrial robotic arms, multi-arm configurations, and humanoid systems.

The company has developed two primary robotic products, CyRo and CyNoid, and plans to release an open hardware platform called Mantroid. The goal is to allow customers to build custom robotic configurations rather than being limited to fixed humanoid designs.

Nikhil, the company’s co-founder overseeing go-to-market strategy, argued that perception remains the bottleneck in physical intelligence. He contrasted large vision-language models with what he described as “vision force models”, systems that integrate sensing and physical feedback rather than depending primarily on text-based training paradigms.

From Lab to Factory Floor

CynLr says its technology is already being evaluated by global manufacturing firms, including luxury automotive brands and semiconductor automation companies. Potential use cases include complex assembly tasks and maintenance operations in semiconductor fabrication facilities.

In scenarios where automation has historically been infeasible due to variability, the ability to switch tasks without mechanical retooling could prove significant. The company envisions what it calls a “software-defined” factory floor, where machines transition between product lines through software updates rather than hardware changes.

For existing tasks, configuration changes can reportedly be completed within an hour, while entirely new task training may take from one week to several months depending on complexity. In many assembly processes where no traditional automation solution exists, even partial adaptation could represent a step change.

CynLr is pursuing additional funding to expand production capacity, with an ambition to reach one robot per day by 2028. The company has also established research and business operations in Switzerland and the United States as it targets international markets.

The broader question for the robotics industry is whether adaptive perception systems such as Object Intelligence can deliver consistent reliability in production environments. As manufacturers seek flexible automation capable of managing SKU proliferation and shorter product cycles, the ability to learn in situ rather than in advance could mark a meaningful shift in how physical AI systems are deployed.

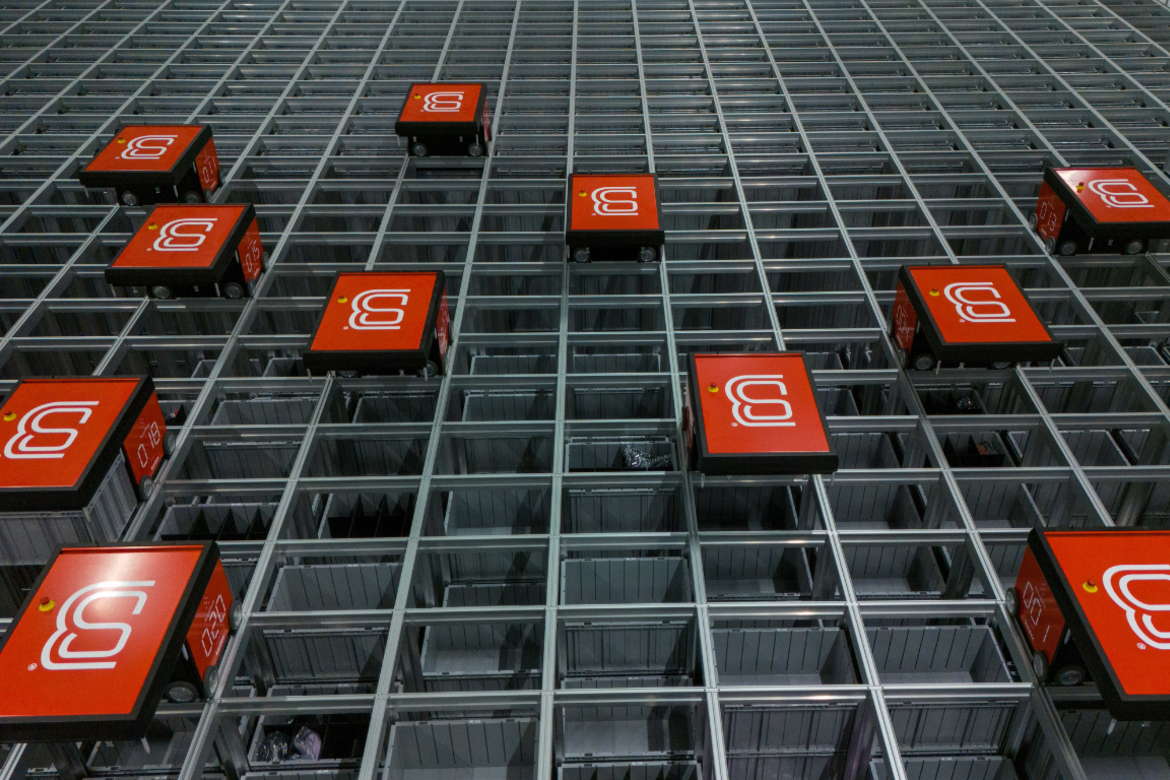

Bleckmann Deploys Robot-Driven AutoStore System to Accelerate Fashion Fulfillment

Logistics provider Bleckmann has launched an automated storage and retrieval system in the Netherlands using radio-controlled robots, aiming to reduce labor dependency and speed up fashion e-commerce fulfillment.

Bleckmann, the supply chain specialist serving fashion brands such as Superdry and Gymshark, has activated a new automated storage and retrieval system at its Almelo site in the Netherlands, marking a significant step in the company’s shift toward robotics-driven warehouse operations.

The system is built around Kardex’s AutoStore technology, a high-density grid in which radio-controlled robots retrieve storage bins and deliver them to human operators or automated packing lines. By adopting a goods-to-person model, Bleckmann is reducing the need for warehouse staff to walk between picking locations, compressing fulfillment times and lowering operational friction.

The deployment comes as European logistics providers face persistent labor shortages and mounting pressure from fashion retailers to shorten delivery windows without expanding physical warehouse footprints.

High-Density Storage and Faster Picking

AutoStore systems rely on fleets of small robots that travel across the top of a cubic storage grid, lifting bins from stacked columns and transporting them to designated workstations. According to Bleckmann, the installation in Almelo can store the same inventory volume in as little as one-seventh of the space of a conventional picking floor.

The compact layout allows for higher SKU density and multi-client storage within a single grid, reducing the risk of stock imbalances while increasing throughput. Bleckmann executives say the system automatically prioritizes frequently ordered items, making high-demand products more accessible during peak sales periods.
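One simple way such prioritization can emerge in a stacked grid is sketched below. It assumes, for illustration only, that a retrieved bin is returned to the top of its column; the article does not confirm that this is how the Almelo system works, and the SKU names and `retrieve` helper are hypothetical.

```python
def retrieve(column, sku):
    """Fetch `sku` from a stacked column (index 0 is the top) and put it back on top.

    Returns how many bins had to be lifted out of the way first.
    """
    depth = column.index(sku)
    column.insert(0, column.pop(depth))
    return depth


# Hypothetical single column, listed from top to bottom.
column = ["socks", "jacket", "scarf", "hoodie"]
orders = ["hoodie", "scarf", "hoodie", "hoodie", "socks", "hoodie"]

digs = [retrieve(column, sku) for sku in orders]
print(digs)    # bins lifted per order
print(column)  # final stacking order, top first
```

Under this assumption the frequently ordered item drifts toward the top of the stack, so later retrievals of it require fewer lifts, while rarely ordered items sink toward the bottom.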

Tom Wijlens, chief operating officer for Netherlands North at Bleckmann, said the system can be programmed ahead of high-volume events such as Black Friday to accelerate access to top-selling products. The goal is to extend order cut-off times for next-day delivery without increasing labor intensity.

From the moment items enter the grid to final packing, the company aims to minimize manual handling. According to Kevin Paindeville, director of warehouse solutions and innovation at Bleckmann, each product is physically handled only once before shipment, reducing both error rates and product damage.

Robotics as a Response to Labor Scarcity

The move reflects a broader structural shift in European e-commerce logistics. Warehousing has historically depended on large numbers of temporary workers during peak periods. Automation offers a way to stabilize operations amid workforce shortages while improving predictability.

Bleckmann has framed the Almelo installation as part of a longer-term automation strategy designed to address labor scarcity and create a more scalable fulfillment model. Unlike traditional rack storage, AutoStore systems can expand incrementally by adding robots or grid modules, allowing capacity increases without major facility redesign.

Energy efficiency also factors into the business case. The company notes that multiple robots operating simultaneously consume relatively low power, and because the grid system does not require constant lighting across large picking floors, overall energy usage can decline.

Competitive Pressure in Fashion Logistics

Fashion and lifestyle brands are among the most demanding e-commerce clients, managing high SKU turnover, seasonal peaks, and return-heavy order flows. Faster fulfillment and later order cut-offs can translate directly into competitive advantage for retailers.

By investing in robotics infrastructure, Bleckmann is positioning itself as a technology-enabled logistics partner rather than a conventional warehouse operator. The Almelo deployment may also serve as a blueprint for similar installations across its European network.

Automated storage systems have become increasingly common in grocery and electronics logistics, but adoption in fashion has lagged due to SKU complexity and variability. Bleckmann’s rollout signals that robotics-based goods-to-person systems are maturing to handle the demands of apparel fulfillment at scale.

As supply chains face tighter margins and higher customer expectations, warehouse robotics is shifting from efficiency enhancement to operational necessity. Bleckmann’s latest deployment illustrates how automation is becoming embedded in the infrastructure of European fashion logistics rather than layered on as an optional upgrade.

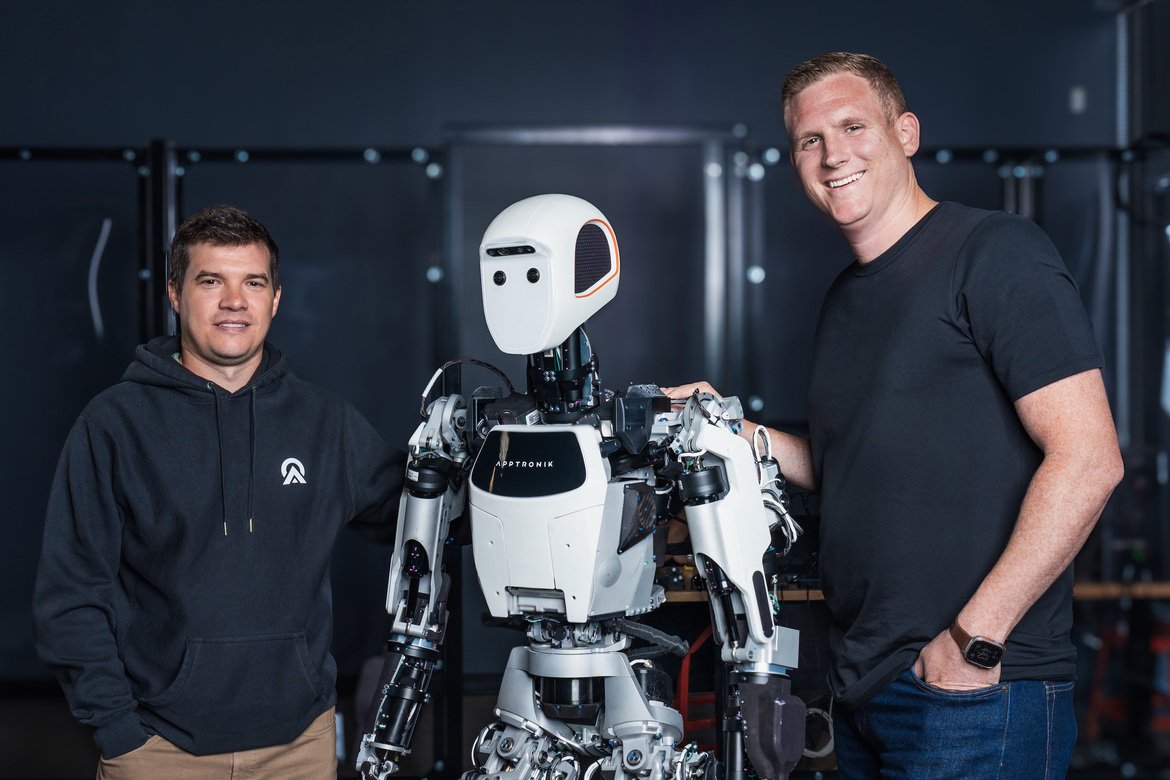

Apptronik Raises $935 Million to Scale Apollo Humanoid Robot Production

Apptronik has closed a $520 million extension to its Series A, bringing the total round to more than $935 million as it accelerates production and deployment of its Apollo humanoid robot.

Apptronik has secured more than $935 million in Series A financing, positioning the Austin-based startup among the most heavily funded humanoid robotics companies globally as it moves from pilot programs toward scaled production.

The company announced a $520 million extension to its previously oversubscribed $415 million Series A round, bringing the round’s total to more than $935 million, just short of $1 billion. The extension includes participation from existing backers such as B Capital, Google, Mercedes-Benz and PEAK6, alongside new investors including AT&T Ventures, John Deere and the Qatar Investment Authority.

The size of the raise, particularly at what the company described as a significantly higher valuation than its initial Series A, reflects intensifying investor conviction that humanoid robotics is entering a commercialization phase rather than remaining a research-driven category.

From Demonstration to Deployment

Apptronik plans to use the capital to ramp up production of Apollo, its flagship humanoid robot, and expand commercial and pilot deployments across manufacturing and logistics environments. The company also intends to invest in dedicated training and data collection facilities, underscoring how embodied AI systems increasingly depend on large-scale operational data to refine performance.

Apollo is designed for physically demanding industrial tasks such as transporting components, sorting materials and kitting operations. Rather than targeting fully autonomous environments, the company emphasizes collaborative deployment alongside human workers.

The funding round suggests that investors view industrial humanoids as an emerging labor infrastructure layer, particularly in sectors facing workforce shortages and rising automation demands.

Strategic Backers Signal Industrial Alignment

The investor roster highlights Apptronik’s positioning at the intersection of robotics and heavy industry. Mercedes-Benz and GXO Logistics have already partnered with the company to explore deployments in manufacturing and supply chain operations, while Jabil has engaged in pilot programs.

The inclusion of John Deere and AT&T Ventures in the extension round broadens the strategic footprint into agriculture and telecommunications infrastructure, sectors that could benefit from adaptable, mobile robotic labor.

Apptronik also maintains a high-profile partnership with Google DeepMind aimed at integrating advanced AI models into its humanoid systems. The collaboration is intended to bring next-generation embodied intelligence into Apollo’s control stack, reflecting a broader industry convergence between large-scale AI model developers and hardware-focused robotics firms.

A Crowded but Capital-Intensive Race

Humanoid robotics has become one of the most capital-intensive segments of the broader robotics industry. Startups and established players alike are racing to prove that general-purpose, bipedal machines can deliver consistent economic value in structured work environments.

The nearly $1 billion raised by Apptronik places it in the top tier of humanoid funding rounds. The scale of investment underscores both the technical complexity of building reliable humanoids and the expectation that early leaders could capture significant market share if they reach production scale first.

Unlike industrial robotic arms, humanoids must integrate advanced locomotion, manipulation, perception and safety systems into a single mobile platform. Scaling manufacturing while maintaining reliability presents additional challenges that require substantial capital investment.

Commercialization as the Next Test

The central question for Apptronik and its peers is no longer whether humanoid robots can perform controlled demonstrations, but whether they can operate continuously in real-world industrial settings with acceptable uptime, safety and cost profiles.

By allocating new capital toward production capacity and deployment infrastructure, Apptronik is signaling that it believes the market is ready for broader rollout. The company has indicated that a new version of Apollo is expected in 2026, suggesting ongoing hardware and software iteration even as initial deployments expand.

Investor enthusiasm indicates confidence that embodied AI is transitioning from experimental prototypes to enterprise tools. Whether that optimism translates into sustained revenue growth will depend on how effectively companies like Apptronik convert pilot projects into long-term industrial contracts.

For now, the funding milestone reinforces a broader industry narrative: humanoid robotics is no longer a speculative frontier but an increasingly structured race to scale.

Harvard Engineers Develop Rotational 3D Printing Method for Programmable Soft Robots

Harvard researchers have introduced a multimaterial 3D printing technique that enables soft robots to bend and morph in predictable ways, potentially simplifying fabrication for surgical and assistive applications.

Engineers at Harvard University have developed a new 3D printing method that enables soft robots to bend and change shape in precisely programmed ways when inflated, offering a streamlined alternative to conventional mold-based fabrication.

The technique, described in a study published in Advanced Materials, relies on rotational multimaterial 3D printing to embed hollow pneumatic channels directly into flexible structures. The approach could simplify how soft robotic actuators and grippers are designed and manufactured, particularly for applications requiring biocompatibility and fine motion control.

Soft robotics has drawn sustained interest from healthcare, manufacturing, and assistive technology sectors because flexible materials are safer for interacting with humans and delicate objects. But controlling how these materials deform under pressure remains a persistent engineering challenge.

Printing Motion Into the Material

The research was led by graduate student Jackson Wilt and former postdoctoral fellow Natalie Larson in the laboratory of Jennifer Lewis, the Hansjörg Wyss Professor of Biologically Inspired Engineering at Harvard’s John A. Paulson School of Engineering and Applied Sciences.

At the core of the advance is a dual-material printing nozzle capable of extruding two substances simultaneously. The system produces filaments with a polyurethane outer shell and a removable inner core made from a poloxamer gel, a material commonly found in cosmetic products.

As the nozzle rotates during printing, it precisely controls the orientation and placement of the inner gel channel within each filament. After the outer shell solidifies, the inner gel is washed away, leaving behind hollow, pressurizable pathways. When air is pumped into these channels, the structures bend or twist in predetermined directions.

“We use two materials from a single outlet, which can be rotated to program the direction the robot bends when inflated,” Wilt said in a statement released by Harvard. “Our goals are aligned with creating soft, bio-inspired robots for various applications.”

The ability to encode actuation behavior directly during printing eliminates the need for casting molds, layering materials, and manually embedding pneumatic networks, steps that have traditionally limited customization and scalability.

From Flat Patterns to Functional Grippers

To demonstrate the method’s flexibility, the team printed intricate structures including a spiral flower-like actuator and a five-fingered gripper with articulated “knuckles”. Each device was produced in a continuous printing process, with motion characteristics defined by nozzle rotation speed, filament geometry, and flow rate.

By adjusting these parameters, researchers can determine how much a structure bends, in which direction, and under what level of internal pressure. The result is a programmable mechanical response embedded at the fabrication stage.

The broader implication is a shift toward more automated and customizable soft robot production. Traditional fabrication often requires multiple casting steps and careful alignment of pneumatic channels between layers. In contrast, the Harvard approach prints structure and functionality simultaneously.

“In this work, we don’t have a mold,” Wilt noted. “We print the structures, we program them rapidly, and we’re able to quickly customize actuation.”

Implications for Medical and Assistive Robotics

Soft robots are particularly attractive in surgical and rehabilitation contexts, where rigid devices can pose safety risks. Flexible actuators that conform to tissue or assist human motion must deliver controlled, repeatable movement under constrained conditions.

By enabling predictable shape morphing through embedded channels, the rotational multimaterial technique could support next-generation surgical tools, wearable assistive devices, or adaptive grippers for delicate manufacturing tasks.

The work builds on prior innovations from the Lewis lab in multimaterial and helical 3D printing, where similar approaches were used to fabricate artificial muscle-like structures. The new method extends that foundation toward more complex, integrated soft robotic systems.

The research received federal funding support from the National Science Foundation through Harvard’s Materials Research Science and Engineering Center and from the U.S. Army Research Office’s MURI program.

As soft robotics continues to mature, advances in fabrication may prove as consequential as breakthroughs in control algorithms. By embedding motion logic directly into printed materials, Harvard’s approach suggests a future in which the mechanical intelligence of soft machines is designed at the nozzle level rather than assembled afterward.

Samsung Unveils AI Robot Vacuum to Challenge Chinese Dominance in Korea

Samsung Electronics has launched a new AI-powered robot vacuum in South Korea, aiming to regain market share from Chinese rivals that currently hold more than 70 percent of the domestic segment.

Samsung Electronics on Wednesday introduced a new AI-powered robot vacuum cleaner in South Korea, escalating competition in a domestic market that has increasingly been captured by Chinese manufacturers.

The product launch places Samsung in direct contention with Roborock, which industry data shows controls more than half of Korea’s robot vacuum segment. Chinese brands Ecovacs and Dreame follow with double-digit shares, leaving Korean incumbents such as Samsung and LG Electronics with a combined market position below 30 percent.

The imbalance underscores how quickly Chinese appliance makers have leveraged cost efficiency and rapid iteration to outpace traditional electronics leaders in smart home hardware. Samsung’s new Bespoke AI Steam model represents an attempt not only to introduce upgraded features, but to reframe competition around service infrastructure and platform integration.

Hardware Upgrades Meet AI Navigation

At a press briefing in Seoul, Samsung executives positioned the new vacuum as a step-change in performance. The device includes a 10-watt suction motor, roughly doubling the power of prior iterations, designed to improve fine dust and hair collection.

Beyond raw suction, Samsung emphasized edge and corner cleaning. A pop-out mop mechanism extends flush to walls for wet cleaning, while a deployable side brush reaches into tight corners. The unit can also cross thresholds up to 45 millimeters using an updated wheel system, a feature aimed at accommodating Korean apartment layouts with raised door frames and floor transitions.

The most notable changes are in perception. Equipped with RGB camera sensors and infrared LEDs, the vacuum uses upgraded AI-based object and spatial recognition to detect obstacles and even transparent liquids on the floor. That capability allows the device to either avoid spills or target them for cleaning, depending on user settings.

In effect, Samsung is aligning its robot vacuum more closely with broader trends in physical AI: combining visual perception, environmental mapping, and real-time decision-making to handle varied home conditions with less manual oversight.

Security and Service as Differentiators

Samsung’s strategy extends beyond cleaning performance. The company has embedded its Knox Matrix and Knox Vault security platforms into the device, highlighting data protection at a time when connected home appliances increasingly gather spatial and behavioral data.

Executives also stressed after-sales support as a competitive lever. Unlike many Chinese brands that compete primarily on price and hardware specifications, Samsung is leaning into installation services, regular maintenance, and nationwide customer support infrastructure.

The Bespoke AI Steam model includes a steam clean station that sterilizes mop pads at 100 degrees Celsius and a self-cleaning system for debris removal. An automatic water supply and drainage mechanism reduces manual intervention, reinforcing the product’s positioning as a high-end appliance rather than a standalone gadget.

Pricing reflects that premium approach. The device will retail between 1.41 million won and 2.04 million won depending on configuration. To broaden accessibility, Samsung is offering the product through its subscription-based AI club program, spreading payments over time instead of requiring a large upfront purchase.

A Broader Battle in Smart Home Robotics

Samsung’s renewed push highlights a structural shift in the robot vacuum market. Once considered a niche convenience device, robot vacuums have become one of the most commercially mature categories of home robotics. Market leadership has gravitated toward companies capable of rapid hardware iteration combined with increasingly sophisticated navigation software.

Chinese manufacturers have capitalized on that dynamic, using scale and cost advantages to capture more than 70 percent of the Korean market. Samsung’s response suggests that domestic players believe differentiation will depend on ecosystem integration, security assurances, and service reliability rather than pure hardware metrics.

“Our target is to become No. 1 in the domestic market,” Lim Sung-taek, head of Korea sales and marketing at Samsung Electronics, said at the launch event.

Whether Samsung can reverse market share trends will depend on how consumers weigh brand trust and long-term support against price competitiveness. But the launch signals that South Korea’s largest electronics company views home robotics not as a peripheral category, but as a strategic front in the broader AI-enabled appliance race.

Preorders begin Wednesday, with nationwide retail availability scheduled for early March.

Boston Dynamics CEO to Step Down as Hyundai Signals Shift Toward Commercial Scale

Boston Dynamics CEO Robert Playter will step down, a move that coincided with a sharp rise in Hyundai Motor shares and renewed focus on accelerating commercialization of the robotics business.

Robert Playter, chief executive of Boston Dynamics, will step down from his role, the company said this week, triggering investor speculation that parent company Hyundai Motor Group intends to accelerate the commercialization of its robotics portfolio.

The leadership change comes at a pivotal moment for Hyundai, which acquired a controlling stake in the Waltham, Massachusetts-based robotics firm in 2021. Shares of Hyundai Motor rose nearly 6 percent in Seoul trading following the announcement, while affiliate Kia also gained, reflecting market expectations that the automaker may move more aggressively to scale its robotics operations.

From Research Focus to Industrial Rollout

Analysts in Seoul suggested the stock reaction was tied directly to the management transition. Some characterized Playter’s tenure as heavily research and development oriented, focused on advancing the technical capabilities of platforms such as the Atlas humanoid and the quadruped Spot robot.

Hyundai, by contrast, appears increasingly focused on deployment. In January, the automaker said it plans to introduce humanoid robots produced by Boston Dynamics at its U.S. manufacturing plant in Georgia. The move signals a shift from high-profile demonstrations toward practical integration within automotive production lines.

Boston Dynamics has built its reputation on dynamic mobility, dexterous manipulation, and advanced control systems. But commercial success depends less on viral videos and more on reliability, safety, and cost efficiency inside real factories and logistics environments. Hyundai’s industrial footprint provides a natural proving ground.

Recent reports that Boston Dynamics’ quadruped robots have been deployed at a nuclear power facility in the United Kingdom also reinforced investor confidence, suggesting that the company’s machines are beginning to generate meaningful enterprise use cases beyond pilot programs.

Hyundai’s Broader Robotics Strategy

Hyundai has framed robotics as a long-term growth pillar alongside electric vehicles and autonomous driving. The company has repeatedly described robotics as integral to its vision of future mobility, which extends beyond cars to smart factories and automated infrastructure.

The timing of the CEO transition aligns with that broader strategy. As humanoid robots edge closer to real-world industrial deployment, governance and operational discipline become more critical. Scaling production, integrating robots into complex manufacturing systems, and managing global supply chains require a different organizational emphasis than early-stage research.

Investors appear to be betting that Hyundai will leverage its capital resources and manufacturing expertise to move Boston Dynamics from a technology showcase to a revenue-generating industrial division. The positive share reaction suggests confidence that commercialization, rather than experimentation, is becoming the priority.

A Turning Point for Humanoid Robotics

The shift also reflects wider dynamics across the robotics industry. Humanoid platforms are advancing rapidly, with multiple companies planning expanded production runs this year. But sustained growth will depend on proving economic value inside factories, warehouses, and infrastructure sites.

Boston Dynamics remains one of the most technically advanced players in the field. The question now is whether it can translate that technical edge into scaled deployments under Hyundai’s ownership.

Leadership transitions often mark inflection points. In this case, the market response indicates that investors view the change less as instability and more as a signal that robotics is moving from the lab to the balance sheet.

Alibaba Launches RynnBrain Model to Push Deeper Into Physical AI and Robotics

Alibaba has unveiled RynnBrain, an artificial intelligence model designed to help robots understand and interact with the physical world, marking the company’s latest move into physical AI and embodied robotics.

Alibaba on Tuesday introduced RynnBrain, an artificial intelligence model built to support robotics, as competition intensifies among global technology companies to define the software foundations of physical AI. The launch reflects how leading AI developers are moving beyond text and images toward systems that can interpret and act within real-world environments.

RynnBrain is designed to help robots perceive their surroundings, identify objects, and coordinate movement accordingly. In a demonstration released by Alibaba’s DAMO Academy, a robot identifies pieces of fruit and places them into a basket. While visually unremarkable, the task requires a tight integration of perception, reasoning, and motion control, areas that have long constrained the commercial deployment of autonomous machines.

Physical AI as a Strategic Priority

Robotics is increasingly grouped under the broader category of physical AI, which includes machines such as industrial robots and self-driving vehicles that rely on artificial intelligence to operate in dynamic environments. China has made physical AI a strategic focus, viewing it as a critical arena in its technological competition with the United States.

Industry leaders have echoed the scale of the opportunity. Nvidia chief executive Jensen Huang has described AI and robotics as a multitrillion-dollar growth market, a framing that helps explain why major model developers are investing in systems that extend beyond digital tasks. For Alibaba, RynnBrain represents an entry point into this emerging layer of the AI stack, complementing its existing work on large language models under the Qwen brand.

Competing World Models Take Shape

Alibaba’s move places it alongside a growing list of companies developing so-called world models, AI systems intended to help machines understand and simulate the physical world. Nvidia has introduced several robotics-focused models under its Cosmos platform, while Google DeepMind has developed Gemini Robotics-ER, aimed at embodied reasoning and control. Tesla is pursuing a similar approach internally for its Optimus humanoid robot.

The competition is particularly pronounced in humanoid robotics, where China is widely viewed as moving faster than the U.S. toward scaled production. Several Chinese manufacturers have signaled plans to ramp output this year, suggesting that software capable of generalizing across tasks and environments will be a key differentiator.

Open Source as an Adoption Strategy

Like Alibaba’s other recent AI releases, RynnBrain is being offered under an open source model, allowing developers to use and modify it freely. Open sourcing has been central to Alibaba’s strategy for expanding the reach of its AI ecosystem, particularly outside China, and contrasts with more closed approaches taken by some Western rivals.

The decision also reflects a broader industry debate over how foundational physical AI systems should be distributed. By lowering barriers to experimentation, Alibaba is positioning RynnBrain as infrastructure rather than a proprietary product, betting that widespread adoption will accelerate progress in robotics while reinforcing its role in the global AI landscape.

Boston Dynamics’ Atlas Demonstrates Full-Body Agility in Latest Research Showcase

Boston Dynamics and the Robotics & AI Institute unveiled new footage showing the Atlas humanoid robot executing gymnastic-style movements, highlighting advances in whole-body control and simulation-to-reality learning as the company prepares for enterprise deployment.

Boston Dynamics and the Robotics & AI Institute (RAI Institute) this week released new video footage showcasing remarkable advances in full-body control and dynamic agility from their Atlas humanoid research platform. The demonstration, which includes seamlessly linked cartwheels and backflips, highlights how simulation-based learning and whole-body control systems are maturing as the company transitions toward enterprise applications.

In the footage, Atlas moves from stable walking into a sideways cartwheel and then a tightly coordinated backflip, landing on both feet with controlled balance. The sequence underscores two trends in advanced robotics: the ability to synthesize coordinated limb movement across complex actions and the practical utility of simulation-derived policies that transfer directly to real robots.

In a social media post dated February 9, 2026, Boston Dynamics (@BostonDynamics) wrote: “Now that the Atlas enterprise platform is getting to work, the research version gets one last run in the sun. Our engineers made one final push to test the limits of full-body control and mobility, with help from the RAI Institute.”

Simulation to Reality: Whole-Body Learning

At the core of these capabilities is a control framework developed in collaboration with the RAI Institute. Engineers train full-body control policies in simulation, where the robot can accumulate extensive experience across a wide range of motions. The learned policies are then deployed “zero-shot”: they run on the physical robot without task-specific fine-tuning, enabling broad and adaptive behaviors that span walking, jumping, and dynamic balancing.

This approach addresses a persistent challenge in humanoid robotics: bridging the gap between virtual learning environments and the physical dynamics of real hardware. By relying on whole-body learning rather than discrete, handcrafted motion scripts, developers aim to create generalist motion strategies that can handle unpredictable real-world conditions.
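To make the idea of zero-shot transfer concrete, here is a minimal sketch in Python. The article does not say how the Atlas policies are trained, so the technique used here, domain randomization (a common sim-to-real method), is an assumption. A single inverted pendulum with a two-gain feedback controller stands in for the humanoid and its policy, and every name and parameter range (`rollout`, `sample_domain`, `train`, the gain grid) is hypothetical. The controller is tuned only on randomized simulated pendulums, then run once on a held-out "hardware" parameter set with no further tuning.

```python
import math
import random

G = 9.81   # gravity, m/s^2
DT = 0.01  # integration step, s


def rollout(kp, kd, mass, length, friction, steps=300, theta0=0.2):
    """Simulate a torque-controlled inverted pendulum under PD feedback.

    Returns (cost, final_angle, fell), where cost is the summed squared
    angle and fell is True if the pendulum tipped past 90 degrees.
    """
    theta, omega, cost = theta0, 0.0, 0.0
    inertia = mass * length ** 2
    for _ in range(steps):
        torque = -(kp * theta + kd * omega)
        alpha = (G / length) * math.sin(theta) + torque / inertia - friction * omega
        omega += alpha * DT  # semi-implicit Euler step
        theta += omega * DT
        cost += theta ** 2
        if abs(theta) > math.pi / 2:
            return cost + 1e3, theta, True  # large penalty for falling
    return cost, theta, False


def sample_domain(rng):
    """Draw one randomized set of physical parameters (assumed ranges)."""
    return {
        "mass": rng.uniform(0.8, 1.2),
        "length": rng.uniform(0.8, 1.2),
        "friction": rng.uniform(0.0, 0.2),
    }


def train(rng, n_domains=20):
    """Pick the gains with the lowest mean cost across randomized simulations."""
    domains = [sample_domain(rng) for _ in range(n_domains)]
    candidates = [(kp, kd) for kp in (20.0, 35.0, 50.0) for kd in (2.0, 5.0, 10.0)]

    def mean_cost(gains):
        kp, kd = gains
        return sum(rollout(kp, kd, **d)[0] for d in domains) / len(domains)

    return min(candidates, key=mean_cost)


rng = random.Random(0)
kp, kd = train(rng)

# "Hardware": parameters never seen during training, used without any re-tuning.
hardware = {"mass": 1.0, "length": 1.0, "friction": 0.1}
_, final_angle, fell = rollout(kp, kd, **hardware)
print(f"gains=({kp}, {kd}) fell={fell} final_angle={final_angle:.4f} rad")
```

The grid search is only a stand-in for a real learning algorithm. The point of the sketch is the split between a training phase that sees many perturbed simulators and a deployment phase that involves no further adjustment. Here the hardware parameters sit inside the randomized training range, which is the situation domain randomization is meant to create.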

The video also includes candid clips of earlier test failures – missteps, stumbles, and collapses on landing – which illustrate both the difficulty of the underlying problems and the iterative nature of refining control algorithms. In some sequences, Atlas adjusts its footing after a misaligned step, hinting at reflexive recovery behaviors that will be essential for deployment outside controlled test settings.

From Lab Showcase to Workplace Tool

Although the acrobatic moves capture attention for their spectacle, the underlying technology has clear implications for future use cases. Boston Dynamics envisions Atlas working in industrial environments – such as factory floors and warehouses – where robots must navigate varied terrain, maintain balance while handling objects, and react dynamically to changes in their surroundings.

Earlier videos from the company have already shown Atlas performing autonomous manipulation and perception, combining object detection with motion planning to move parts between containers without direct human instruction. Those demonstrations foreshadow a future in which humanoid robots could support assembly lines, material handling, and other manual tasks that require both dexterity and mobility.

Boston Dynamics and its research partners are positioning these advances within a longer timeline of commercial rollout. The latest footage, while still centered on a research platform, reflects a shift toward systems that are robust, adaptable, and capable of the nuanced physical interactions required for real-world work.