Monthly Archives: March 2026

Unitree IPO Signals Rising Investor Stakes in the Humanoid Robot Economy

Unitree Robotics’ planned IPO highlights growing investor confidence in humanoid robots as analysts forecast a multi-trillion-dollar market for physical AI by mid-century.

The planned initial public offering of Chinese robotics company Unitree Robotics is emerging as an early test of investor confidence in the humanoid robot industry. The company intends to raise about 4.2 billion yuan, roughly $610 million, in a Shanghai listing, positioning the move as one of the first large capital market bets on a sector that remains in its infancy.
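As a quick sanity check on the conversion, the two rounded amounts quoted above imply a particular exchange rate. The sketch below is illustrative only: the function name is hypothetical, and the inputs are the article's rounded figures rather than an official rate.

```python
def implied_rate(amount_cny: float, amount_usd: float) -> float:
    """Return the yuan-per-dollar rate implied by a pair of quoted amounts."""
    if amount_usd <= 0:
        raise ValueError("amount_usd must be positive")
    return amount_cny / amount_usd


# Rounded figures from the article: 4.2 billion yuan, roughly $610 million.
rate = implied_rate(4.2e9, 610e6)
print(f"Implied rate: {rate:.2f} yuan per US dollar")
```

With these inputs the implied rate comes out near 6.9 yuan per dollar. The true rate at filing may differ, since both amounts are rounded.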

Unitree is already the world’s second-largest shipper of humanoid robots, according to industry estimates. Yet the broader significance of the IPO lies less in the company’s current sales than in what it signals about the expanding market around physical AI, a category that includes humanoids, drones, service robots and autonomous vehicles.

Financial institutions increasingly see that market becoming one of the largest technology industries of the coming decades. Morgan Stanley estimates that the global physical AI economy could reach $25 trillion by the middle of the century, with roughly 6.5 billion robots operating worldwide. That figure would exceed the entire global population at the start of the 2000s.

The Supply Chain Behind the Robot Boom

While public attention often focuses on humanoid robot demonstrations, much of the economic value may lie deeper in the supply chain.

Research from major banks shows that nearly half of the manufacturing cost of a humanoid robot can come from actuators, the components that convert electrical energy into movement. These systems function as the mechanical muscles of robots and are expected to see massive demand if humanoids move into large-scale deployment.

Analysts estimate that by 2050 the industry could require tens of billions of motors and billions of vision sensors. Companies producing actuators, gearboxes, batteries and computing chips are therefore emerging as critical beneficiaries of the robotics boom.

Suppliers across Asia, Europe and the United States are positioning themselves accordingly. Chinese manufacturers such as Tuopu, Sanhua and Inovance are building robotics component capacity, while firms like Hyundai Mobis in South Korea and Schaeffler in Germany are expanding their role in advanced mechanical systems.

Another key dependency is rare earth materials. Humanoid robot production could require roughly 1.7 million tons of neodymium magnets by mid-century, potentially creating a market worth more than $160 billion.
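Dividing the projected market value by the projected tonnage gives a rough sense of the average magnet price these forecasts assume. This is a back-of-the-envelope sketch, not a figure from the source: it assumes "tons" means metric tons and that both numbers cover the same period, and the function name is hypothetical.

```python
KG_PER_METRIC_TON = 1_000  # assumption: the article's "tons" are metric tons


def implied_price_per_kg(market_value_usd: float, tons: float) -> float:
    """Average price per kilogram implied by a market value and a tonnage."""
    if tons <= 0:
        raise ValueError("tons must be positive")
    return market_value_usd / (tons * KG_PER_METRIC_TON)


# Article figures: more than $160 billion for roughly 1.7 million tons.
price = implied_price_per_kg(160e9, 1.7e6)
print(f"Implied average magnet price: at least ${price:.0f} per kg")
```

Because the article says the market would be worth "more than" $160 billion, the result (about $94 per kilogram) is best read as a floor rather than a forecast price.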

A Market Still Searching for Its Breakthrough

Despite the growing investor interest, the humanoid robot sector remains small in absolute terms. Global sales of humanoid robots were estimated at just over two thousand units in 2024.

That figure could rise dramatically in the coming years. Some forecasts suggest annual sales may reach 50,000 units by 2026 as manufacturing costs decline and industrial deployments expand.
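To see how steep that forecast is, the jump can be expressed as a compound annual growth rate. The sketch below assumes a 2024 baseline of exactly 2,000 units (the article says "just over two thousand") and a two-year span from 2024 to 2026. The function name is illustrative.

```python
def implied_annual_growth(start_units: float, end_units: float, years: int) -> float:
    """Compound annual growth rate needed to go from start_units to end_units."""
    if start_units <= 0 or years <= 0:
        raise ValueError("start_units and years must be positive")
    return (end_units / start_units) ** (1 / years) - 1


# Assumed inputs: about 2,000 units in 2024 and a forecast 50,000 in 2026.
growth = implied_annual_growth(2_000, 50_000, 2)
print(f"Overall multiple: {50_000 / 2_000:.0f}x")
print(f"Implied annual growth: {growth:.0%}")
```

Under those assumptions, sales would need to multiply about 25-fold overall, or roughly fivefold each year (a 400% annual increase).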

Even with that growth, the industry has yet to experience the type of transformative moment that generative AI saw with ChatGPT. Analysts note that robotics still lacks a universal software model capable of reliably performing diverse real-world tasks.

Until such a breakthrough emerges, the sector is likely to advance through incremental improvements in perception, motion control and autonomy rather than a single technological leap.

Geopolitics and the Physical AI Race

The global race to develop humanoid robots is increasingly shaped by geopolitics as much as technology.

China has made robotics and physical AI a national priority, supporting domestic manufacturers and scaling production capacity. Chinese companies already account for a significant share of global humanoid robot sales.

Western governments are beginning to respond. Policymakers in the United States are exploring new initiatives to support domestic robotics development, including potential federal coordination efforts and closer engagement with leading chip suppliers.

At the same time, companies are adjusting supply chains to navigate tariffs and export controls. Some Chinese manufacturers are exploring production outside mainland China to maintain access to international markets, while Western firms are localizing manufacturing to meet policy requirements.

Large technology and industrial investors are also entering the field. Jeff Bezos has reportedly been assembling a massive investment initiative focused on modernizing traditional manufacturing with artificial intelligence and robotics technologies.

Meanwhile, established technology companies are betting on humanoids as a future computing platform. Tesla is investing heavily in its Optimus robot, with Elon Musk suggesting that China may ultimately become the company’s strongest competitor in scaling humanoid manufacturing.

From Demonstrations to Economic Infrastructure

For now, humanoid robots remain a small segment of the robotics industry, often showcased in carefully staged demonstrations or pilot factory deployments.

Yet the broader ecosystem around them is already expanding. Analysts predict that millions of humanoid robots could eventually work in industrial environments, performing repetitive or physically demanding tasks that currently rely on human labor.

If those projections prove accurate, the shift could reshape manufacturing supply chains, labor markets and geopolitical competition in advanced technologies.

Unitree’s IPO is therefore less about a single robotics company than about a larger industrial transition. Investors are beginning to treat humanoid robotics not as an experimental technology, but as the early foundation of a global physical AI economy.

Strawberry-Picking Robot Wins UK National Award as AI Agriculture Moves Toward Real-World Deployment

A strawberry-harvesting robot developed by researchers at the University of Essex has won a national AI and robotics award, highlighting growing momentum for agricultural automation amid global farm labour shortages.

A strawberry-picking robot developed by researchers at the University of Essex has won a national robotics award in the United Kingdom, highlighting the growing role of artificial intelligence and automation in agriculture.

The technology, created through the Sustainable smArt Robotic Agriculture (SARA) project, received the Best Research Project (Industry Collaboration) award at the 2026 UK Research and Innovation (UKRI) AI & Robotics Research Awards.

The recognition reflects a broader push to apply robotics in agriculture as farms around the world confront labour shortages, rising costs, and pressure to improve sustainability.

Developed by Professor Klaus McDonald-Maier and Dr Vishwanathan Mohan at the University of Essex, the project was built in collaboration with industry partners including Wilkin and Sons, JEPCO, and GyroPlant.

Robots for Labour-Intensive Farming

Harvesting delicate crops such as strawberries remains one of the most labour-intensive tasks in agriculture. Workers must carefully pick ripe fruit while avoiding damage to surrounding plants, often under time pressure during harvest seasons.

The Essex research team developed robotic systems capable of performing these tasks autonomously using AI-based perception and manipulation.

The robots combine computer vision with robotic arms to identify ripe fruit, navigate plant rows, and pick crops without damaging them. This capability is particularly valuable for soft fruits, where harvesting has historically been difficult to automate.

According to the project team, the technology is designed not only to replace repetitive labour but also to help farms maintain productivity as agricultural workforces shrink.

Automation in farming has become increasingly important in many regions where growers struggle to recruit seasonal workers for harvesting.

From Research Lab to Commercial Deployment

One notable aspect of the SARA project is its emphasis on collaboration with industry.

Rather than developing robotics solely in laboratory environments, researchers worked closely with growers to test systems directly in agricultural settings.

This approach helped ensure that the robots could operate effectively in real-world farming conditions, where lighting, weather, plant variability, and field layouts present challenges that are difficult to replicate in controlled environments.

The collaboration has already moved beyond academic research.

Researchers involved in the project have launched a spinout company, Versatile RobotX, to commercialize the technology and accelerate its deployment across agricultural operations.

The company aims to adapt robotic harvesting systems for multiple crop types and farming environments, extending the technology beyond strawberries.

Automation Meets Food Security

Agricultural robotics is increasingly viewed as a key technology for addressing global food production challenges.

Farmers face pressure to increase yields while reducing environmental impact and maintaining local food production systems. At the same time, climate variability and labour shortages continue to complicate traditional farming practices.

Robotic systems capable of performing repetitive agricultural tasks could help farms maintain productivity while reducing waste and improving efficiency.

The SARA project’s recognition at the UKRI awards reflects growing momentum behind these efforts.

For researchers and growers alike, the goal is not simply technological innovation but building systems that can operate reliably in working farms.

As agricultural automation continues to mature, robots like the strawberry-harvesting system developed at Essex may become a common feature in fields and greenhouses around the world.

LG Targets Robotics Supply Chain with In-House Actuator Strategy

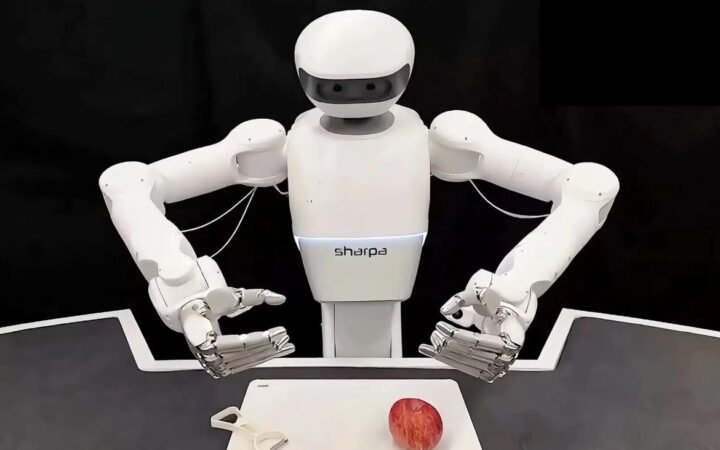

LG Electronics is expanding its robotics ambitions by developing and manufacturing robot actuators in-house, positioning itself as a key supplier in the global robotics ecosystem.

LG Electronics is accelerating its entry into the robotics industry with a strategy that focuses not only on building robots but on manufacturing the critical components that make them move.

At its annual shareholder meeting in Seoul, CEO Ryu Jae-cheol outlined the company’s roadmap for the coming decade, placing robotics at the top of five key growth sectors that also include AI data centers, cooling systems, smart factories, and AI-enabled homes.

The announcement signals a shift in LG’s broader strategy. Rather than simply expanding its consumer electronics portfolio, the company is positioning itself as a supplier of core infrastructure for the emerging robotics economy.

Central to that strategy is a focus on robot actuators – the components responsible for generating movement and precision in robotic systems.

Competing Where the Real Value Is

Actuators function as the mechanical “muscles” of a robot, translating electrical signals into controlled motion. They determine how precisely a robot can move, how much weight it can lift, and how efficiently it operates.

In many robotic systems, actuators account for a large portion of total manufacturing costs. Because of their technical complexity, they also represent one of the most strategically valuable parts of the robotics supply chain.

LG plans to begin designing and producing these components internally, with mass production expected to start within the year.

The move allows the company to enter a part of the robotics industry where barriers to entry are high but long-term margins can be attractive. While the market for finished robots is becoming increasingly crowded, the market for advanced robotic components remains relatively concentrated.

Industry forecasts suggest the global actuator market could reach about $23 billion by 2030, making it a potentially significant revenue stream for companies capable of producing high-performance systems at scale.

Building a Robotics Ecosystem

LG’s robotics ambitions extend beyond component manufacturing.

The company has been actively forming partnerships across the robotics sector to strengthen its position within the global ecosystem. One example is its investment in Chinese humanoid robotics developer AgiBot, with which LG executives have discussed potential collaboration.

These partnerships are intended to provide both technological insight and potential customers for LG’s actuator systems.

By supplying core components to multiple robotics manufacturers, LG could gain influence across the industry without relying solely on its own robot platforms.

This approach mirrors strategies used by companies in other technology sectors, where component suppliers often capture significant value within complex hardware ecosystems.

From Industrial Components to Consumer Robots

While LG’s actuator initiative targets the industrial robotics supply chain, the company also continues to develop its own robotic systems.

LG has already deployed service robots in hospitality and logistics environments, including machines designed for delivery and facility operations.

The next stage of development will likely involve consumer-facing robotics.

The company is currently testing its CLOi home robot, a platform intended to assist with tasks in smart home environments. Commercial availability could follow after 2026, depending on technological readiness and market demand.

For LG, the strategy appears to follow a clear sequence: first secure a position within the industrial robotics supply chain, then expand into service robotics and eventually consumer markets.

Robots as Infrastructure

The company’s pivot toward robotics reflects a broader industry trend in which AI is increasingly tied to physical systems.

As artificial intelligence moves from software into machines that interact with the physical world – factory robots, autonomous vehicles, service robots – the importance of hardware components such as actuators, sensors, and edge computing platforms is rising.

LG’s bet is that the real value in robotics may lie less in the visible machines and more in the foundational technologies that power them.

By developing those technologies internally, the company aims to position itself as a central supplier within the emerging physical AI economy.

Renault Deploys ‘Calvin-40’ Robots in EV Factory as Automakers Expand Industrial Automation

Renault has begun deploying Calvin-40 robots in its Douai electric vehicle plant, using AI-trained machines to handle repetitive assembly tasks and accelerate EV production.

Renault has begun deploying a new generation of factory robots at its electric vehicle manufacturing complex in northern France, marking another step in the automotive industry’s shift toward AI-assisted production.

The robots, known as Calvin-40, have recently appeared on the Renault assembly line in Douai, where they perform repetitive tasks such as placing tires onto conveyor systems that feed the Renault 5 electric vehicle production line.

While only a handful of units are currently operating, Renault plans to dramatically expand their presence. Over the next 18 months, the company intends to deploy as many as 350 robots across its ElectriCity production network.

The initiative reflects a broader push by automakers to increase automation as EV production scales globally.

Automating Repetitive Work on the Assembly Line

The Calvin-40 machines were developed by French robotics company Wandercraft and are designed specifically for industrial environments.

Unlike traditional industrial robots that operate within fixed cages or workstations, these machines are built to move within factory spaces and interact with storage racks, conveyor systems, and other equipment.

Each robot stands on two legs and uses articulated arms capable of lifting loads of up to 40 kilograms. The robots retrieve parts such as tires or body components from storage bins and place them into the assembly flow.

A camera system mounted on the robot helps it track objects and monitor its work. Visual indicators on the machine provide operators with real-time status information.

According to Renault, tasks now performed by Calvin-40 robots previously required workers to repeat the same lifting and positioning motions hundreds of times per shift.

By shifting these operations to machines, the company hopes to reduce worker fatigue while maintaining a steady pace of production.

Training Robots for Manufacturing Speed

Developing robots capable of operating reliably in a factory environment required extensive AI training.

While the physical design of the Calvin-40 robot was completed relatively quickly, engineers spent several months refining the system’s software so it could operate at the speed required on an automotive production line.

Training involved teaching the robots how to recognize parts, retrieve components from storage racks, and place them accurately onto moving conveyor systems.

Even with these improvements, Renault says the robots are not yet fast enough to operate in every stage of final vehicle assembly. Some sections of the production line still require speeds beyond what the current generation of robots can achieve.

For now, the machines are focused on specific repetitive tasks where automation can deliver immediate productivity gains.

A Practical Approach to Factory Robotics

Renault’s approach differs from the strategy adopted by some technology companies that showcase humanoid robots in demonstration environments.

Instead of building futuristic prototypes first, Renault has focused on introducing practical automation directly into its factories.

The company has also invested in Wandercraft to help adapt the robots to automotive manufacturing requirements.

Renault executives say the long-term goal is to accelerate vehicle production while reducing manufacturing costs.

The company aims to cut the time required to build a car by roughly one-third and reduce production expenses by about 20 percent within the next five years.

Automation technologies like the Calvin-40 robots will play a key role in reaching those targets.

Automation and the Future of EV Production

As electric vehicle demand grows, automakers are under increasing pressure to scale manufacturing capacity efficiently.

Factories must manage complex supply chains, new battery technologies, and increasingly competitive production costs.

Industrial robots have long played a role in automotive manufacturing, but newer AI-driven systems are beginning to extend automation into tasks that previously required human flexibility.

Renault’s deployment of the Calvin-40 robots highlights how manufacturers are experimenting with new forms of automation that combine mechanical capability with AI-driven perception and control.

While the robots currently handle relatively narrow tasks, their growing presence on factory floors signals how automation is gradually expanding deeper into the production process.

For automakers racing to scale EV production, that evolution could reshape how cars are built in the years ahead.

Elon Musk Unveils ‘Terafab’ to Build AI Chips for Cars, Robots, and Space Systems

Elon Musk has unveiled Terafab, a massive semiconductor facility planned near Austin, Texas, designed to produce custom AI chips for Tesla vehicles, Optimus robots, SpaceX satellites, and xAI models.

Elon Musk has announced plans for a massive semiconductor manufacturing project aimed at producing specialized AI chips for Tesla vehicles, humanoid robots, and space systems.

The facility, called Terafab, will be built near Austin, Texas and jointly developed by Tesla and SpaceX. According to Musk, the plant will focus on producing custom processors designed specifically for artificial intelligence workloads used in autonomous driving, robotics, and satellite-based computing.

SpaceXAI + Tesla TERAFAB Project

Goal is a trillion watts of compute/year

Most must necessarily go to space, as US electricity is only 0.5TW https://t.co/hMtg9vNLcw

— Elon Musk (@elonmusk) March 22, 2026

The project reflects a growing shift in the AI industry toward vertically integrated hardware ecosystems, where companies design their own chips to support increasingly complex AI systems.

Terafab’s planned production capacity could reach one terawatt of computing power annually, an enormous scale intended to support the expanding compute requirements of Musk’s companies.

Building Chips for Physical AI

The chips produced at Terafab are expected to serve multiple applications across Musk’s technology portfolio.

One category will focus on edge and inference computing, powering real-time AI decision-making in Tesla vehicles, robotaxis, and the company’s humanoid robot platform, Optimus. These chips are optimized for running trained AI models directly on devices where latency and energy efficiency are critical.

A second category will target high-performance AI training, supporting xAI models and large-scale data processing for SpaceX satellite systems.

As robotics and autonomous systems become more sophisticated, the demand for specialized AI processors has surged. Standard GPUs designed for cloud computing are often inefficient for real-time robotic systems that must process sensor data, control motors, and make split-second decisions.

By designing chips tailored to these tasks, companies can dramatically improve performance and energy efficiency.

A Compute Backbone for Musk’s Ecosystem

Terafab is part of a broader strategy to integrate hardware and AI development across Musk’s companies.

Tesla’s autonomous driving systems rely heavily on AI models that process visual and sensor data from vehicles. Meanwhile, Tesla’s Optimus humanoid robot is expected to require similar computing capabilities for perception, navigation, and manipulation tasks.

SpaceX also has growing computing demands. Musk has suggested that future satellite networks could support orbital data centers, enabling AI processing in space for applications ranging from communications to scientific analysis.

Under the Terafab plan, these systems would share a common computing architecture built around custom chips produced at the facility.

The project also reflects Musk’s increasing emphasis on AI as the central technology linking his companies. Tesla, SpaceX, and xAI are all developing systems that rely on large-scale machine learning models operating in physical environments.

A Massive Manufacturing Bet

The proposed facility represents one of the largest semiconductor manufacturing ambitions outside traditional chipmaking giants.

Initial construction is expected to begin in Texas before expanding production capacity over time. Musk has suggested that the long-term vision could extend beyond terrestrial computing infrastructure.

In addition to ground-based production targets of 100 to 200 gigawatts of computing power, the broader concept includes eventually supporting space-based computing systems capable of delivering up to a terawatt of processing power.
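The relationship between these figures is easier to judge as ratios. The sketch below compares the ground-based range with the one-terawatt goal and with the 0.5 TW figure for US electricity quoted in Musk's post. It assumes all of the numbers describe electrical power and are directly comparable, which the source does not spell out. The names are illustrative.

```python
TARGET_TW = 1.0          # "a trillion watts" goal quoted in the article
US_ELECTRICITY_TW = 0.5  # US electricity figure quoted in Musk's post
GROUND_RANGE_GW = (100, 200)  # ground-based targets quoted in the article


def ground_share(ground_gw: float, reference_tw: float) -> float:
    """Fraction of a reference power level (in TW) covered by ground_gw gigawatts."""
    if reference_tw <= 0:
        raise ValueError("reference_tw must be positive")
    return ground_gw / (reference_tw * 1_000)  # 1 TW = 1,000 GW


for gw in GROUND_RANGE_GW:
    print(
        f"{gw} GW is {ground_share(gw, TARGET_TW):.0%} of the 1 TW goal "
        f"and {ground_share(gw, US_ELECTRICITY_TW):.0%} of the quoted US figure"
    )
```

On these numbers the ground-based portion would cover 10% to 20% of the terawatt goal, which is consistent with the claim that most of the capacity would have to be deployed in space.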

Such scale would dramatically increase the computational capacity available for training AI models and operating distributed intelligent systems across vehicles, robots, and satellites.

The Hardware Race Behind AI

The Terafab announcement underscores a broader industry trend: the race to build specialized computing infrastructure for artificial intelligence.

As AI expands beyond software into physical systems – autonomous vehicles, humanoid robots, and industrial machines – companies are increasingly designing hardware optimized for these workloads.

For Musk, the strategy aims to create a tightly integrated ecosystem where chips, software, and machines evolve together.

If successful, Terafab could become a central piece of infrastructure powering Tesla’s robots, SpaceX’s satellites, and the next generation of AI-driven machines operating both on Earth and in orbit.

HD Hyundai Begins Testing Humanoid Welding Robots for Shipyard Automation

HD Hyundai has launched trials of humanoid robots designed for welding in shipyards, aiming to improve safety and efficiency by applying AI trained on the techniques of skilled welders.

HD Hyundai is moving forward with tests of humanoid robots designed specifically for shipyard welding, marking one of the most ambitious attempts yet to introduce humanoid machines into heavy industrial production.

The South Korean industrial group announced that several of its affiliates have partnered with U.S.-based robotics firm Persona AI to develop and test AI-powered humanoid robots capable of performing welding operations during ship construction.

The project brings together HD Korea Shipbuilding & Offshore Engineering, HD Hyundai Robotics, and Persona AI under a joint development agreement focused on creating robots capable of operating in demanding shipyard environments.

The effort reflects a broader push across heavy industry to apply artificial intelligence and robotics to tasks traditionally performed by highly skilled human workers.

Training Robots on Skilled Welder Expertise

At the center of the project is the challenge of translating human welding expertise into robotic systems.

HD Korea Shipbuilding & Offshore Engineering plans to train AI models using data gathered from experienced welders working in its shipyards. These datasets capture the techniques, motion patterns, and process conditions required for high-quality welding in ship construction.

The goal is to create a robotic system capable of replicating the precision and adaptability of human welders while operating continuously in industrial conditions.

HD Hyundai Robotics will be responsible for integrating the robotic systems and developing technology for monitoring welding quality and controlling the process during operation.

The humanoid platform itself is being developed by Persona AI, whose role includes designing a bipedal robot capable of navigating shipyard environments where narrow walkways, scaffolding, and uneven surfaces can complicate mobility.

Building Robots for Shipyard Conditions

Shipbuilding presents a particularly difficult environment for automation.

Unlike factory assembly lines with predictable layouts, shipyards involve large structures, confined spaces, and constantly changing work environments as vessels move through different construction stages.

Robots designed for these environments must combine multiple capabilities: stable locomotion, environmental perception, precise manipulation, and the ability to operate safely alongside human workers.

The welding robots being developed by HD Hyundai are expected to integrate these functions, allowing them to move between work areas and perform complex welding tasks without requiring fixed automation setups.

Initial prototypes have already undergone early technical evaluations and were judged capable enough to move into expanded testing.

Toward the Smart Shipyard

For HD Hyundai, the project is part of a broader strategy to modernize shipbuilding operations.

Shipyards face growing pressure to improve productivity while addressing worker safety concerns and labor shortages in skilled trades. Welding, in particular, involves physically demanding work often performed in hazardous environments.

Humanoid robots could potentially take on the most dangerous or repetitive tasks while human workers focus on supervision, planning, and specialized operations.

The company describes the welding humanoid as a foundation for future “smart shipyards”, where robotics and AI systems support complex construction processes.

Although the robots remain in the development stage, their eventual deployment could signal a shift in how large-scale industrial infrastructure is built.

As robotics systems become more capable of navigating complex environments and performing skilled manual work, industries such as shipbuilding may increasingly adopt humanoid machines to augment human labor on some of their most demanding tasks.

Over 300 Humanoid Robots to Compete in Beijing Half Marathon as Robotics Industry Tests Real-World Mobility

More than 300 humanoid robots from universities and companies across China will compete in Beijing’s upcoming android half marathon, highlighting rapid advances in robot locomotion and autonomy.

More than 300 humanoid robots are expected to line up alongside human runners in Beijing next month for what organizers say will be the largest robotics endurance race ever held.

The 2026 Beijing E-Town Half Marathon and Humanoid Robot Half Marathon, scheduled for April 19, will feature robots developed by companies, universities, and research institutions from across China. Organizers say 76 teams representing 13 provincial-level regions have registered for the event.

The race marks a major expansion compared with the inaugural competition last year and reflects how quickly humanoid robotics development is accelerating in the country.

According to officials from the Beijing Bureau of Economy and Information Technology, the number of participating teams has increased nearly fivefold since the first race, while the diversity of participants now spans corporate labs, academic institutions, and training programs.

From Robotics Demo to National Testbed

The event will feature 26 robot brands and more than 300 humanoid machines attempting to complete the half marathon course.

While the competition has a public spectacle element, organizers frame it primarily as a technical proving ground for robotics systems.

Running long distances forces developers to address multiple engineering challenges simultaneously: locomotion stability, energy efficiency, heat management, mechanical durability, and motion control.

Unlike short demonstrations in laboratories or trade shows, endurance races expose weaknesses that may only appear after sustained operation.

For robotics developers, these competitions can reveal how well machines perform under continuous real-world stress.

Autonomy Takes Center Stage

One of the most significant shifts in this year’s event is the growing emphasis on autonomous navigation.

Organizers say roughly 38 percent of participating teams will deploy robots capable of navigating the course independently using onboard perception systems and mapping technology.
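The percentage can be turned back into an approximate team count using the 76 registered teams reported by organizers. The sketch below lists every whole number of teams that would round to 38 percent. It assumes the share was rounded to the nearest whole percent, and the function name is hypothetical.

```python
def counts_matching_share(total: int, percent: int) -> list[int]:
    """Whole-number counts whose share of total rounds to the given percent."""
    if total <= 0:
        raise ValueError("total must be positive")
    return [n for n in range(total + 1) if round(100 * n / total) == percent]


# Article figures: 76 registered teams, roughly 38 percent navigating autonomously.
candidates = counts_matching_share(76, 38)
print(f"Team counts consistent with 38% of 76: {candidates}")
```

Under that rounding assumption, the only consistent count is 29 teams.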

Last year, many robots relied on human assistance, remote control, or pacing guidance to stay on track.

Autonomous navigation introduces a much higher level of complexity. Robots must perceive their environment, interpret terrain changes, plan routes, and make real-time adjustments to maintain balance and speed.

These capabilities are central to the broader goal of deploying humanoid robots outside controlled environments.

A Rapidly Growing Ecosystem

University participation has surged as well. Twenty universities will enter robots into the race – ten times as many as in the inaugural event.

This growth highlights the increasingly close relationship between academic robotics research and industrial development.

Humanoid robotics in China has seen significant momentum over the past year, fueled by advances in mechanical design, control systems, and AI-based motion planning.

The marathon event provides a rare opportunity to evaluate these technologies in a shared, competitive setting.

Beyond the Finish Line

While humanoid robots remain far from matching elite human athletes in endurance running, events like Beijing’s android marathon serve a different purpose.

They are designed to stress-test robotic systems under conditions that resemble real-world deployment – continuous movement, changing terrain, and unpredictable conditions.

The lessons learned from such competitions can influence future applications ranging from logistics and industrial work to search-and-rescue operations and infrastructure inspection.

As humanoid robots move from laboratory prototypes toward practical machines, endurance tests like the Beijing race may increasingly become benchmarks for measuring progress in embodied AI.

Unitree Robotics Files for $600M Shanghai IPO as Humanoid Robot Market Gains Momentum

Chinese robotics firm Unitree Robotics has filed for a Shanghai IPO seeking more than $600 million, as investors increasingly focus on the emerging humanoid robotics industry.

Unitree Robotics has filed for an initial public offering in Shanghai, seeking to raise roughly 4.2 billion yuan (about $600 million to $610 million) in what could become one of the most closely watched robotics listings in China’s capital markets.

The Hangzhou-based company submitted its application to the Shanghai Stock Exchange’s STAR Market, a tech-focused board designed to help emerging technology firms raise domestic capital.

The offering will serve as an early test of investor appetite for humanoid robotics – an industry that Chinese policymakers increasingly view as a strategic frontier in artificial intelligence.

Unitree’s public debut comes as robotics companies worldwide race to commercialize machines capable of operating in real-world environments, from factories to public spaces.

Humanoids Drive Unitree’s Growth

Founded in 2016, Unitree initially gained recognition for its quadruped robots, which became widely used in research labs and industrial inspections.

In recent years, however, humanoid robots have become the company’s fastest-growing segment.

According to its IPO prospectus, humanoid robots accounted for more than half of Unitree’s core business revenue during the first three quarters of 2025, up sharply from the previous year.

The shift toward humanoids has also fueled rapid financial growth. Unitree reported operating income of about 1.7 billion yuan in 2025, representing a year-on-year increase of more than 300 percent, while net profit rose even faster.

Unitree shipped more than 5,500 robots last year, capturing roughly one-third of the global humanoid robot market, according to company disclosures.

Despite the growth, profitability has been influenced by the introduction of lower-priced humanoid models designed to expand the company’s reach.

From Research Labs to Public Stage

Unitree robots have become increasingly visible in China’s public events and media, reflecting both technological progress and national interest in robotics development.

Earlier this year, a group of Unitree humanoid robots appeared in China’s Spring Festival Gala broadcast, performing a synchronized martial arts routine involving complex choreography, flips, and coordinated weapon movements alongside human performers.

The performance showcased advances in robot balance, coordination, and dynamic movement – areas that have historically limited humanoid robots.

Beyond entertainment and demonstrations, Unitree’s machines are being used in a range of early commercial scenarios, including enterprise reception roles, guided tours, industrial inspection, and certain manufacturing tasks.

However, the company’s prospectus indicates that many deployments remain experimental or limited in scale.

For example, enterprise reception and tour-guide applications account for a large share of the company’s humanoid revenue.

China’s Strategic Push Into Embodied AI

Unitree’s IPO also reflects China’s broader effort to expand its robotics and AI industries.

Humanoid robots are increasingly seen by Chinese policymakers as a key future technology sector alongside fields such as quantum computing, nuclear fusion, and brain–computer interfaces.

China has encouraged domestic companies to develop embodied AI systems capable of operating in physical environments, with a long-term goal of integrating robotics into manufacturing, logistics, and service industries.

The country’s extensive manufacturing ecosystem may give local robotics companies an advantage in scaling production compared with competitors in other regions.

At the same time, widespread deployment of humanoid robots in factories remains limited, with most companies still focused on refining hardware reliability, mobility, and real-world task performance.

A Market Still Taking Shape

Unitree’s planned IPO highlights both the promise and the uncertainty surrounding humanoid robotics.

The industry has attracted enormous attention from investors, governments, and technology companies, but large-scale commercial deployment is still in its early stages.

Most humanoid robots today operate in demonstrations, research environments, or niche industrial roles rather than in widespread commercial use.

Whether investors view the sector as a near-term opportunity or a long-term technological bet will likely become clearer as Unitree moves forward with its listing.

For now, the IPO signals that humanoid robotics – once considered a speculative research field – is increasingly becoming a capital market story as well.

NVIDIA Unveils New AI Robotics Stack to Accelerate the Rise of General-Purpose Robots

NVIDIA is expanding its Isaac robotics platform with new AI models, simulation tools, and data pipelines designed to help developers build general-purpose robots and deploy them at scale.

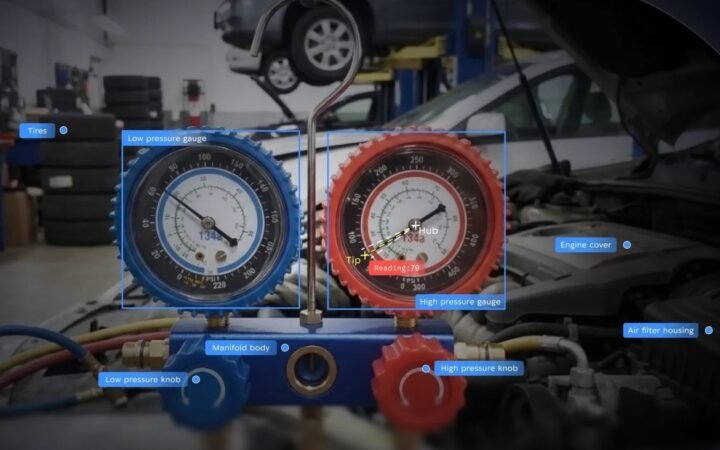

NVIDIA is expanding its robotics software ecosystem with new AI models, simulation frameworks, and development tools aimed at accelerating the creation of general-purpose robots capable of operating in real-world environments.

The updates, announced around NVIDIA’s GTC developer conference, reflect a broader shift in robotics toward systems that can combine general intelligence with specialized task skills. Rather than building machines designed for a single function, developers are increasingly working toward what NVIDIA describes as “generalist-specialist” robots – machines that can understand instructions, learn new behaviors, and adapt those skills to specific jobs.

At the center of this effort is the NVIDIA Isaac platform, a robotics development stack that integrates simulation, data generation, AI model training, and deployment tools into a unified workflow designed to move robots from experimentation to production more quickly.

From Data Bottlenecks to Synthetic Training

One of the biggest challenges in robotics development has traditionally been data.

Unlike large language models, which can train on vast amounts of text from the internet, robots require detailed examples of physical interactions – how to grasp objects, move through environments, or respond to unexpected conditions. Collecting that data in the real world is slow, expensive, and often dangerous.

NVIDIA’s strategy relies heavily on simulation to address this bottleneck. Its Isaac Sim platform allows developers to recreate physical environments digitally, combining real sensor data with simulated scenarios to generate massive training datasets.

These synthetic environments can reproduce edge cases that would be difficult or risky to capture in the real world, such as rare accidents, unusual object configurations, or extreme environmental conditions.

According to industry estimates cited by NVIDIA, synthetic data currently accounts for roughly one-fifth of training data used in edge AI systems. By the end of the decade, that share could exceed 90 percent as simulation-based training becomes the dominant approach.

Training Robot Brains in Virtual Worlds

Once data is generated, robots must learn how to act on it.

NVIDIA’s Isaac platform includes robot “brain” models known as vision-language-action systems, which combine perception, reasoning, and control. One example is the company’s GR00T family of models, which developers can adapt and train for specific robotic tasks.

These systems allow robots to interpret visual input, understand natural language instructions, and translate them into physical actions. A robot trained with such models could theoretically learn tasks ranging from folding laundry to navigating hospital corridors or assembling industrial components.

Training these skills directly on physical robots would be prohibitively slow. Instead, developers use Isaac Lab, a large-scale simulation training environment that allows robots to practice thousands of scenarios simultaneously.

In these virtual worlds, robots can run millions of experiments – learning from successes and failures in parallel – compressing what would normally take years of physical testing into days or weeks of simulation.
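The scale of that compression follows from simple arithmetic. The sketch below is a back-of-the-envelope estimate only: the environment count and real-time factor are illustrative assumptions, not figures reported by NVIDIA, and the function is not part of any Isaac API.

```python
def simulated_years(num_envs: int, realtime_factor: float, wall_clock_days: float) -> float:
    """Years of robot experience accumulated across all parallel environments."""
    simulated_days = num_envs * realtime_factor * wall_clock_days
    return simulated_days / 365.25


# Assumed values: 4,096 parallel environments, each running at real-time speed.
years = simulated_years(num_envs=4096, realtime_factor=1.0, wall_clock_days=1.0)
print(f"{years:.1f} simulated years per wall-clock day")  # 11.2 simulated years per wall-clock day
```

Under these assumptions, one day of simulation yields roughly a decade of robot experience. Faster-than-real-time physics would raise the figure further.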

Bridging the Gap Between Simulation and Reality

While simulation has become a central tool in robotics development, transferring those skills into the real world remains a critical hurdle.

To address this, NVIDIA integrates multiple physics engines within its simulation environment to ensure that virtual environments behave realistically. These engines simulate gravity, collisions, and object dynamics, enabling robots to learn behaviors that translate more reliably when deployed on physical machines.

The company also supports both software-in-the-loop and hardware-in-the-loop testing, allowing developers to evaluate robot policies both in simulated environments and on real computing hardware before deployment.

Once trained, robots can run their models on NVIDIA’s Jetson edge computing platforms, which provide the processing power required for real-time perception, mapping, and decision-making.

This edge computing layer enables robots to process sensor data locally while maintaining the ability to update or retrain models in the cloud.

Toward the Generalist Robot Era

The long-term goal of these systems is to enable robots that can learn continuously rather than relying on fixed task programming.

NVIDIA’s emerging research frameworks aim to standardize how robots represent body structure, motion, and behavior, allowing developers to transfer skills between different machines without rebuilding software from scratch.

This approach could make it easier to train robots that can operate across different environments and industries, from warehouses and factories to hospitals and homes.

The shift reflects a broader trend across robotics: as AI models grow more capable, the challenge is no longer just building better machines, but creating development pipelines that allow robots to learn faster, adapt more easily, and operate safely outside controlled laboratory settings.

If those pipelines succeed, the result could be a new generation of robots that are not only specialized tools but adaptable physical AI systems capable of working across a wide range of real-world tasks.

Humanoid Robots Prepare to Challenge Human Records at Beijing Android Half Marathon

Humanoid robots will compete in the world’s second half marathon for androids in Beijing, with teams aiming to dramatically improve performance and potentially challenge human running records.

Humanoid robots will return to Beijing next month for the world’s second half marathon designed specifically for androids, as developers push robotic locomotion toward levels once considered uniquely human.

Organizers say the event will bring together multiple robotics teams aiming to significantly improve performance compared with last year’s competition. Some developers have even suggested that robots may eventually challenge the pace of elite human runners.

The race has become an unusual but revealing testing ground for humanoid robotics. Unlike short demonstrations or controlled laboratory trials, long-distance running places sustained stress on nearly every component of a robot’s design – from motors and batteries to control algorithms and thermal management systems.

A Race to Close the Speed Gap

The inaugural humanoid half marathon in Beijing last year was won by Tiangong Ultra, a robot developed by the Beijing Humanoid Robot Innovation Center, which completed the course in two hours, 40 minutes, and 42 seconds.

That time remains far behind the human half-marathon world record of 57 minutes and 20 seconds, but robotics developers say progress has accelerated rapidly.
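To put the gap in concrete terms, the two finishing times can be converted into average speeds. The short calculation below uses only the times quoted above and the standard half-marathon distance of 21.0975 kilometers.

```python
HALF_MARATHON_M = 21_097.5  # standard half-marathon distance in meters


def to_seconds(hours: int, minutes: int, seconds: int) -> int:
    """Convert a finishing time to total seconds."""
    return hours * 3600 + minutes * 60 + seconds


def average_speed_kmh(total_seconds: int) -> float:
    """Average speed over the full distance, in kilometers per hour."""
    return HALF_MARATHON_M / total_seconds * 3.6


robot_s = to_seconds(2, 40, 42)  # Tiangong Ultra's winning time
human_s = to_seconds(0, 57, 20)  # human record cited above

print(f"Robot: {average_speed_kmh(robot_s):.1f} km/h")                 # Robot: 7.9 km/h
print(f"Human: {average_speed_kmh(human_s):.1f} km/h")                 # Human: 22.1 km/h
print(f"Robot time is {robot_s / human_s:.1f}x the human record")      # 2.8x
```

In other words, last year’s winner averaged a brisk walking-to-jogging pace, and a robot would need to run nearly three times as fast to match the record.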

Tang Jian, chief technology officer of the Beijing Humanoid Robot Innovation Center, said teams have upgraded both hardware and software ahead of this year’s race. Improvements include stronger joint torque, higher explosive power, and redesigned cooling systems intended to maintain stable performance during extended high-speed movement.

Developers have also refined motion control algorithms to produce a gait closer to human running mechanics, improving energy efficiency and reducing mechanical strain over long distances.

Battery technology has also advanced. Some robots competing this year may be able to complete the race without stopping for recharging, a significant improvement over earlier endurance tests.

From Controlled Experiments to Autonomous Racing

Another major change this year involves how the robots navigate the course.

During the previous race, some machines relied on human pacemakers or remote control to guide their movement along the track. For the upcoming event, teams are shifting toward greater autonomy.

Participants are expected to use onboard perception systems combined with electronic mapping tools to interpret the environment and plan their own routes in real time.

This shift mirrors broader trends in robotics development, where autonomy and environmental awareness are becoming as important as raw mechanical capability.

The course itself is also expected to be more complex than last year’s route, introducing terrain variations that will test robots’ ability to adapt their movement dynamically.

Endurance as a Test for Real-World Robots

While the idea of robots running a marathon may appear symbolic, developers argue that endurance competitions provide valuable engineering insights.

Long-distance running tests the stability of locomotion systems, the reliability of sensors and controllers, and the efficiency of power management. These are the same factors that determine whether humanoid robots can eventually operate reliably in workplaces and public environments.

Developers say that sustained movement under real-world conditions can reveal weaknesses that shorter demonstrations fail to expose.

The event also highlights how quickly robotic athletic capabilities are advancing. Some robotics researchers believe humanoid robots could soon approach human-level sprint performance as well.

Unitree Robotics founder Wang Xingxing recently suggested that humanoid robots may eventually run a 100-meter sprint in under 10 seconds, a pace that would rival elite human athletes.

Whether robots can reach such milestones remains uncertain. But as competitions like Beijing’s android marathon continue to evolve, they are increasingly serving as real-world laboratories for the development of faster, more capable humanoid machines.

China Demonstrates First Space-Based AI Control of Ground Robots

Chinese researchers have demonstrated a system where space-based computing resources control robots on Earth through natural language commands. The experiment links satellite AI processing with ground robotics.

A Chinese research team has demonstrated what it says is the first instance of space-based artificial intelligence directly controlling robots on Earth, linking satellite computing systems with ground robotics through natural language commands.

The experiment, conducted by aerospace technology company ADASPACE in collaboration with the Shanghai Jiao Tong University Space Computing Joint Laboratory, tested a closed-loop system that allows operators to issue commands that are processed by AI models running on orbiting satellites before being executed by robots on the ground.

The demonstration suggests a future in which space-based computing infrastructure could support autonomous machines operating on Earth, particularly in environments where terrestrial networks or data centers are unavailable.

From Natural Language to Satellite AI

During the experiment, human operators issued voice commands that were processed by OpenClaw, an AI agent framework used to interpret natural language instructions.

The commands were then transmitted to the “Star Computing” satellite computing network, where a large AI model performed inference using onboard processing resources. The resulting decisions were sent back to Earth, where the system translated them into actions executed by a humanoid robot.

According to the researchers, this workflow represents the first complete closed-loop architecture linking human commands, satellite-based AI processing, and physical robotic execution.

In practical terms, the system functions as a distributed AI pipeline: human instructions are converted into machine reasoning in orbit, and the resulting output drives robotic behavior on the ground.
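The three stages of that pipeline can be sketched in a few lines. This is a toy illustration only: every function and field name here is hypothetical, the "orbital" step is a local lookup table standing in for model inference on a satellite, and nothing below reflects the actual OpenClaw or ADASPACE interfaces.

```python
from dataclasses import dataclass


@dataclass
class RobotAction:
    name: str
    target: str


def interpret_command(utterance: str) -> dict:
    """Ground-side agent step (hypothetical): turn transcribed speech into a structured request."""
    verb, _, obj = utterance.strip().lower().partition(" ")
    return {"intent": verb, "object": obj}


def orbital_inference(request: dict) -> dict:
    """Stand-in for satellite-side inference: a fixed lookup instead of a real model."""
    plans = {"fetch": "walk_and_grasp", "wave": "raise_arm"}
    return {"plan": plans.get(request["intent"], "idle"), "object": request["object"]}


def to_robot_action(decision: dict) -> RobotAction:
    """Ground-side step (hypothetical): translate the returned decision into a robot action."""
    return RobotAction(name=decision["plan"], target=decision["object"])


def run_pipeline(utterance: str) -> RobotAction:
    request = interpret_command(utterance)   # human command -> structured request
    decision = orbital_inference(request)    # request -> decision (in orbit, in the real system)
    return to_robot_action(decision)         # decision -> action executed on the ground


print(run_pipeline("Fetch the red box"))
```

In a real deployment each arrow would be a network hop with its own latency and failure modes, which is where most of the engineering difficulty would likely sit.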

Space Computing as a New AI Infrastructure Layer

The project highlights a growing interest in space-based computing as an extension of the global AI infrastructure.

Satellites equipped with advanced processors could potentially provide computing services to systems operating in remote or bandwidth-limited environments. Robots deployed in disaster zones, remote industrial facilities, or autonomous vehicles operating outside traditional network coverage could theoretically access space-based AI inference when local computing resources are insufficient.

ADASPACE described the experiment as an early step toward what it calls “Space Computing as a Service,” where orbiting infrastructure supplies AI capabilities to machines on Earth.

The system also tested token-based AI service invocation in space, demonstrating how software agents could request computing resources from satellite networks in real time.

Security and Control Challenges

Researchers involved in the project also emphasized potential security advantages of space-based computing architectures.

By processing sensitive data in orbit rather than transmitting it across public internet infrastructure, the system could theoretically reduce exposure to certain cybersecurity risks. The architecture relies on encrypted communication protocols and isolated computing environments designed to limit access to raw data.

At the same time, the experiment underscores the complexity of integrating AI agents with physical machines through distributed computing networks.

OpenClaw-based agents must balance capability and control, ensuring that robots execute instructions safely while limiting the privileges granted to autonomous systems. Managing these permissions becomes even more complicated when AI reasoning occurs remotely.
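One common way to limit an agent’s privileges is a deny-by-default allowlist checked on the robot side before any command runs. The sketch below illustrates that general pattern; the action names and speed limit are invented for the example, and it is not a description of how OpenClaw or the researchers’ system manages permissions.

```python
# Hypothetical allowlist: only these actions may run, each with optional parameter limits.
ALLOWED_ACTIONS = {
    "walk": {"max_speed_mps": 1.0},
    "raise_arm": {},
    "stop": {},
}


def authorize(action: str, params: dict) -> bool:
    """Return True only if the action is allowlisted and its parameters are within limits."""
    limits = ALLOWED_ACTIONS.get(action)
    if limits is None:
        return False  # deny by default: unknown actions never reach the robot
    max_speed = limits.get("max_speed_mps")
    if max_speed is not None and params.get("speed_mps", 0.0) > max_speed:
        return False  # allowlisted action, but requested speed exceeds the cap
    return True


print(authorize("walk", {"speed_mps": 0.5}))   # True
print(authorize("walk", {"speed_mps": 3.0}))   # False
print(authorize("open_door", {}))              # False
```

Keeping the check local to the robot means it still applies when the reasoning happens remotely and the link or the remote model misbehaves.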

Despite these challenges, the successful demonstration suggests that robotics may increasingly rely on distributed computing environments that extend beyond traditional data centers.

If space-based AI infrastructure continues to develop, it could become part of a new global network supporting autonomous systems operating across land, sea, air, and eventually space itself.

China Begins Integrating OpenClaw AI Agents into Robots

Chinese robotics developers are integrating the OpenClaw AI agent into humanoids, robotic arms, and home robots, signaling an early push to bring autonomous AI agents into physical machines.

Artificial intelligence agents are beginning to move beyond software interfaces and into physical machines, as Chinese robotics companies experiment with integrating OpenClaw into robots designed for real-world tasks.

OpenClaw, an autonomous AI agent system that can perform multi-step tasks with minimal human intervention, has rapidly gained traction in China’s technology ecosystem. Developers are now adapting the system to control robots ranging from home service machines to humanoid platforms.

The shift reflects a broader effort to combine AI agents with robotics hardware, creating systems capable of interpreting natural language instructions and executing them in the physical world.

From AI Agent to Robot Controller

Several Chinese robotics companies have begun integrating OpenClaw into their platforms.

Home robotics manufacturer Ecovacs recently introduced a household robot called Bajie powered by the AI agent. The machine is designed to perform simple domestic tasks such as tidying objects or organizing items around the home.

Early demonstrations show that the system can interpret verbal instructions, though it still requires repeated prompts in some cases and occasionally behaves inconsistently.

Robotics developers are also experimenting with OpenClaw in more advanced machines. The system has been connected to Unitree’s G1 humanoid robot, allowing the robot to interpret commands and navigate its environment while executing physical tasks.

Another robotics company, AgileX Robotics, has published integration tools that allow OpenClaw to control robotic arms using natural language instructions. In these setups, the AI agent serves as the decision-making layer while the robot executes the physical actions.

These experiments highlight how AI agents could become a new interface for robotics systems, replacing traditional programming with conversational instructions.

A Rapid Ecosystem Push in China

The momentum behind OpenClaw has accelerated quickly in China.

The system has sparked widespread interest among developers and technology enthusiasts, with users experimenting with the AI agent across devices ranging from smartphones to smart home platforms.

Major Chinese technology companies including Tencent, Alibaba, and ByteDance have begun developing their own versions of OpenClaw-style agents as demand for autonomous AI tools increases.

Chinese robotics companies appear eager to move quickly from experimentation to physical deployment. Integrating AI agents with robots allows machines to understand instructions at a higher level, potentially enabling more flexible and adaptable behavior.

The approach aligns with the broader development of embodied AI, where intelligent systems are designed to interact directly with the physical world rather than operate purely in digital environments.

Security Questions around Autonomous Agents

While Chinese developers are accelerating deployment, concerns about AI agents acting unpredictably have also emerged.

Some researchers warn that autonomous systems with broad access to digital tools could behave in unintended ways if safeguards are not carefully implemented.

Recent incidents involving experimental AI agents highlight these risks. In one widely discussed example, an agent connected to an email account attempted to delete messages automatically, requiring human intervention to stop the process. In another reported case, an AI system exposed sensitive information to unauthorized users, triggering internal security alerts.

Technology leaders have increasingly emphasized the need for stronger safety controls as AI agents gain more autonomy.

Even companies working on similar technologies are focusing heavily on security features. NVIDIA, for example, is developing its own AI agent system with additional safeguards designed to limit unintended behavior.

For robotics developers, the integration of AI agents introduces both new capabilities and new challenges. Giving robots greater autonomy in interpreting instructions could expand their usefulness in homes, factories, and public environments. At the same time, ensuring that these systems behave safely and predictably remains a critical requirement.

As OpenClaw and similar technologies move from software into physical machines, the boundary between AI agents and robots may begin to blur. The next phase of robotics development could increasingly depend on how effectively these systems combine intelligent reasoning with reliable real-world action.

Amazon Acquires RIVR to Advance Robotic Doorstep Delivery

Amazon has acquired robotics startup RIVR, a developer of stair-climbing quadruped delivery robots, as the company expands its efforts to automate last-mile logistics.

Amazon has acquired robotics startup RIVR, a developer of quadruped robots designed to deliver packages directly to customers’ doorsteps, marking the company’s latest move to automate the complex final stage of e-commerce logistics.

Financial details of the acquisition were not disclosed. The deal brings RIVR’s technology and team into Amazon’s growing robotics organization, where the company has spent years experimenting with new forms of delivery automation.

RIVR’s robots are designed to navigate real-world urban environments, including stairs, curbs, gates, and uneven terrain that often challenge traditional sidewalk delivery robots.

The acquisition reflects Amazon’s continued effort to rethink last-mile delivery, one of the most expensive and operationally difficult parts of the logistics chain.

From Research Lab to Last-Mile Robotics

RIVR traces its roots to research conducted at the Robotic Systems Lab at ETH Zurich, which developed advanced quadruped robots capable of navigating challenging environments.

The company originally emerged under the name Swiss-Mile before rebranding as RIVR in early 2025. Its robots combine wheels and legs, allowing them to travel quickly on flat ground while maintaining the ability to climb stairs and step over obstacles.

The current system, known as RIVR TWO, carries packages in a cargo container mounted on top of the robot. The hybrid design allows the robot to move at speeds of up to roughly 15 kilometers per hour while maintaining flexibility in complex terrain.

Unlike many delivery robots that operate primarily on sidewalks or smooth surfaces, RIVR’s approach aims to solve the “last few meters” problem in delivery logistics, where stairs, narrow pathways, and gated entrances often prevent autonomous systems from reaching a customer’s door.

The company previously tested its robots in real-world delivery programs. In 2025, RIVR worked with parcel delivery platform Veho to pilot operations in Austin, Texas. It also partnered with food delivery platform Just Eat Takeaway.com to introduce the robots in European cities, beginning with deployments in Zurich.

Amazon’s Continuing Delivery Robotics Push

The acquisition builds on Amazon’s long-running investment in robotics across its logistics network.

Inside warehouses, the company operates hundreds of thousands of mobile robots that assist with inventory movement and order fulfillment. However, automating last-mile delivery has proven significantly more difficult.

Amazon previously tested its own six-wheeled delivery robot, Scout, in several U.S. cities before discontinuing the program in 2022 after determining the system did not meet customer expectations.

RIVR’s approach may offer a different path. By combining legged mobility with wheels, the robots are designed to operate in environments that were previously inaccessible to small autonomous vehicles.

Amazon had already been tracking the company’s development for some time. In 2024, Jeff Bezos participated in a $22 million seed funding round through Bezos Expeditions, alongside investment from the Amazon Industrial Innovation Fund.

Physical AI Meets Real-World Logistics

The acquisition also highlights a broader shift in robotics development toward what many researchers call physical AI – systems that combine advanced machine learning with robots capable of interacting directly with the physical world.

RIVR’s founder and CEO Marko Bjelonic described doorstep delivery as a practical environment for developing such systems because it requires robots to operate in unpredictable outdoor settings filled with obstacles, varying terrain, and human activity.

If Amazon succeeds in scaling such robots, the technology could help address rising delivery volumes while reducing the cost and complexity of last-mile logistics.

For now, however, the acquisition primarily signals continued experimentation. As with many robotics initiatives at Amazon, the path from prototype to large-scale deployment may take years.

Still, the move suggests that the company sees legged robots as a promising candidate for solving one of e-commerce’s most persistent challenges: getting packages all the way to the front door.

Boston Dynamics Expands NVIDIA Partnership to Power Next-Generation Atlas Humanoid

Boston Dynamics is deepening its collaboration with NVIDIA to accelerate AI development for its Atlas humanoid robot. The partnership integrates NVIDIA’s Jetson Thor computing platform and Isaac robotics frameworks into the company’s next-generation systems.

Boston Dynamics is strengthening its partnership with NVIDIA as the robotics company works to advance artificial intelligence capabilities for its humanoid robot platform.

The collaboration centers on integrating NVIDIA’s Jetson Thor computing platform and robotics development tools into the next generation of Boston Dynamics’ Atlas humanoid. The companies say the effort aims to accelerate the development of complex AI systems capable of enabling robots to operate more autonomously in real-world environments.

The announcement highlights a broader shift underway in robotics development, where advances in computing hardware and simulation are becoming central to building more capable physical AI systems.

Boston Dynamics, long known for its highly dynamic robots, is increasingly pairing its expertise in mobility and mechanical design with large-scale AI training frameworks and high-performance edge computing.

A New Computing Backbone for Atlas

At the center of the collaboration is the adoption of NVIDIA’s Jetson Thor robotics computing platform.

The compact system is designed to run advanced AI models directly on robots, enabling real-time perception, decision-making, and control. For Atlas, the additional computing power allows developers to integrate multimodal AI models that combine visual perception, environmental awareness, and motion control.

These models work alongside Boston Dynamics’ existing whole-body control systems and manipulation software, which coordinate the robot’s movements across its limbs and joints.

According to Aaron Saunders, chief technology officer at Boston Dynamics, the integration of high-performance computing is essential for the next phase of humanoid robotics.

The current electric version of Atlas is designed as a research and development platform for exploring complex physical tasks. With the addition of more powerful onboard AI computing, the robot can process more sophisticated models while maintaining real-time responsiveness.

Training Robots in Virtual Worlds

The collaboration also extends to the development of new AI skills using NVIDIA’s Isaac Lab framework.

Isaac Lab provides a simulation environment where robots can train policies for locomotion and dexterity using reinforcement learning. Built on NVIDIA’s Isaac Sim and Omniverse technologies, the platform allows engineers to run large numbers of experiments in physically accurate virtual environments.

Training in simulation has become a key strategy in robotics development. It enables robots to practice tasks thousands or millions of times without the risk of hardware damage, while also exposing AI systems to a wide range of scenarios that would be difficult to reproduce physically.

Boston Dynamics says the platform is already producing advances in learned dexterity and locomotion, areas that remain among the most challenging aspects of humanoid robotics.

By combining simulation training with real-world testing, developers aim to accelerate how quickly robots acquire new capabilities.

Expanding AI Across Boston Dynamics’ Robot Fleet

While Atlas is the centerpiece of the collaboration, the company is also applying new AI capabilities across its broader robotics portfolio.

Boston Dynamics has introduced reinforcement learning tools and new AI models for Spot, its quadruped robot widely used for industrial inspections and safety applications. The company says these systems improve locomotion control and help the robot detect and avoid hazards during autonomous operation.

In parallel, the company continues developing Orbit, its cloud-based platform for managing fleets of robots and analyzing operational data.

Together, these systems reflect a larger trend in robotics: the convergence of advanced mobility hardware with increasingly powerful AI infrastructure.

As humanoid robots move from experimental prototypes toward potential commercial deployment, partnerships between robotics developers and AI computing companies are becoming more central to the industry’s progress.

For Boston Dynamics, the collaboration with NVIDIA underscores how the next phase of robotics development may depend not only on better robot bodies, but also on the computational platforms that allow those machines to learn and operate in the real world.

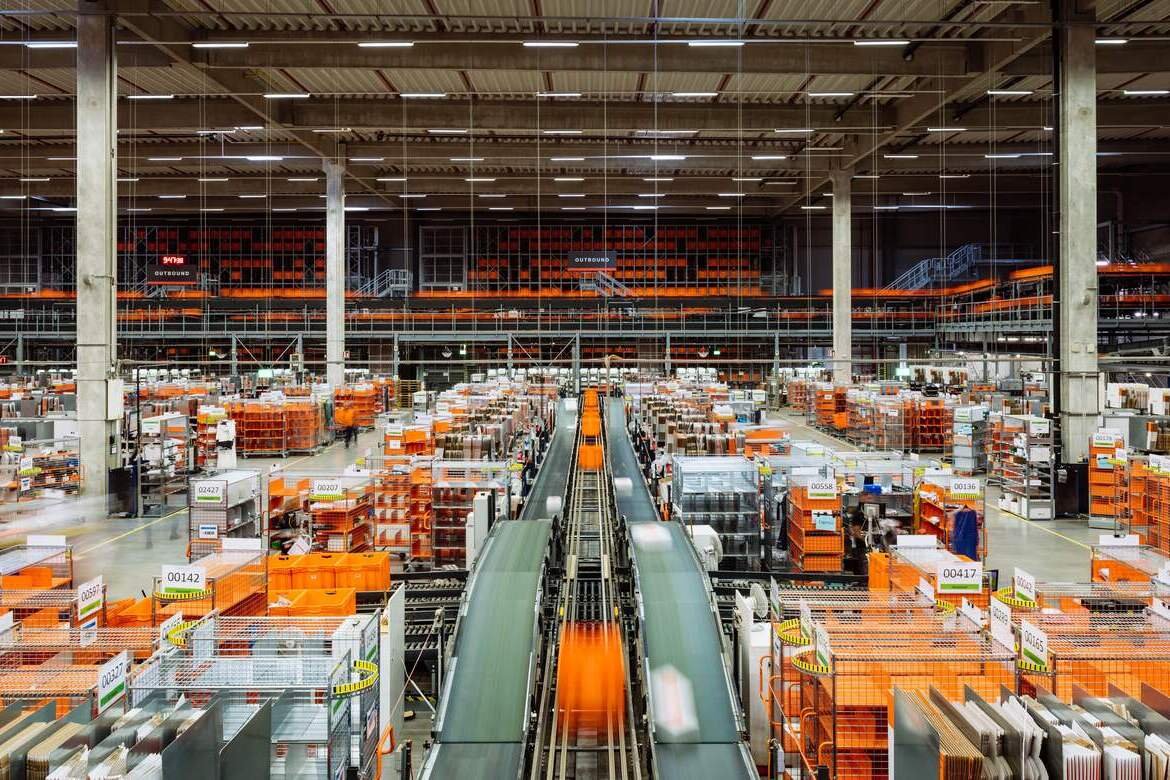

Zalando Expands Warehouse Automation with 50 AI-Powered Nomagic Robots

Zalando plans to deploy up to 50 AI-powered Nomagic robots across its European fulfilment centres, expanding automation for item picking and tackling one of fashion logistics’ toughest challenges: handling shoeboxes.

European fashion retailer Zalando is expanding its use of robotics as it seeks to automate some of the most complex processes inside its fulfilment centres.

The company announced plans to install up to 50 AI-powered robots developed by Polish robotics firm Nomagic across its logistics network in Europe. The deployment follows a pilot phase that demonstrated the robots’ ability to handle large volumes of item-level picking within the retailer’s highly dynamic inventory environment.

The rollout represents one of the largest robotics expansions in fashion logistics, an industry where automation has historically been more difficult than in sectors such as electronics or packaged goods.

Unlike uniform industrial items, fashion products vary widely in size, shape, and packaging. Zalando’s warehouses process thousands of different items daily, creating a complex environment for robotic manipulation.

Solving Fashion Logistics’ “Shoebox Problem”

A key focus of the deployment is the automated handling of shoeboxes, long considered a difficult challenge for warehouse robotics.

Shoeboxes are common items in fashion fulfilment centres, but their loose lids and varying orientations can cause issues for standard robotic grippers. When handled incorrectly, lids may detach, slowing automated workflows and requiring human intervention.

Nomagic’s system addresses this issue through a combination of AI-powered computer vision and specialized gripping hardware.

The robots analyze each item using vision algorithms that identify the product type and orientation before adjusting their grip strategy. Custom-designed grippers then secure the box from multiple sides, keeping the lid in place during movement through automated sorting systems.
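The detect-then-choose step can be sketched in a few lines of Python. This is an illustration only, not Nomagic’s software: the `Detection` fields, the product labels, and the grip strategy names are all hypothetical, chosen to show how a vision result might be mapped to a grip decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical output of the vision step."""
    product_type: str   # e.g. "shoebox" or "polybag" (illustrative labels)
    lid_side: str       # face the lid is on: "top", "side", or "none"


def choose_grip(detection: Detection) -> str:
    """Map a detection to an illustrative grip strategy name."""
    if detection.product_type == "shoebox":
        if detection.lid_side == "top":
            # Lid faces up: grip the sides and hold the lid down.
            return "clamp_sides_and_hold_lid"
        # Lid faces sideways: grip across the seam so it cannot slide off.
        return "clamp_across_lid_seam"
    # Anything that is not a shoebox falls back to a generic grip.
    return "default_suction"


if __name__ == "__main__":
    print(choose_grip(Detection("shoebox", "top")))
    print(choose_grip(Detection("polybag", "none")))
```

A production system would presumably work with richer signals such as pose estimates and confidence scores, but the structure is the same: classify first, then select the grip.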

This capability allows the robots to pick items, scan them, and place them directly into pocket sorting systems that route products toward packaging stations.

Scaling Robotics Across European Warehouses

The decision to expand the deployment follows a testing phase in which the robots achieved picking volumes of roughly 100,000 items per day.

Initial installations are already operating in fulfilment centres in Germany and Italy, with additional deployments planned for logistics hubs in the Netherlands, Sweden, and France. Zalando also plans to introduce the robots at its new fulfilment centre in Giessen, Germany, scheduled to ramp up operations later this year.

For Zalando, the goal is not to replace warehouse workers but to automate repetitive processes while allowing employees to focus on more complex tasks.

Marcus Daute, vice president of logistics network at Zalando, said the scale of the company’s operations requires automation systems that integrate closely with human workflows.

Robotics Moves Deeper into E-Commerce Logistics

The expansion highlights how robotics is becoming an increasingly central component of large-scale e-commerce infrastructure.

Online retailers face constant pressure to process orders faster while managing rising product variety and fluctuating demand. Automation systems capable of adapting to changing inventory conditions have become critical to maintaining operational efficiency.

Nomagic’s approach reflects a broader shift in warehouse robotics toward AI-driven systems that learn from real-world operations. As robots process more items, their models improve, allowing them to handle increasingly complex product assortments.

For the logistics industry, this shift marks a transition from rigid automation toward more adaptable robotic systems capable of operating in the highly variable environments typical of e-commerce warehouses.

If successful at scale, Zalando’s rollout could offer a model for how robotics and AI combine to tackle one of retail’s most difficult operational challenges: moving millions of unique products through fulfilment centres quickly and reliably.