Monthly Archives: March 2026

iRobot Expands Roomba Mini Launch to Europe and the U.K.

iRobot has introduced its compact Roomba Mini robot vacuum to Europe and the U.K., marking the company’s first product rollout since emerging from Chapter 11 restructuring earlier this year.

iRobot has begun rolling out its smallest robotic vacuum cleaner, the Roomba Mini, across Europe and the United Kingdom, marking the company’s first major product launch since emerging from bankruptcy earlier this year.

The compact robot, which combines vacuuming and mopping capabilities, was previously introduced in Japan and is now being positioned as a cleaning device designed specifically for smaller homes and apartments.

The expansion comes as iRobot attempts to regain momentum following a pre-packaged Chapter 11 restructuring completed in January and a change in ownership that placed the company under the control of its longtime manufacturing partner, Picea.

A Smaller Robot for Smaller Homes

The Roomba Mini is designed to address a practical challenge that has long affected robot vacuums: reaching tight spaces.

According to iRobot, the new model’s compact footprint allows it to navigate narrow corners and areas that are often inaccessible to standard-sized robotic vacuums or traditional upright cleaners.

The robot uses a lidar-based navigation system called ClearView, enabling it to map its surroundings, avoid obstacles, and detect rugs while operating in mopping mode.

Users can control the device through the Roomba Home mobile application, voice assistants, or directly through onboard controls. The system can also operate without a Wi-Fi connection, allowing basic cleaning functionality even when offline.

An AutoEmpty Dock collects debris into an AllergenLock bag capable of holding several months’ worth of dust and dirt, reducing the need for frequent maintenance.

Early Demand Signals in Japan

The Roomba Mini first launched in Japan in February, where iRobot reported strong early demand.

According to company representatives, the black version of the device sold out within the first week of availability.

While the robot was initially designed with compact Japanese homes in mind, iRobot executives say the same characteristics make it suitable for European living spaces, which often feature tighter layouts than homes in North America.

The robot is now available through iRobot’s European online store with a retail price of approximately €399 in the European Union and £379 in the United Kingdom.

A Strategic Moment for iRobot

The European launch arrives at a pivotal moment for the company.

iRobot, once the dominant name in robotic vacuum cleaners, has faced increasing competition in recent years from a growing number of consumer robotics companies offering lower-cost devices with advanced features.

The company’s financial challenges culminated in a bankruptcy restructuring earlier this year. As part of that process, iRobot was acquired by Picea, a firm that previously served as both a manufacturing partner and lender to the company.

Executives say the Roomba Mini was developed before the acquisition and that the ownership transition did not influence the product’s design or release timeline.

However, under Picea’s ownership, iRobot may benefit from expanded manufacturing capabilities and distribution networks across Asia and other global markets.

The Next Phase of Consumer Robotics

The launch of the Roomba Mini also reflects a broader shift within the consumer robotics market.

As robotic vacuum technology matures, manufacturers are increasingly focusing on specialized designs that address specific living environments rather than relying on a single universal product.

Smaller robots capable of navigating dense household layouts may become particularly relevant in urban markets where apartments dominate the housing landscape.

For iRobot, the success of such products could help determine whether the company can maintain its position in an increasingly crowded consumer robotics industry.

Poland’s First Humanoid Influencer Draws Brands and Millions of Views

A humanoid robot named Edward Warchocki has rapidly gained attention in Poland’s influencer market, attracting hundreds of thousands of social media followers and landing commercial brand partnerships.

A humanoid robot has entered Poland’s influencer economy, quickly drawing attention from brands, social media audiences, and technology observers.

The robot, named Edward Warchocki, has appeared on streets in Warsaw and Poznań interacting with passers-by while building a growing presence on social media platforms. Within roughly two weeks of its debut, the project amassed tens of thousands of followers on Instagram and more than 100,000 on TikTok, while videos featuring the robot reportedly generated hundreds of millions of views across platforms.

The experiment places a physical humanoid robot into a market previously dominated by human personalities and digital avatars.

For companies experimenting with new forms of marketing, the project represents an early test of how embodied AI could reshape influencer culture.

From Lab Experiment to Commercial Platform

Edward Warchocki began as a technology experiment initiated by entrepreneur Radosław Grzelaczyk and supported by artificial intelligence developer Bartosz Idzik, who created the system that powers the robot’s conversational behavior.

Unlike scripted promotional mascots, the robot is designed to interact dynamically with people in real-world environments.

According to the project’s creators, the system uses a combination of proprietary AI tools and existing technologies to generate responses during live interactions. The goal was to create a robot that could adapt to conversations rather than simply repeat preprogrammed lines.

Public reactions have ranged from curiosity to enthusiasm. Videos show people approaching the robot on city streets to shake hands, ask questions, and record short interactions for social media.

These spontaneous encounters have become a key part of the robot’s appeal.

Brands Experiment with a New Type of Influencer

The project has already begun attracting commercial interest.

Edward’s first advertising collaboration reportedly involved promoting a luxury watch valued at approximately 80,000 złoty, marking a symbolic entry into the influencer marketing industry.

For brands, robots introduce a different set of characteristics than human creators.

A humanoid influencer does not get caught up in personal scandals, need time off, or deviate from a brand’s messaging strategy. The creators of the project argue that this level of control makes robots attractive marketing ambassadors for companies seeking predictable campaigns.

Some analysts also point to engagement metrics as a key factor. Early data from projects involving robotic or virtual influencers suggests that novelty and public curiosity can generate unusually high engagement rates compared with traditional creators.

Physical Presence in a Digital Industry

The rise of virtual influencers is not new. Computer-generated personalities such as Lil Miquela in the United States or Rozy in South Korea have already built large audiences and signed partnerships with major global brands.

However, those figures exist entirely in digital form.

Edward represents a different model: a physical robot capable of interacting with people face to face.

This physical presence creates a type of engagement that purely digital characters cannot replicate. Passers-by can approach the robot, speak with it, and record the interaction in real time.

In practice, the robot becomes both an influencer and a live attraction.

For social media creators and marketers, such encounters provide content that can spread quickly across platforms.

A New Category of Embodied Media

The project reflects a broader trend in robotics where machines are increasingly designed to operate in social environments rather than purely industrial settings.

Humanoid robots have traditionally been developed for research or automation applications. But advances in conversational AI and robotics hardware are opening the possibility of robots that function as public-facing personalities.

If the experiment succeeds commercially, it could mark the emergence of a new category within influencer marketing: embodied influencers.

Analysts estimate that the global virtual influencer market could reach nearly $16 billion by 2026. Robots capable of appearing in both online content and physical events may represent the next stage of that market’s evolution.

For now, Edward Warchocki remains an experiment.

But its rapid rise suggests that the intersection of robotics, artificial intelligence, and digital media may be creating a new kind of celebrity – one built from code, hardware, and algorithms rather than human charisma.

Tesla Teases More Human-Like Hands for Next-Generation Optimus Robot

Tesla has teased a new generation of humanoid robot hands for Optimus, suggesting the company is focusing on improved dexterity as it develops its next iteration of the humanoid platform.

Tesla has offered a new glimpse into the next stage of development for its humanoid robot Optimus, teasing a redesigned set of robotic hands that appear significantly more human-like than previous prototypes.

The teaser image, shared by Tesla’s AI team on Chinese social media platform Weibo, shows a pair of robotic hands with finger proportions and articulation that closely resemble those of a human hand. The image quickly circulated across the robotics community, fueling speculation that the company is preparing a new iteration of the robot’s manipulation system.

Although Tesla has not released technical specifications, the design suggests the company is focusing heavily on improving dexterity – widely considered one of the most difficult challenges in humanoid robotics.

Why Robotic Hands Matter

In robotics research, the ability to manipulate objects with human-level precision remains a major technical hurdle.

Industrial robots have long been capable of gripping and moving objects, but most rely on specialized end-effectors designed for specific tasks. Humanoid robots, by contrast, must interact with a wide range of tools, devices, and environments originally designed for human hands.

This requirement makes hand design one of the most complex engineering problems in humanoid robotics. Achieving fine motor control requires a combination of compact actuators, high-resolution sensors, and sophisticated control software capable of coordinating dozens of joints simultaneously.

If Optimus is intended to perform tasks in factories, warehouses, or eventually homes, improved hand dexterity will likely be essential.

The teaser image suggests Tesla may be moving toward a more anatomically inspired design, potentially enabling the robot to handle objects with greater precision.

A Key Step Toward Tesla’s Robotics Ambitions

Elon Musk has repeatedly described Optimus as one of Tesla’s most important long-term initiatives, potentially exceeding the impact of the company’s electric vehicles.

Musk has suggested that humanoid robots could eventually perform a wide range of tasks across industries, from manufacturing and logistics to household assistance.

In public comments, he has also framed the project in more ambitious terms, describing Optimus as a potential “Von Neumann machine” – a theoretical self-replicating system capable of building copies of itself using available materials.

While such concepts remain far from practical reality, they reflect the scale of Musk’s long-term vision for the project.

For now, however, Tesla’s progress will likely depend on solving more immediate engineering problems.

Among those, robotic hands remain one of the most critical components determining whether humanoid robots can move beyond demonstrations and into real-world work environments.

The new teaser suggests Tesla is continuing to refine that capability as it works toward the next generation of its Optimus platform.

Elon Musk Confirms Tesla and xAI Collaboration on Digital Optimus AI

Elon Musk has confirmed that Tesla and xAI are jointly developing a system called Digital Optimus, combining Tesla hardware with xAI’s Grok model to power real-time AI agents.

Elon Musk has confirmed that Tesla and artificial intelligence startup xAI are jointly developing a system known as Digital Optimus, a project designed to combine Tesla’s AI hardware with xAI’s reasoning models.

The announcement introduces a new layer of collaboration between Musk’s companies, linking Tesla’s robotics and hardware capabilities with xAI’s Grok large language model. Musk described the system as a hybrid architecture in which Tesla’s component processes real-time inputs while Grok handles higher-level reasoning and planning.

Macrohard or Digital Optimus is a joint xAI-Tesla project, coming as part of Tesla’s investment agreement with xAI.

Grok is the master conductor/navigator with deep understanding of the world to direct digital Optimus, which is processing and actioning the past 5 secs of…

— Elon Musk (@elonmusk) March 11, 2026

The project is tied to Tesla’s recent $2 billion investment in xAI and could play a role in the development of advanced AI agents capable of controlling software systems or potentially robotics platforms such as Tesla’s Optimus humanoid robot.

However, the partnership also raises questions about the evolving relationship between Tesla and xAI, particularly as Musk faces an ongoing shareholder lawsuit over the creation of the AI company.

A Dual-System AI Architecture

According to Musk, Digital Optimus is designed around a two-layer architecture inspired by psychologist Daniel Kahneman’s theory of dual cognitive systems.

Tesla’s component functions as a fast-response layer that processes real-time screen input and recent user interactions such as keyboard or mouse actions. This layer operates on Tesla’s AI4 inference hardware, a chip the company has developed for running machine learning systems locally.

Above that layer, xAI’s Grok model provides higher-level reasoning and planning capabilities. Musk described Grok as the system’s “master conductor”, responsible for interpreting context and guiding decision-making.

The architecture is intended to combine rapid reaction with deeper reasoning, allowing AI agents to interact with complex computer environments in real time.
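The split between a fast reactive layer and a slower reasoning layer can be illustrated with a minimal Python sketch. This is not Tesla or xAI code: the class names, the planning rule, and the replanning interval are all hypothetical, and the five-second window is borrowed only from the "past 5 secs" wording in Musk's post.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Event:
    t: float    # timestamp in seconds
    kind: str   # illustrative labels such as "screen", "key", "mouse"
    data: str


class FastLayer:
    """Reactive layer: keeps only a short rolling window of recent input."""

    def __init__(self, window_s: float = 5.0):
        self.window_s = window_s
        self.events: deque[Event] = deque()

    def observe(self, event: Event) -> None:
        self.events.append(event)
        # Drop anything older than the window, measured from the newest event.
        while self.events and event.t - self.events[0].t > self.window_s:
            self.events.popleft()

    def act(self, goal: str) -> str:
        latest = self.events[-1].data if self.events else "nothing"
        return f"{goal}: react to {latest}"


class SlowPlanner:
    """Deliberative layer: a stand-in for a large reasoning model."""

    def plan(self, summary: list[str]) -> str:
        # Hypothetical rule; a real planner would query a model here.
        return "fill form" if "form" in summary else "explore"


def run(events: list[Event], replan_every: int = 3) -> list[str]:
    fast, slow = FastLayer(), SlowPlanner()
    goal, actions = "explore", []
    for i, ev in enumerate(events):
        fast.observe(ev)
        if i % replan_every == 0:  # the planner runs less often than the fast loop
            goal = slow.plan([e.data for e in fast.events])
        actions.append(fast.act(goal))
    return actions
```

The point of the design is that the fast loop reacts to every input while the planner only updates the goal occasionally, so a slow reasoning step never blocks a quick reaction.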

Musk suggested such systems could eventually operate software workflows or coordinate large-scale digital operations, though the technology remains at an early stage.

A Shift in Tesla’s AI Narrative

The collaboration represents a notable shift in how Musk describes the relationship between Tesla and xAI.

In 2024, Musk repeatedly stated that Tesla did not need to license technology from xAI, arguing that Tesla’s real-world AI systems were far more extensive than language models.

Those statements came shortly after Tesla shareholders filed a lawsuit accusing Musk of breaching fiduciary duties by founding xAI as a separate company while Tesla was heavily investing in artificial intelligence.

At the time, Musk argued that the two companies served fundamentally different purposes.

The Digital Optimus announcement suggests the technologies are more closely connected than previously described.

By integrating Grok into Tesla-developed hardware systems, Musk is now presenting the two companies as collaborators rather than independent efforts.

Legal and Strategic Implications

The announcement arrives amid an ongoing legal dispute involving Tesla shareholders.

A lawsuit filed in Delaware Chancery Court by the Cleveland Bakers and Teamsters Pension Fund alleges that Musk diverted AI talent, computing resources, and strategic focus from Tesla to xAI, a company he founded outside the automaker.

Plaintiffs argue that AI capabilities being developed at xAI should have been built within Tesla itself.

Recent corporate developments have further complicated the situation.

Tesla disclosed earlier this year that it invested $2 billion in xAI’s funding round, while SpaceX later acquired xAI in an all-stock transaction that valued the combined entity at roughly $1.25 trillion ahead of a potential public offering.

These moves have created a complex network of financial ties between Musk’s companies while leaving key AI technologies housed outside Tesla.

The confirmation that Tesla hardware will rely on xAI’s models for Digital Optimus could become a central point in the legal debate.

What Digital Optimus Could Mean for Robotics

Although Musk described the system primarily as an AI agent capable of controlling computer systems, the project may also have implications for Tesla’s robotics ambitions.

Tesla has positioned itself as a leader in real-world artificial intelligence through products such as its autonomous driving software and the Optimus humanoid robot.

If Digital Optimus becomes the reasoning layer behind these systems, it would effectively place xAI’s models at the center of Tesla’s robotics strategy.

That possibility highlights a broader trend in the robotics industry: the increasing integration of large-scale AI models with physical machines.

Robotics developers are exploring architectures where perception and control systems operate locally on hardware while cloud-based models handle reasoning and long-term planning.

Whether Digital Optimus evolves into such a system remains uncertain. But Musk’s confirmation that Tesla and xAI are collaborating on the project signals that the future of Tesla’s AI stack may be more closely tied to xAI than previously acknowledged.

Amazon to Build AU$750 Million Robotics Fulfillment Center in Australia

Amazon plans to invest AU$750 million in a new robotics-enabled fulfillment center in Brisbane, Australia, designed to process more than 125 million packages annually with the help of automated systems.

Amazon is expanding its robotics-driven logistics network with plans to build a new AU$750 million automated fulfillment center in Brisbane, Australia, a project that highlights the growing role of robotics in large-scale e-commerce operations.

The facility, scheduled for completion in 2028, will span approximately 150,000 square meters across four levels, making it one of the largest warehouses ever constructed in Queensland. Once fully operational, the center is expected to process more than 125 million packages annually.

The investment reflects Amazon’s continued push to integrate robotics and artificial intelligence into warehouse operations as global e-commerce demand continues to grow.

A New Generation of Robotics Warehouses

Amazon’s newest Australian facility will rely heavily on robotic systems designed to assist human workers in sorting, moving, and preparing products for shipment.

Inside the warehouse, autonomous robots will transport shelving units filled with products across the floor to ergonomic workstations where employees pick items for customer orders.

One of the core systems used in Amazon’s robotics fulfillment centers is a robot known as Hercules. These mobile robots move storage pods weighing up to 500 kilograms, eliminating the need for employees to walk long distances across warehouse floors or manually lift heavy shelves.

The robots navigate the warehouse using onboard sensors and cameras while coordinating with warehouse management systems to route inventory efficiently.

To improve safety, employees working near robotic equipment wear wireless transmitters known as Tech Vests. These devices allow robots to detect nearby workers and adjust their movement accordingly.

AI and Robotics in Order Processing

In addition to mobile robots, Amazon uses robotic arms to assist with item sorting.

A system called Sparrow uses computer vision and artificial intelligence to identify products and place them into containers that move along the fulfillment line.

The robot analyzes visual information to determine the correct item to pick and place, helping workers group items together for customer orders before they are packaged for delivery.

Such systems illustrate how robotics and AI are increasingly integrated into warehouse workflows rather than operating as standalone machines.

Human workers still perform tasks requiring judgment, quality control, and exception handling, while robots handle repetitive physical operations.

Scaling Logistics for E-Commerce

The Brisbane facility will have the capacity to store as many as 15 million small items ranging from electronics and beauty products to household goods and toys.

In addition to Amazon’s own retail operations, the warehouse will also process products sold by small and medium-sized Australian businesses using the company’s online marketplace.

When operating at full capacity, the facility’s throughput of more than 125 million packages per year would make it one of the largest logistics hubs in the region.
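To put the annual figure in perspective, the short calculation below converts it into daily and per-second rates. It is a rough sketch only: it assumes the facility runs every day of the year around the clock, which Amazon has not stated, and it treats "more than 125 million" as exactly 125 million.

```python
PACKAGES_PER_YEAR = 125_000_000  # lower bound quoted in the article
DAYS_PER_YEAR = 365              # assumption: the site ships every day
HOURS_PER_DAY = 24               # assumption: round-the-clock operation

per_day = PACKAGES_PER_YEAR / DAYS_PER_YEAR
per_hour = per_day / HOURS_PER_DAY
per_second = per_hour / 3600

print(f"{per_day:,.0f} packages per day")
print(f"{per_hour:,.0f} packages per hour")
print(f"{per_second:.2f} packages per second")
```

Under those assumptions the facility would handle roughly 342,000 packages a day, or about four every second.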

Amazon said the project will create more than 1,000 permanent jobs once the facility opens, while the construction phase is expected to generate roughly 2,000 additional roles.

Robotics as Infrastructure

The project highlights how warehouse robotics has become a core part of Amazon’s logistics infrastructure rather than a limited automation experiment.

Over the past decade, the company has deployed hundreds of thousands of robots across its fulfillment network worldwide. These machines support a variety of tasks including inventory transport, picking, packing, and sorting.

By combining automation with human labor, companies aim to increase warehouse efficiency while reducing physically demanding work for employees.

Amazon’s Australian expansion reflects the broader trend of e-commerce companies investing heavily in automated logistics systems to manage growing volumes of online orders.

What This Signals for Industrial Robotics

Large-scale fulfillment centers are emerging as one of the most significant real-world deployments of robotics and AI technologies.

Unlike research prototypes or pilot projects, warehouse robots operate continuously in commercial environments that require reliability, safety, and integration with complex supply chains.

As companies continue building automated logistics hubs around the world, the technologies developed for warehouse robotics are likely to influence other industries where large-scale automation is needed.

For robotics developers, the fulfillment center has become one of the most important proving grounds for real-world automation.

RMIT Engineers Build Dolphin-Inspired Robot for Oil Spill Cleanup

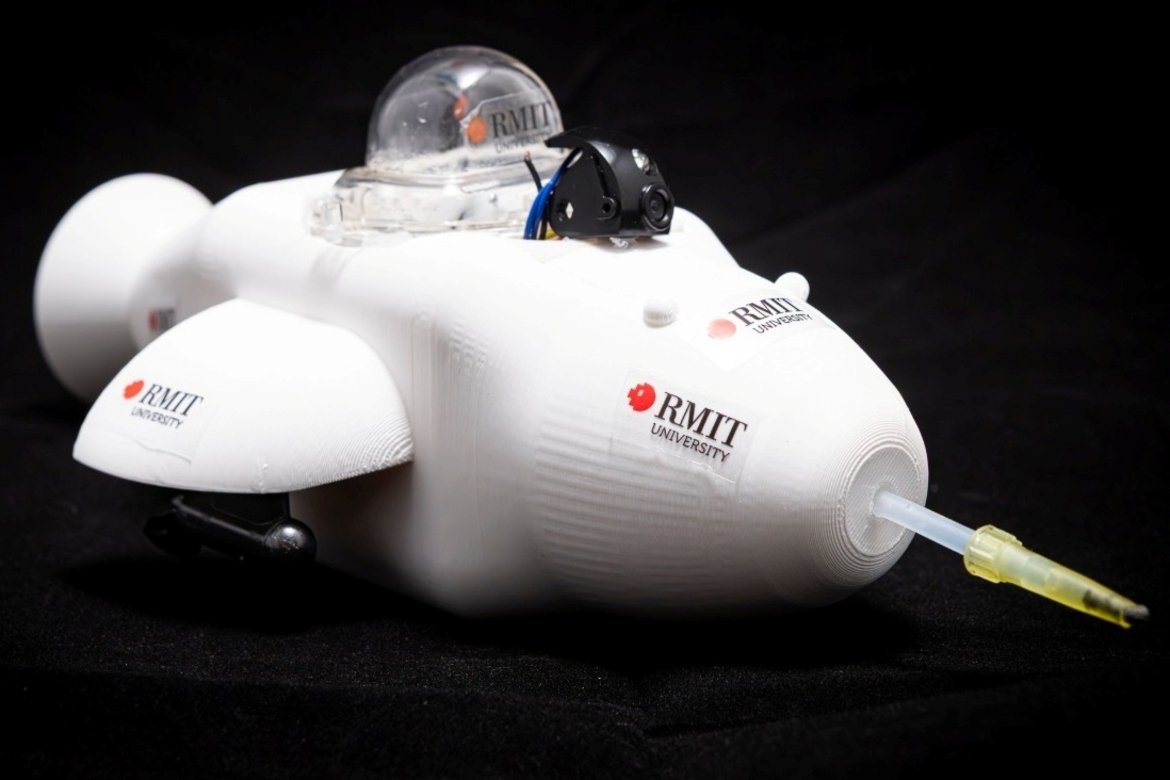

Engineers at RMIT University have developed a sneaker-sized robotic device designed to collect oil from water surfaces using a bio-inspired filter, offering a new approach to targeted oil spill response.

Engineers at RMIT University in Australia have developed a small robotic system designed to help collect oil spills from the surface of water, offering a potentially safer and more targeted method for responding to environmental disasters.

The device, known as the Electronic Dolphin, is a remote-controlled robot roughly the size of a sneaker that can skim oil from water using a specially engineered filter inspired by the structure of sea urchins.

Researchers say the system could eventually form part of robotic cleanup fleets capable of responding quickly to spills in sensitive marine environments where traditional cleanup methods can be slow, expensive, or hazardous.

A Small Robot Designed for a Big Environmental Problem

Oil spills remain one of the most damaging forms of marine pollution, affecting coastlines, wildlife, and fisheries while often requiring complex and costly cleanup operations.

Traditional response strategies rely heavily on manual labor, chemical dispersants, or large mechanical systems that may struggle to reach confined or environmentally sensitive areas.

The Electronic Dolphin project explores whether small robotic platforms could assist with early-stage spill response by collecting oil directly from the water’s surface.

The robot features a pump-driven system that pulls contaminated water across a specialized filter located at the front of the device. Oil is captured and stored in an onboard chamber while water is repelled by the filter surface.

Lead researcher Ataur Rahman said the concept demonstrates how compact robotic platforms could help responders operate in areas where direct human access may be difficult or unsafe.

A Bio-Inspired Filtering System

At the heart of the robot is a filtration material developed by the RMIT research team.

The filter uses a surface coating engineered with microscopic structures resembling the spines of sea urchins. These tiny protrusions trap pockets of air, creating a surface that repels water while allowing oil to adhere.

This structure enables the filter to absorb oil selectively without becoming saturated with water.

Because the material is lightweight and reusable, researchers believe it could be deployed repeatedly in environmental cleanup operations.

During laboratory testing, the robot recovered oil at a rate of roughly two milliliters per minute with more than 95 percent purity, demonstrating the feasibility of the concept at small scale.

Toward Autonomous Cleanup Systems

The current Electronic Dolphin prototype operates through remote control and can run for approximately 15 minutes on its onboard battery.
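Combining the reported recovery rate with the battery life gives a sense of how much the prototype could collect on one charge. The sketch below is a back-of-the-envelope estimate, not a result from the researchers: it assumes the pump sustains the laboratory rate for the full 15 minutes, and the one-litre target is an arbitrary illustration.

```python
import math

RATE_ML_PER_MIN = 2.0   # lab recovery rate reported in the article
RUNTIME_MIN = 15.0      # approximate battery life of the prototype
PURITY = 0.95           # "more than 95 percent", used here as a lower bound


def recovered_per_charge_ml(rate=RATE_ML_PER_MIN, runtime=RUNTIME_MIN):
    """Liquid collected on one charge, assuming the pump runs throughout."""
    return rate * runtime


def charges_needed(target_ml, per_charge_ml):
    """Whole battery charges needed to collect at least target_ml."""
    return math.ceil(target_ml / per_charge_ml)


per_charge = recovered_per_charge_ml()                # 30 mL of recovered liquid
oil_only = per_charge * PURITY                        # about 28.5 mL of that is oil
charges_for_litre = charges_needed(1000, per_charge)  # 34 charges for one litre
```

At this scale a single prototype would need dozens of charges to recover a litre, which is why the team talks about larger pumps and multiple robots.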

The research team envisions future versions with larger pumps, increased storage capacity, and longer operating times.

In a scaled system, multiple robots could operate cooperatively to collect oil from affected areas. After filling their storage tanks, the robots could potentially return to a base station to empty collected oil, recharge, and redeploy.
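The collect, return, and redeploy cycle described above can be pictured as a small state machine. The following Python sketch is purely illustrative: the state names, the sensor flags, and the idea of a single combined servicing step are assumptions made for the example, not part of the RMIT design.

```python
from enum import Enum, auto


class State(Enum):
    COLLECTING = auto()  # skimming oil in the spill area
    RETURNING = auto()   # heading back to the base station
    SERVICING = auto()   # emptying the tank and recharging


def next_state(state, tank_full=False, battery_low=False,
               at_base=False, serviced=False):
    """Return the robot's next state given hypothetical sensor flags."""
    if state is State.COLLECTING and (tank_full or battery_low):
        return State.RETURNING
    if state is State.RETURNING and at_base:
        return State.SERVICING
    if state is State.SERVICING and serviced:
        return State.COLLECTING  # redeploy
    return state  # otherwise keep doing the current job
```

A fleet controller could run one such machine per robot, which keeps each unit's logic simple while the base station handles scheduling.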

Such systems could form semi-autonomous cleanup networks capable of responding quickly after an oil spill occurs.

Scaling the Technology

Before the technology can be deployed in real-world environments, researchers plan to conduct further testing to evaluate durability and long-term performance in marine conditions.

Future development may include expanding the filter surface area across larger robotic platforms, which would increase collection capacity.

The team is also exploring partnerships with industry organizations and environmental agencies to evaluate practical applications for the technology.

What This Signals for Environmental Robotics

The Electronic Dolphin project reflects a growing interest in robotics for environmental monitoring and remediation.

Small autonomous or remotely operated robots are increasingly being explored for tasks such as water quality monitoring, marine wildlife observation, and pollution cleanup.

Compared with large cleanup vessels or manual operations, fleets of smaller robots could potentially offer more flexible and localized responses to environmental hazards.

While still at an early research stage, the Electronic Dolphin demonstrates how advances in materials science, robotics, and bio-inspired engineering could combine to create new tools for addressing environmental challenges.

Researchers Build Centaur-Style Robot to Help Humans Carry Loads

Engineers in China have developed a centaur-inspired robotic system that walks behind a human and carries heavy loads, offering an alternative approach to wearable exoskeletons and autonomous cargo robots.

Robotics engineers in China have developed an unusual hybrid system designed to help humans carry heavy loads – by turning the user into something resembling a mechanical centaur.

The experimental robot, created by researchers at the Southern University of Science and Technology in Shenzhen, attaches behind a human operator and walks on two robotic legs while supporting the weight of a backpack-sized payload.

Instead of fully autonomous navigation or wearable exoskeleton assistance, the system combines a human’s natural mobility with robotic load-bearing capability.

The approach highlights a growing trend in robotics research focused on hybrid human-machine systems that augment human movement rather than replace it.

A Different Approach to Load Carrying

Many robotics projects aimed at reducing human physical strain fall into two broad categories: wearable exoskeletons that strengthen the body or autonomous robots that transport equipment independently.

The centaur-inspired design explores a third option.

In demonstrations published alongside the research, a human operator walks across campus while a two-legged robotic unit follows directly behind, attached through a support frame that carries the load.

The robot synchronizes its movement with the person’s walking speed and direction while maintaining balance over uneven terrain.

When the wearer climbs stairs, the robot’s legged design allows it to follow naturally, a scenario that would be more difficult for wheeled cargo devices.

According to the researchers, the system can adapt to variations in human walking patterns and terrain while collaborating closely with the user.

Reducing the Physical Cost of Carrying Weight

In testing, researchers evaluated how the robot affected the physical effort required to transport heavy loads.

Researchers compared walking with the system against carrying a conventional backpack weighing roughly 20 kilograms (about 44 pounds).

Measurements indicated that the robotic system reduced the metabolic cost of carrying the load by redistributing weight through the mechanical support structure.

In other words, the robot absorbs much of the physical burden normally placed on the wearer’s body.

The system can also generate small forward forces through the human-robot interface, potentially providing propulsion assistance while walking.

This capability effectively allows the robot to contribute energy to the user’s movement rather than acting purely as passive cargo support.

Why Not Use Autonomous Robots?

The researchers argue that their hybrid design addresses several limitations faced by fully autonomous load-carrying robots.

Autonomous systems such as robotic quadrupeds must independently navigate complex environments, which can require detailed mapping and significant computing power.

Battery limitations also restrict how much weight those robots can carry and how long they can operate.

By combining robotic load support with human navigation, the centaur system eliminates the need for autonomous route planning while maintaining mobility across complex terrain.

The human operator provides the decision-making and navigation capability, while the robot provides physical strength.

Mixed Reactions to the Design

Despite its technical novelty, the system has sparked debate among observers about whether the approach offers practical advantages over simpler solutions.

Some critics have pointed out that wheeled carts or existing load-bearing devices could achieve similar results with fewer mechanical components.

Others have raised safety concerns about how the robot might behave if a user were to stumble while carrying heavy loads.

Such skepticism is not unusual for experimental robotics concepts, many of which are designed primarily to explore new design principles rather than produce immediate commercial products.

What Hybrid Human-Robot Systems Could Enable

The centaur robot reflects a broader category of research exploring closer integration between humans and machines.

Rather than replacing human labor entirely, these systems aim to augment physical capabilities while preserving human mobility, decision-making, and adaptability.

Possible future applications include disaster response, military logistics, field research, and industrial work where humans must carry heavy equipment across difficult terrain.

As robotics researchers continue experimenting with new human-machine interfaces, hybrid systems like the centaur robot may represent one path toward extending human capabilities in physically demanding environments.

Northwestern Engineers Build Self-Reconfiguring Modular Robots

Researchers at Northwestern University have developed modular “legged metamachines” that can flip, jump, and continue operating even after being split into pieces, offering a new approach to resilient robotics.

Engineers at Northwestern University have developed a new type of modular robot capable of continuing to move and operate even after being physically separated into pieces, a design approach that could change how robots are built for unpredictable environments.

The machines, described by researchers as “legged metamachines”, are composed of self-contained robotic modules that can connect, detach, and reorganize themselves while maintaining mobility. Each module includes its own electronics, power source, and motor, allowing it to function as an independent robotic unit.

When combined into larger structures, the modules behave like limbs of a larger robot, capable of performing complex movements such as jumping, flipping, and traversing uneven terrain.

The project explores how robotic systems might achieve a level of resilience that traditional designs lack.

Robots Built from Autonomous Modules

Unlike conventional robots built around a single centralized body, metamachines are constructed from multiple independent units that snap together like building blocks.

Each module resembles a small mechanical limb composed of two elongated segments connected through a central spherical joint. Inside that spherical core are the essential components required for operation, including circuitry, battery power, and a motor.

Individually, these modules are capable of rolling or jumping across the ground. But when combined into multi-limbed configurations, they form coordinated robotic structures capable of far more complex movement.

Researchers describe this architecture as similar to a robot made from smaller robots, where each piece contributes to the overall motion while retaining its own sensing and control systems.

AI Designed the Robot’s Body

The unusual appearance and motion of the metamachines emerged from an AI-driven design process rather than conventional engineering.

The research team used an evolutionary algorithm that simulated a process similar to natural selection. Digital robot designs were generated, tested in simulation, and iteratively modified through virtual “mutations” until high-performing configurations emerged.
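The generate, test, and mutate loop described above can be sketched in a few lines of Python. This is a minimal illustration of the general technique, not the Northwestern team's code: the design encoding (a list of joint angles), the toy `simulate` fitness function, and all parameter values are assumptions made for the example, and a real system would score designs in a physics simulator.

```python
import random

def random_design(n_modules, rng):
    # Hypothetical encoding: one joint angle (in degrees) per module.
    return [rng.uniform(-90.0, 90.0) for _ in range(n_modules)]

def simulate(design):
    # Stand-in for a physics simulation (an assumption for this sketch).
    # Toy objective: designs score higher as their angles approach 30 degrees.
    return -sum((angle - 30.0) ** 2 for angle in design)

def mutate(design, rng, scale=5.0):
    # A virtual "mutation": nudge every angle by a small random amount.
    return [angle + rng.gauss(0.0, scale) for angle in design]

def evolve(n_modules=4, population=20, generations=50, seed=0):
    rng = random.Random(seed)
    pop = [random_design(n_modules, rng) for _ in range(population)]
    for _ in range(generations):
        # Selection: rank by simulated fitness and keep the better half.
        pop.sort(key=simulate, reverse=True)
        survivors = pop[: population // 2]
        # Variation: refill the population with mutated copies of survivors.
        children = [
            mutate(rng.choice(survivors), rng)
            for _ in range(population - len(survivors))
        ]
        pop = survivors + children
    return max(pop, key=simulate)

best = evolve()
```

Because the better half of each generation is carried over unchanged, the best score in this sketch never gets worse from one generation to the next.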

Because the algorithm explored design possibilities unconstrained by traditional engineering intuition, it produced unusual structures whose movements resemble those of animals.

Some configurations move with motions similar to seals undulating across terrain, while others bound like small mammals or leap using spring-like dynamics.

According to the researchers, these AI-evolved designs allowed the robots to move effectively across a variety of surfaces.

Surviving Damage and Reassembling

Perhaps the most distinctive feature of the metamachines is their ability to continue functioning after severe physical damage.

In traditional robots, losing a limb or structural component often renders the entire system unusable. In modular metamachines, however, damage simply alters the configuration of the system.

If a component is severed, the remaining modules immediately adjust their movement pattern and continue traveling with fewer limbs.

Meanwhile, detached modules do not become inert debris. Each segment remains an autonomous robot capable of sensing its environment and moving independently.

Researchers observed detached modules crawling or rolling across the terrain, potentially allowing them to reconnect with the rest of the system.

The team described the result as a form of functional resilience that resembles biological organisms capable of regenerating or adapting after injury.

Testing Robots in the Real World

To validate the concept outside of simulation, the researchers built physical prototypes composed of three, four, and five modules.

The robots were tested outdoors across uneven terrain, including sand, soil, and forest floor environments.

During these experiments, the metamachines demonstrated the ability to flip themselves upright when overturned and to traverse obstacles without external control adjustments.

The experiments were designed to test whether AI-evolved designs developed in computer simulations could function effectively in real-world environments.

According to the researchers, the robots performed these movements immediately after assembly without requiring manual calibration.

What Modular Robots Could Enable

The research highlights a potential direction for robotics focused on adaptability and resilience rather than rigid precision.

Modular machines capable of self-repair and reconfiguration could be particularly useful in environments where human intervention is difficult or impossible.

Possible applications include planetary exploration, disaster response, and infrastructure inspection, where robots may encounter unpredictable terrain or physical damage.

By distributing intelligence and mobility across many small units rather than relying on a single central structure, such systems may continue operating even when individual components fail.

As robotics researchers continue exploring new approaches to embodied intelligence, designs inspired by biological resilience may play an increasingly important role in machines intended to operate beyond controlled laboratory environments.

DJI Pays Researcher After Robot Vacuum Security Flaw Exposed

A software engineer who discovered a vulnerability allowing remote access to thousands of DJI robot vacuum cleaners has been awarded $30,000 after reporting the issue through the company’s security program.

A software engineer who set out to connect a robot vacuum cleaner to a gaming controller instead uncovered a security vulnerability that allowed access to thousands of connected devices around the world.

Sammy Azdoufal discovered that software used to control his DJI Romo robot vacuum could communicate with the company’s servers in a way that exposed remote access to approximately 7,000 devices. The flaw potentially allowed an outside user to control the robots and access their camera feeds.

After reporting the issue through DJI’s security program, the researcher received a $30,000 reward for identifying the vulnerability.

The episode highlights a growing challenge in robotics and smart home technology: ensuring that connected machines operating inside private spaces remain secure from unauthorized access.

A Discovery That Revealed Remote Access

Azdoufal originally attempted to integrate his DJI robot vacuum with a PlayStation 5 controller, experimenting with ways to control the device remotely.

During testing, he noticed that his custom control application was communicating directly with DJI’s cloud infrastructure. This interaction unexpectedly granted him visibility into a network of thousands of robot vacuums deployed globally.

According to the researcher, the system allowed remote interaction with the devices without requiring traditional hacking methods such as password cracking or server intrusion.

The vulnerability reportedly enabled several capabilities, including remotely operating the robots, accessing live camera feeds, and viewing the digital maps the devices create when scanning household environments.

Because robot vacuums typically map rooms and navigate through homes using onboard sensors and cameras, such access could potentially expose sensitive information about users’ living spaces.

Security Risks in Connected Home Robotics

The incident underscores the broader cybersecurity risks associated with consumer robotics and smart home devices.

Unlike traditional appliances, modern robot vacuums rely heavily on cloud connectivity to enable features such as remote control, mapping, software updates, and integration with mobile apps.

These capabilities create new attack surfaces where vulnerabilities can potentially expose both device control and user data.

In the case of the DJI Romo devices, the vulnerability allowed interaction with the robots through the company’s application infrastructure rather than requiring direct access to individual devices.

Although there is no evidence that the flaw was exploited maliciously, the ability to access cameras and mapping data raised concerns about privacy.

Company Response and Security Updates

DJI said it had already begun addressing related vulnerabilities before the researcher publicly described the issue. The company later confirmed that updates had been deployed to resolve the problem.

According to DJI, the fix was implemented through the DJI Home application infrastructure and did not require action from users.

The company also noted that two independent security researchers reported the vulnerability through its bug bounty program.

DJI said it found no evidence that customer data had been misused.

“Our customers place trust in our technology, and we do not take that lightly,” the company said in a statement outlining steps taken to strengthen the product’s security.

What This Signals for Consumer Robotics

As robots become more common in homes, offices, and industrial environments, cybersecurity is emerging as one of the central challenges facing the robotics industry.

Devices that move through physical spaces while collecting environmental data create a unique category of connected systems that combine robotics with cloud computing and artificial intelligence.

Researchers say this convergence increases the importance of secure software architectures and rapid vulnerability response programs.

Bug bounty initiatives, which reward researchers for reporting security flaws, have become one mechanism companies use to identify weaknesses before they can be exploited.

The DJI case illustrates how unexpected experimentation by independent developers can reveal vulnerabilities that might otherwise remain undetected.

As consumer robotics continues to expand, ensuring that machines operating inside private environments remain secure may become as critical as improving their navigation, autonomy, or intelligence.

Yann LeCun Raises $1 Billion for Common Sense AI and Robots

AI researcher Yann LeCun has raised more than $1 billion for a new startup developing AI systems and robots capable of reasoning about the physical world, challenging the dominance of large language models.

A new artificial intelligence startup founded by prominent AI researcher Yann LeCun has raised more than $1 billion to develop machines capable of reasoning about the physical world, signaling a growing push to move beyond today’s dominant large language model approach.

The company, called Advanced Machine Intelligence (AMI), is focused on building AI systems and robotic platforms designed around what researchers describe as “common sense” understanding – the ability to reason about objects, environments, and cause-and-effect relationships.

The funding round raised approximately $1.03 billion at a pre-money valuation of $3.5 billion and was co-led by several venture investors including Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions.
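For readers less familiar with the terminology, the short Python sketch below works through what those two figures imply under the standard definition that post-money valuation equals pre-money valuation plus the amount raised. The implied post-money value and investor stake are derived here for illustration only; they are not reported figures, and the calculation assumes a single priced round with no other adjustments.

```python
def post_money(pre_money, raised):
    # Standard definition: post-money = pre-money + new capital.
    return pre_money + raised

def new_investor_stake(pre_money, raised):
    # Fraction of the company held by the round's investors afterward.
    return raised / post_money(pre_money, raised)

pre = 3.5e9      # pre-money valuation, as reported
raised = 1.03e9  # approximate amount raised, as reported

post = post_money(pre, raised)
stake = new_investor_stake(pre, raised)

print(f"Implied post-money valuation: ${post / 1e9:.2f} billion")
print(f"Implied stake sold in the round: {stake:.1%}")
```

Under these assumptions, the round implies a post-money valuation of about $4.53 billion, with new investors holding a little under 23 percent.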

The investment highlights increasing interest in AI systems that go beyond language generation and move toward embodied intelligence capable of interacting with the real world.

Challenging the Dominance of Language Models

LeCun, who previously served as chief AI scientist at Meta and helped establish the company’s influential FAIR research lab, has long argued that current AI systems built primarily around predicting the next word in a sentence are fundamentally limited.

While large language models have made rapid progress in text generation and coding tasks, LeCun has said they lack deeper reasoning capabilities and an understanding of physical reality.

The new startup aims to develop alternative architectures based on so-called world models – systems designed to build internal representations of how the physical world works.

Such models could allow machines to plan actions, anticipate outcomes, and adapt to changing environments.

In interviews discussing the company’s vision, LeCun has suggested that these capabilities will be essential for robots and autonomous systems that must operate safely in complex environments.

From Software Intelligence to Physical Machines

Although AMI is developing AI software platforms, robotics is expected to be one of its long-term targets.

Machines that interact with the physical world must interpret sensory data, understand spatial relationships, and predict how objects will behave. These tasks require a type of reasoning that goes beyond text-based pattern recognition.

LeCun has argued that robots intended for everyday environments, such as homes or workplaces, will require a significant level of common sense to function reliably.

That includes understanding concepts such as gravity, object permanence, and the likely consequences of physical actions.

Domestic robots, for example, must recognize not only objects but also how those objects can be used or manipulated in different contexts.

Potential Applications Across Multiple Industries

The company’s technology is expected to target a broad range of industries where AI systems must interact with real-world environments.

Potential applications include manufacturing, automotive engineering, aerospace systems, biomedical research, and pharmaceutical development.

In addition to industrial uses, LeCun has indicated that consumer applications could emerge as well. One possibility under discussion is integrating the technology into wearable devices such as smart glasses.

LeCun said he has been in conversations with Meta about potential future applications of the technology in products like the company’s Ray-Ban smart glasses.

Such devices could eventually act as intelligent assistants capable of understanding physical surroundings rather than simply processing spoken commands.

A New Direction in AI Research

The launch of AMI reflects a growing debate within the AI community about the future direction of the field.

Large language models have dominated AI development in recent years, attracting massive investment and widespread adoption across industries. However, some researchers argue that these systems alone cannot produce broadly capable artificial intelligence.

LeCun has been one of the most prominent voices advocating alternative approaches based on learning world models and predictive representations of reality.

If successful, those methods could enable machines to reason more effectively about complex environments and ultimately control robots and other physical systems.

The $1 billion investment suggests that at least some investors are willing to back that alternative vision.

As robotics and embodied AI become central to the next phase of artificial intelligence development, systems capable of understanding and interacting with the physical world may become an increasingly important frontier for the industry.

XGSynBot Launches Z1 Robot Targeting Industrial Embodied AI

XGSynBot has introduced the Z1 wheeled humanoid robot designed for industrial environments, positioning the system as a flexible platform aimed at closing the gap between AI prototypes and real factory automation.

As robotics companies race to bring artificial intelligence into physical workplaces, one of the biggest challenges remains translating laboratory breakthroughs into machines capable of surviving real factory conditions.

XGSynBot, a robotics company focused on embodied AI systems, has introduced a new industrial robot designed specifically to address that gap. The company unveiled its Z1 wheeled humanoid robot during a dual-city launch event held in Silicon Valley and Beijing, positioning the platform as a system built for continuous operation in manufacturing environments rather than controlled demonstrations.

The launch comes at a time when manufacturers are experimenting with more flexible automation technologies, including humanoid robots, but many of those systems remain limited to pilot programs or research settings.

Designing Robots for Real Factories

According to XGSynBot, the Z1 robot was designed around the practical constraints of industrial environments, where automation systems must operate continuously while maintaining high levels of precision.

Unlike traditional industrial robots, which are typically configured for a single task, the Z1 is designed to switch between different types of work through a modular tool interface.

The robot features a quick-change system that allows end-effectors such as grippers, welding tools, or suction devices to be swapped in under six seconds. The approach is intended to allow a single robot to move between multiple workstations rather than being dedicated to a single repetitive operation.

The company also introduced a proprietary joint module architecture that integrates motors, sensors, and mechanical components into a single unit. By consolidating these elements, the system aims to reduce latency and signal interference while improving mechanical stability.

These design choices reflect a broader shift in industrial robotics toward more adaptable machines capable of operating in varied environments rather than highly specialized automation systems.

A Dual-System Architecture for Embodied AI

At the software level, the Z1 platform incorporates a dual-system architecture inspired by cognitive science models of human decision-making.

One system focuses on high-level reasoning, allowing the robot to interpret instructions and plan tasks using AI models. A second system operates at high frequency to manage real-time motor control and tactile feedback, ensuring stable movement and precise manipulation.

The combination allows the robot to interpret complex commands while maintaining the millisecond-level responsiveness required for industrial operations.
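The split between a slow reasoning layer and a fast control layer can be illustrated with a two-rate loop. The Python sketch below is a simplified illustration and not XGSynBot's software: the `slow_planner` lookup table, the proportional `fast_controller`, and the tick counts are all assumptions chosen to show the pattern.

```python
from dataclasses import dataclass

@dataclass
class Plan:
    target: float  # hypothetical setpoint, e.g. a joint position

def slow_planner(instruction):
    # Stand-in for the high-level reasoning system: maps an
    # instruction to a setpoint. A real system would use an AI model.
    targets = {"raise": 1.0, "lower": 0.0}
    return Plan(target=targets.get(instruction, 0.5))

def fast_controller(position, plan, gain=0.2):
    # One tick of proportional control toward the planned target.
    return position + gain * (plan.target - position)

def run(instructions, ticks_per_plan=50):
    position = 0.0
    trace = []
    for instruction in instructions:
        plan = slow_planner(instruction)   # slow loop: once per instruction
        for _ in range(ticks_per_plan):    # fast loop: many ticks per plan
            position = fast_controller(position, plan)
        trace.append(position)
    return trace

trace = run(["raise", "lower"])
```

The planner runs once per instruction while the controller runs many times in between, so slow reasoning never blocks the fast loop that keeps motion stable.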

Such architectures are becoming increasingly common in embodied AI research, where systems must integrate perception, reasoning, and physical control simultaneously.

Building an Ecosystem Around Embodied AI

Alongside the robot launch, XGSynBot announced a broader ecosystem initiative called Project STARFIRE aimed at accelerating industrial adoption of embodied AI.

The initiative focuses on three areas: collaborative development with industrial partners, open hardware interfaces that allow third-party tools to connect with the robot platform, and gradual open-sourcing of datasets and software tools.

By encouraging external developers and manufacturers to build applications on the platform, the company hopes to create a plug-and-play ecosystem for industrial robotics.

Early interest from industry partners and investors has reportedly generated potential orders worth tens of millions of dollars following the launch event.

The Manufacturing Automation Challenge

Despite rapid progress in robotics and artificial intelligence, the manufacturing sector continues to face what some researchers describe as an “automation paradox”.

Advanced robotics systems have become increasingly capable, yet many remain difficult to deploy in environments where conditions include oil, dust, vibration, and constant operation.

This gap between prototype systems and durable production robots has become one of the central challenges in embodied AI.

XGSynBot’s strategy appears to focus less on building experimental humanoids and more on designing systems capable of surviving industrial use from the outset.

What This Signals for Embodied AI

The Z1 launch highlights an emerging shift in the robotics industry from highly specialized industrial robots toward adaptable machines capable of operating across multiple tasks.

For manufacturers facing labor shortages and increasingly complex production lines, such flexibility could prove valuable.

But achieving reliable performance in industrial environments remains a significant technical hurdle.

If companies like XGSynBot can deliver robots that combine AI-driven flexibility with the durability required for factory operations, embodied AI may begin to move from experimental demonstrations to everyday industrial infrastructure.

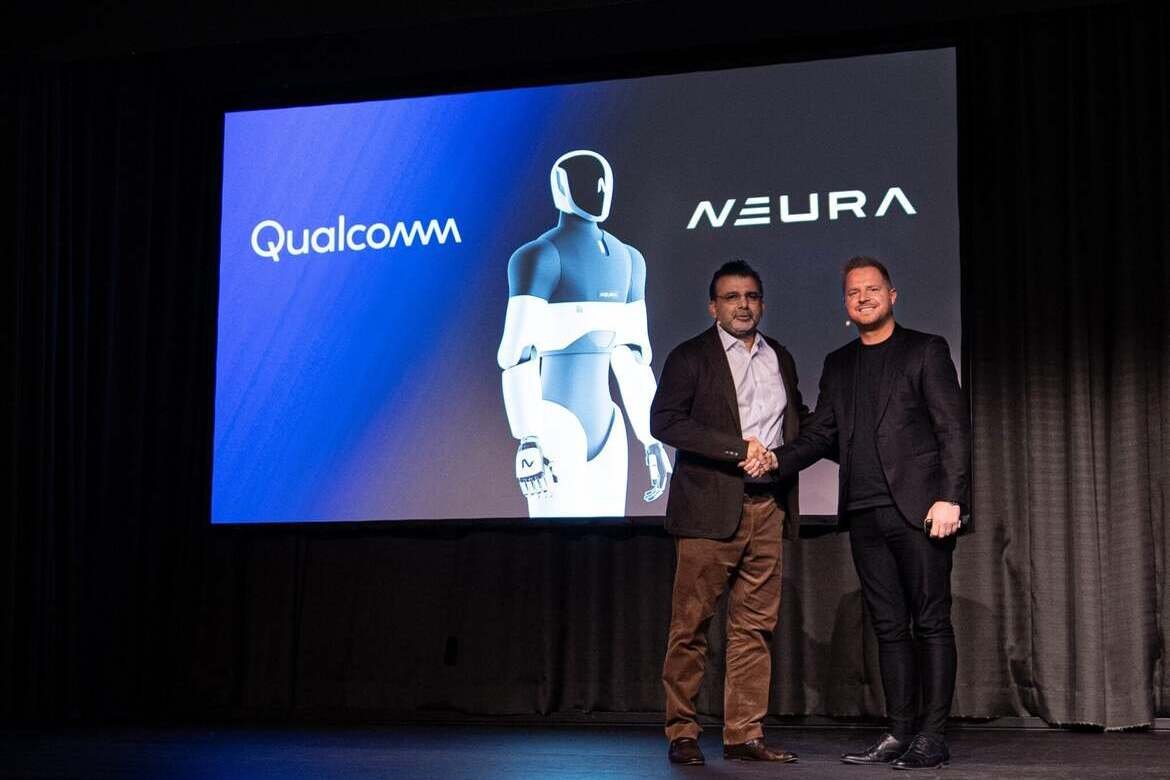

Qualcomm and NEURA Robotics Form Alliance to Advance Physical AI Platforms

Qualcomm and NEURA Robotics have announced a strategic collaboration to develop next-generation robotics platforms, combining edge AI processors with full-stack robotics systems to accelerate the deployment of cognitive robots.

The race to build general-purpose robots capable of operating alongside humans is increasingly becoming a partnership-driven effort. Semiconductor company Qualcomm and German robotics developer NEURA Robotics have announced a long-term strategic collaboration aimed at accelerating the development of physical AI and cognitive robotics systems.

The partnership combines Qualcomm’s edge AI computing platforms with NEURA’s full-stack robotics hardware and embodied AI software, with the goal of enabling scalable robots that can operate safely in industrial, service, and household environments.

The announcement reflects a growing convergence between semiconductor companies and robotics developers as the industry works to move physical AI from experimental prototypes to commercially deployable machines.

Building the “Brain and Nervous System” of Robots

At the center of the collaboration is a joint effort to develop what the companies describe as a “brain plus nervous system” architecture for robots.

In this framework, high-level cognitive functions such as perception, reasoning, and planning operate alongside real-time control systems responsible for motion and interaction with the physical world.

Qualcomm’s role in the partnership focuses on providing the computing layer. Its Dragonwing robotics processors and edge AI platforms are designed to handle demanding workloads locally on robotic systems, allowing machines to make decisions instantly without relying on cloud connectivity.

NEURA Robotics will integrate those processors into its robotics platforms and embodied AI software stack, which powers a range of robotic systems including industrial manipulators, mobile robots, service robots, and humanoid machines.

The companies say the goal is to create standardized reference architectures that simplify how robotics developers design and deploy intelligent machines.

Moving Robotics from Research to Deployment

While robotics research has advanced rapidly in recent years, many systems remain difficult to scale commercially due to fragmented hardware and software ecosystems.

By combining computing platforms with standardized robotics architectures, Qualcomm and NEURA say they hope to accelerate commercialization of robots capable of operating reliably in real-world environments.

Robotics presents one of the most demanding edge AI workloads. Unlike many cloud-based AI applications, robots must process sensory data and make decisions instantly to ensure safe interaction with people and objects.

Nakul Duggal, executive vice president and general manager for automotive, industrial, and embedded IoT at Qualcomm Technologies, said the collaboration reflects a broader shift toward bringing intelligence directly onto devices.

“Robotics represents one of the most demanding edge AI use cases, where decisions must happen instantly, reliably, and locally,” Duggal said.

The companies plan to develop deployment standards that allow AI workloads to be updated and validated across fleets of robots while maintaining the deterministic performance required for safety-critical environments.

Creating a Shared Robotics Ecosystem

Another component of the collaboration is a developer ecosystem designed to encourage third-party robotics applications.

NEURA’s cloud platform, called Neuraverse, will serve as an environment for training, simulation, and lifecycle management of robotic systems running on Qualcomm’s processors.

The platform is designed to connect robots into a shared network where improvements learned by one system can be distributed across others.

In theory, such architectures could allow fleets of robots to continuously improve their capabilities through shared data and software updates, accelerating development cycles for robotics companies.

The partners also aim to create a marketplace for robotics applications, enabling developers to build software once and deploy it across multiple robot platforms.

What the Partnership Signals for the Robotics Industry

The collaboration highlights a broader trend in the robotics sector: the increasing importance of computing infrastructure and ecosystem development.

For decades, robotics progress was largely driven by advances in mechanical engineering and control systems. Today, artificial intelligence and high-performance computing are becoming equally central to how robots perceive their surroundings, make decisions, and interact with humans.

As a result, chipmakers are beginning to play a more direct role in shaping the robotics industry.

For NEURA Robotics, which is pursuing the development of general-purpose cognitive robots including humanoid systems, access to scalable computing platforms could accelerate its path to commercialization.

For Qualcomm, the partnership represents an opportunity to extend its edge AI and connectivity technologies into what many consider one of the next major computing platforms: intelligent machines operating in the physical world.

If successful, collaborations of this type could help define the architecture behind the next generation of robots, linking AI computing, software ecosystems, and physical machines into unified platforms.

PlusAI Unveils SuperDrive 6.0 for Commercial Autonomous Trucking

PlusAI has launched SuperDrive 6.0, an upgraded autonomous driving system designed for large-scale freight operations, adding night driving and construction zone handling as the company prepares for commercial deployment of driverless trucks.

Autonomous trucking developer PlusAI has introduced a new version of its self-driving system designed to support continuous freight operations, marking a step toward large-scale deployment of autonomous trucks in commercial logistics networks.

The company’s upgraded platform, called SuperDrive 6.0, adds capabilities for night driving and construction zone navigation – two conditions that have historically limited the operational range of autonomous freight vehicles.

PlusAI says the system is engineered for commercial-scale deployment rather than limited pilot testing, as the company moves closer to launching factory-built driverless trucks later this decade.

Expanding the Operational Envelope for Autonomous Trucks

Long-haul trucking has been viewed as one of the most promising applications for autonomous driving, largely because highway environments are more structured and predictable than dense urban traffic.

However, achieving reliable operations across a full 24-hour logistics cycle has required solving a range of technical challenges, including low-light perception, unpredictable road construction layouts, and complex interactions with other vehicles.

SuperDrive 6.0 introduces new capabilities designed to address those constraints. According to PlusAI, the system can now operate in construction zones and is expected to support night driving in upcoming releases.

These additions could allow autonomous trucks equipped with the system to run continuously on commercial freight routes.

“SuperDrive 6.0 isn’t an incremental update; it’s a major advancement of what an autonomous ‘brain’ can do,” said David Liu, PlusAI’s chief executive and co-founder. He said the expanded operating capabilities could enable autonomous trucks to run around the clock on logistics routes.

The software has been trained using more than seven million miles of real-world driving data collected across the United States, Europe, and Asia.

Improving the Economics of Autonomous Driving

Beyond new driving capabilities, the company says SuperDrive 6.0 introduces changes aimed at improving the economics of autonomous trucking.

Developing autonomous vehicle software typically requires large volumes of labeled data and extensive testing cycles, both of which contribute significantly to development costs.

PlusAI said the latest version of its system uses a combination of autolabeling, imitation learning, and reinforcement learning to accelerate AI training. According to the company, the approach has increased training speed tenfold and delivered a roughly threefold reduction in data labeling costs.

Those improvements allow new features to move from internal validation to real-world deployment more quickly, compressing development timelines and reducing the cost of expanding into new operating environments.

A New Architecture for Commercial Deployment

At the hardware level, SuperDrive 6.0 uses a distributed computing architecture built around multiple high-performance system-on-chip processors, including NVIDIA’s DRIVE Orin and Thor platforms.

The system is designed to maintain performance under conditions that can disrupt autonomous driving hardware, such as partial sensor failures, calibration drift, or degraded environmental perception.

PlusAI says resilience under these conditions is essential for commercial freight operations, where vehicles must operate for long periods without interruption.

A key component of the software stack is a transformer-based layer called Reflex, part of the company’s AV 2.0 architecture. This system integrates perception and motion forecasting, allowing the vehicle to predict the behavior of nearby traffic participants.

According to the company, the motion forecasting model doubles prediction accuracy for dynamic actors such as merging vehicles, pedestrians, and lane-changing traffic.

Toward Driverless Freight Networks

Autonomous trucks equipped with earlier versions of SuperDrive are already transporting commercial freight on routes in Texas, though they still operate within supervised environments.

PlusAI says its long-term goal is to deploy factory-built autonomous trucks capable of operating without human drivers by 2027.

The company is also preparing to go public through a merger with the special purpose acquisition company Churchill Capital Corp IX, a move that could provide additional capital for scaling its technology and expanding commercial partnerships.

The combination of improved AI systems and growing industry investment suggests that autonomous trucking is moving from experimental pilots toward operational logistics networks.

Whether those systems can operate reliably across the full complexity of real-world highways remains one of the central questions facing the autonomous vehicle industry.

Agility Robotics Rebrands as Humanoid Market Competition Intensifies

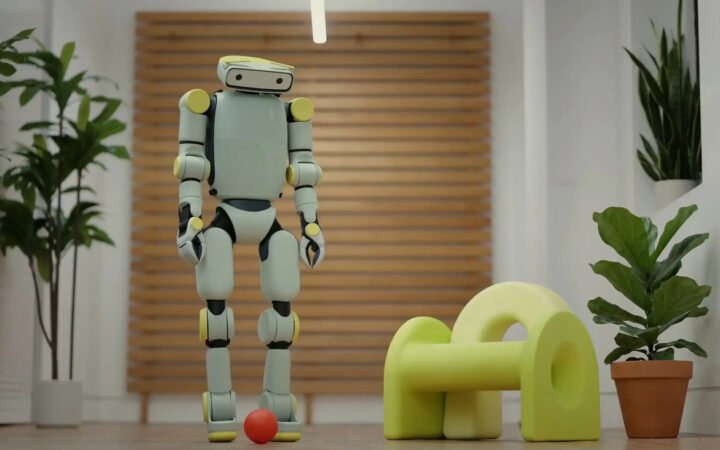

Agility Robotics has rebranded as Agility, signaling plans to expand beyond its current humanoid robot deployments as competition accelerates across the global humanoid robotics market.

Agility Robotics, one of the early developers of commercial humanoid robots, has rebranded as Agility, dropping “Robotics” from its name as the company positions itself for broader growth in the rapidly expanding humanoid automation market.

The Oregon-based company said the change reflects an effort to align its brand with a wider set of applications and services as humanoid robots move from pilot deployments into real industrial operations.

The rebrand comes as competition among humanoid robot developers intensifies globally, with companies racing to bring adaptable machines into warehouses, factories, and logistics centers.

A Shift Toward Commercial Scale

Agility has been among the first companies to deploy humanoid robots in real-world logistics environments.

Its flagship robot, Digit, is designed to handle tasks such as transporting containers and materials within warehouse facilities. The robot’s bipedal design allows it to navigate environments originally built for human workers, including stairs, narrow aisles, and loading docks.

The company recently announced a deployment agreement with Toyota Canada following a year-long pilot program evaluating Digit’s performance in industrial operations.

Toyota joins a growing list of large companies testing or deploying the robot, including logistics provider GXO Logistics, automotive supplier Schaeffler, and e-commerce giant Amazon.

These deployments represent a shift in the humanoid robotics sector from experimental pilots toward commercial use cases where robots operate continuously alongside human workers.

Branding for a Broader Robotics Ecosystem

According to the company, removing “Robotics” from its name reflects an intention to expand beyond a single product category.

In a statement explaining the change, Agility said the simplified brand allows room to explore new industries, partnerships, and services as humanoid automation becomes more widely adopted.

The company also introduced a new logo and visual identity intended to emphasize movement, durability, and reliability, characteristics associated with its hardware and software systems.

Daniel Diez, Agility’s chief business officer, said the rebrand signals the company’s readiness to scale its technology across multiple sectors.

“With our rebrand to Agility, we’re signaling our readiness to scale beyond our current deployments and our ability to lead the adoption of humanoids across many new industries,” Diez said.

The Race to Deploy Humanoid Robots

Agility’s repositioning comes at a moment when the humanoid robotics market is expanding rapidly.

Technology companies and robotics startups around the world are developing humanoid machines capable of performing tasks that traditional industrial robots cannot easily handle. These systems are designed to operate in human-centered environments and perform a range of tasks rather than a single repetitive motion.

Agility has positioned Digit as a “cooperatively safe” humanoid robot intended to operate alongside workers without requiring extensive safety barriers.

The company says it remains on track to deliver the first cooperatively safe humanoid robot in 2026, a milestone that could accelerate the adoption of humanoid automation in logistics and manufacturing environments.

Meanwhile, other robotics companies are pursuing similar goals, targeting industries facing labor shortages and increasing demand for flexible automation.

What the Rebrand Signals for the Industry

Agility’s brand change reflects a broader transformation occurring across the robotics industry.

As humanoid robots transition from research projects to commercial products, companies are increasingly positioning themselves not only as hardware manufacturers but as providers of integrated automation systems.

That shift often includes software platforms, operational services, and partnerships with major industrial customers.

For early entrants such as Agility, maintaining a leadership position may depend less on technical prototypes and more on the ability to scale deployments, integrate with industrial workflows, and demonstrate reliable performance over time.

The company’s rebrand suggests that the next phase of the humanoid robotics market will focus on moving beyond pilot programs toward widespread commercial adoption.

OpenAI Robotics Leader Resigns After Pentagon AI Agreement

Caitlin Kalinowski, head of OpenAI’s robotics and hardware group, has resigned after raising concerns about the company’s agreement to make its AI systems available within U.S. Department of Defense computing environments.

The leader of OpenAI’s robotics and hardware initiatives has resigned after raising concerns about the company’s agreement with the U.S. Department of Defense, highlighting growing tensions over how advanced artificial intelligence technologies should be used in military settings.

Caitlin Kalinowski, who joined OpenAI in late 2024 to lead the company’s renewed robotics and hardware group, stepped down over the weekend following the announcement of a defense agreement that would allow OpenAI’s AI systems to be deployed within secure Pentagon computing environments.

In public statements posted on social media, Kalinowski said the decision was driven by concerns about governance and the potential risks associated with surveillance and autonomous weapons systems.

“I resigned from OpenAI,” Kalinowski wrote. “AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got.”

A Dispute Over AI Governance

OpenAI announced its agreement with the Department of Defense in late February. The deal allows the company’s generative AI technology to operate within classified government systems, expanding the use of advanced AI models in national security environments.

The arrangement reflects growing demand from defense agencies for access to large-scale AI systems capable of analyzing data, generating intelligence summaries, and assisting with operational planning.

But the partnership has also intensified debate inside the technology sector about the ethical and governance frameworks surrounding military applications of artificial intelligence.

In a follow-up message explaining her resignation, Kalinowski said her primary concern was not the people involved but the process behind the announcement.

“To be clear, my issue is that the announcement was rushed without the guardrails defined,” she wrote, describing the matter as a governance issue that required deeper deliberation.

OpenAI CEO Sam Altman has said the agreement includes safeguards designed to prevent the company’s technology from being used for mass surveillance or fully autonomous weapons systems.

Robotics and Defense Technology

Kalinowski’s departure is notable in part because she led one of OpenAI’s most strategically important emerging areas: robotics and physical AI.

The company revived its robotics efforts in recent years as advances in machine learning and large language models began to influence the development of autonomous machines. Kalinowski was recruited to lead that effort and help scale the company’s hardware initiatives.

Before joining OpenAI, she led augmented reality hardware development at Meta, where she oversaw teams building next-generation AR glasses.

Although OpenAI’s robotics research has historically focused on manipulation and learning systems rather than military hardware, the broader intersection of AI, robotics, and defense technology has become increasingly complex.

AI systems capable of perception, planning, and decision-making are now being integrated into a wide range of autonomous platforms, including drones, surveillance systems, and logistics automation.

A Wider Debate Across the AI Industry

The controversy surrounding OpenAI’s defense agreement is part of a broader debate unfolding across the AI sector.

The Pentagon has recently encouraged leading AI companies to make their technologies available for “all lawful purposes,” a position that has sparked pushback from some developers concerned about how their systems might ultimately be used.

Anthropic, another major AI company, previously attempted to negotiate a separate agreement with the Defense Department that would include explicit limitations on the use of its models for domestic surveillance or fully autonomous weapons systems.

After those negotiations ended without an agreement, the Pentagon reportedly designated Anthropic as a supply-chain risk, a classification the company has said it intends to challenge in court.

These disputes highlight the increasingly strategic role that AI developers play in national security infrastructure.

What This Signals for AI and Robotics

Kalinowski’s resignation illustrates the growing pressure facing companies developing advanced AI systems as governments seek access to their technologies.

While many researchers agree that AI can play a role in national security applications, the boundaries between defensive use, surveillance, and autonomous weapons remain contentious.

For companies building the next generation of robotics and physical AI systems, those questions may become even more significant.

Autonomous machines capable of operating in the physical world introduce new layers of risk and responsibility compared with purely digital AI systems. Decisions about governance, safety, and oversight will likely shape not only how the technology evolves but also who controls it.

As governments and technology companies deepen their relationships around AI infrastructure, debates over ethical guardrails and transparency are likely to intensify.